Cloud Training

One click, one model size, one GPU class — and a trained model when you come back.

Local training is fine for small experiments. Production training wants more GPU than your laptop has, in less time than spinning up a cloud instance takes. Platform cloud training runs are managed: pick a model and a dataset version, click train, walk away. The artifacts come back the same shape as the train mode outputs you already know from local runs.

Launch a training run on Platform, configure the right defaults for your task, and pull the trained checkpoint locally.

Pick model size based on your latency budget (course 2 lesson 3).

Pick GPU class based on dataset size and patience.

Default hyperparameters are good — change only with a reason.

Pull the resulting best.pt for local validation.

Hands-on

Launch a cloud training run

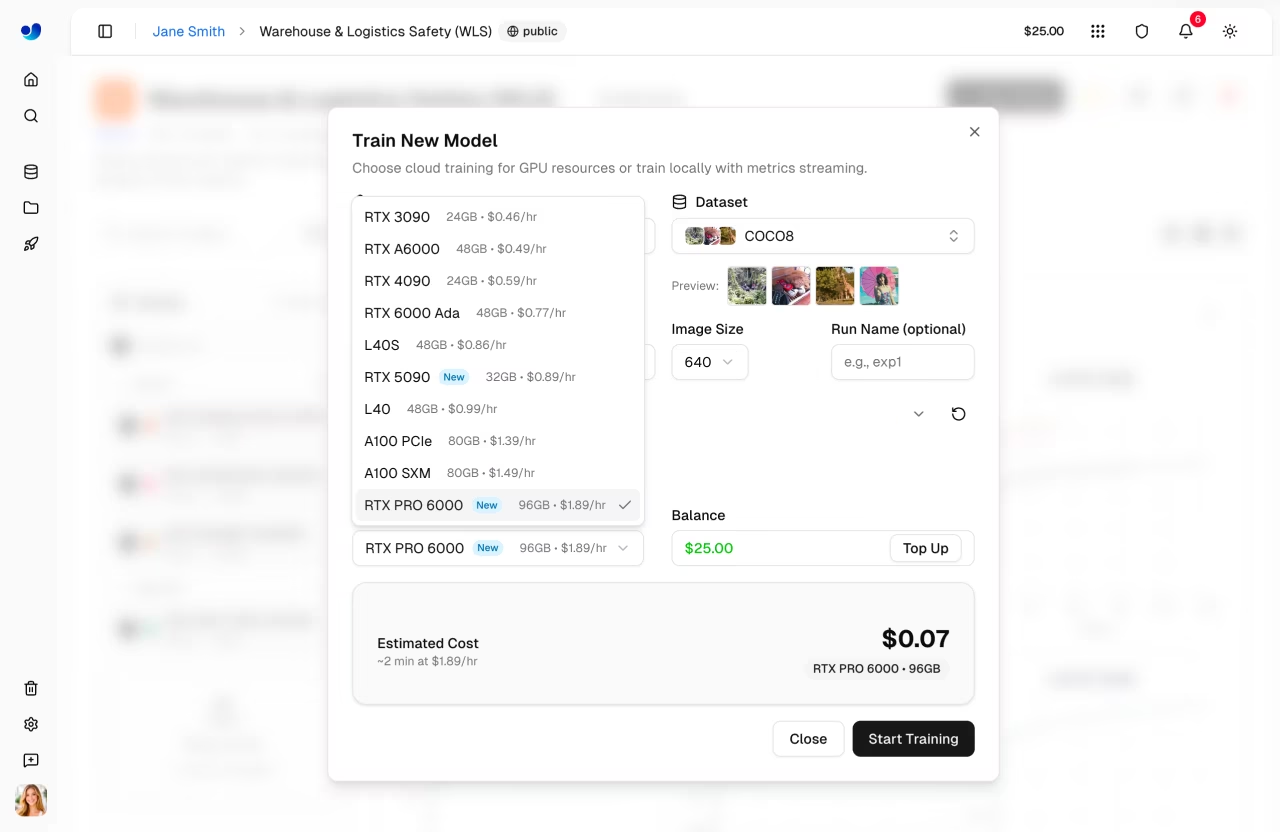

In the Platform UI: Train → New Run. Pick:

| Setting | Default | When to change |
|---|---|---|
| Dataset | (your dataset version) | Always pick a tagged version, never "latest" |
| Model | yolo26n | Larger only if benchmarks demand it |
| Epochs | 100 | Lower for tiny datasets, higher for very large ones |
| Image size | 640 | Increase for small objects, decrease for speed |
| Batch size | auto | Manually set to fit GPU memory or stabilize gradients |
| Learning rate | 0.01 | Lower (0.001) when fine-tuning from a previous best.pt |
| GPU class | T4 / A10 / L40S / H100 | T4 for cheap iterations, H100 for largest models |
| Run name | (auto) | Override with a descriptive name |

The defaults inherit from the train mode reference — the same flags you'd pass to model.train(...) locally. When you start fine-tuning a previous checkpoint, the transfer learning glossary entry covers the why behind the lower LR; for systematic sweeps, the hyperparameter tuning guide is the canonical reference.
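As a rough illustration of that mapping, the sketch below builds the keyword arguments that correspond to the table's defaults. The argument names (`data`, `epochs`, `imgsz`, `batch`, `lr0`, `name`) are the ones Ultralytics' local train mode accepts. The helper names, the dataset YAML path, and the `yolo26n.pt` weights filename are assumptions made for illustration, and `batch=-1` is assumed here to be the local counterpart of the UI's "auto".

```python
def build_train_kwargs(data, fine_tune=False, run_name=None):
    """Return model.train(...) keyword arguments mirroring the table's defaults."""
    kwargs = {
        "data": data,    # dataset YAML for a tagged dataset version
        "epochs": 100,
        "imgsz": 640,
        "batch": -1,     # -1 asks Ultralytics to choose a batch size automatically
        "lr0": 0.001 if fine_tune else 0.01,  # lower LR when starting from a previous best.pt
    }
    if run_name:
        kwargs["name"] = run_name  # descriptive run name instead of the auto-generated one
    return kwargs


def train_locally(weights, **kwargs):
    """Run the equivalent local job (requires `pip install ultralytics`)."""
    from ultralytics import YOLO  # imported lazily so the helper above works without it

    return YOLO(weights).train(**kwargs)


# Hypothetical dataset file and run name, for illustration only.
print(build_train_kwargs("forklift_v2.yaml", fine_tune=True, run_name="forklift_v2_more_pallets"))
```

Calling `train_locally("yolo26n.pt", **build_train_kwargs("forklift_v2.yaml"))` would then start a local run with the same settings, assuming those files exist on your machine.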

Pick the GPU thoughtfully

| GPU | Cost per hour (relative) | Speed (relative) | Best for |
|---|---|---|---|
| T4 | 1× | 1× | Small datasets, yolo26n/s, learning |
| A10 | 2× | 2× | Default workhorse for yolo26m |
| L40S | 4× | 4× | Larger datasets and yolo26l/x |
| H100 | 8× | 6–8× | Very large datasets, fastest iteration |

Cost roughly doubles with each tier, and speed roughly keeps pace, except at the top, where H100 delivers 6–8× the speed for 8× the price. For most academy-scale projects, T4 or A10 is the right call. H100 is for when you're paying for engineer time more than GPU time, and is also where distributed training and mixed precision start to pay back the configuration overhead.
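To make the trade-off concrete, here is a small worked calculation using only the relative numbers from the table. It assumes a job that would take 8 hours on a T4 (a made-up figure) and that speed scales exactly as the table suggests, which real jobs may not achieve.

```python
# Relative hourly cost and speed, copied from the table (H100 speed is a range).
GPUS = {
    "T4":   {"cost": 1, "speed": (1, 1)},
    "A10":  {"cost": 2, "speed": (2, 2)},
    "L40S": {"cost": 4, "speed": (4, 4)},
    "H100": {"cost": 8, "speed": (6, 8)},
}


def relative_run(gpu, t4_hours=8.0):
    """Return ((best_hours, worst_hours), (best_cost, worst_cost)) for a job
    that takes `t4_hours` on a T4. Cost is expressed in T4-hour equivalents."""
    cost = GPUS[gpu]["cost"]
    slow, fast = GPUS[gpu]["speed"]
    hours = (t4_hours / fast, t4_hours / slow)
    total = (cost * hours[0], cost * hours[1])
    return hours, total


for name in GPUS:
    hours, total = relative_run(name)
    print(f"{name:5s} {hours[0]:.2f}-{hours[1]:.2f} h, cost {total[0]:.2f}-{total[1]:.2f} T4-hours")
```

Under these numbers the total spend stays roughly flat from T4 through L40S while wall-clock time halves at each step. Only the H100 can cost more per run (up to about a third more), which is the price of the fastest iteration.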

Watch the run live

Every run has a live dashboard:

- Training/val loss — should both descend.

- Per-class AP — updates per epoch.

- Sample predictions — visually verify the model is learning what you think.

- Resource usage — GPU utilization should be > 80%; lower is wasted money.

Platform alerts you if a run looks unhealthy: loss diverging, GPU starved (input pipeline bottleneck), or out-of-memory.

The first 5 epochs tell you most of what you need. If loss isn't descending or sample predictions look random, kill the run and investigate before burning cost. The metric to watch is val mAP — if it's flat after 5 epochs, something's wrong.
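The five-epoch rule can be written down as a simple check. This is a minimal sketch, not a Platform feature: it assumes you have copied the per-epoch validation loss and val mAP from the dashboard into plain lists, and the `min_gain` threshold for "flat" is a hypothetical value you would tune.

```python
def early_check(val_loss, val_map, epochs=5, min_gain=0.01):
    """Decide whether a run is worth continuing after its first `epochs` epochs.

    val_loss, val_map: per-epoch values, oldest first.
    min_gain: hypothetical minimum mAP improvement for the curve to count as "not flat".
    """
    if len(val_loss) < epochs or len(val_map) < epochs:
        return "too early to tell"
    loss_descending = val_loss[epochs - 1] < val_loss[0]
    map_gain = val_map[epochs - 1] - val_map[0]
    if not loss_descending:
        return "kill: loss is not descending"
    if map_gain < min_gain:
        return "kill: val mAP is flat"
    return "keep going"


# Made-up numbers for illustration.
print(early_check([2.0, 1.8, 1.6, 1.5, 1.4], [0.02, 0.05, 0.09, 0.12, 0.15]))
```

A check like this does not replace looking at the sample predictions; it only covers the two numeric signals.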

Pulling the model locally

Every Platform run produces a downloadable best.pt. The file is identical to what you'd produce locally with the same config.

    ultralytics platform pull <run-id>  # CLI

    # Python
    from ultralytics import YOLO

    model = YOLO.from_platform("forklift_v2_more_pallets")  # by run name
    model.export(format="onnx")

You can now run inference, validate, and export exactly as in course 2.
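For the local validation step, a minimal sketch might look like the following. It assumes the pulled checkpoint is saved as `best.pt` in the working directory and that a dataset YAML (here the hypothetical `forklift_v2.yaml`) is available locally; `model.val(...)` and the `box.map` / `box.map50` fields are the standard Ultralytics validation API for detection models.

```python
def validate_checkpoint(weights="best.pt", data="forklift_v2.yaml"):
    """Validate a pulled checkpoint locally and return the headline box metrics."""
    from ultralytics import YOLO  # requires `pip install ultralytics`

    model = YOLO(weights)
    metrics = model.val(data=data)  # evaluates on the validation split named in the YAML
    return {
        "mAP50-95": float(metrics.box.map),
        "mAP50": float(metrics.box.map50),
    }
```

If the file really is identical to a local run with the same config, the numbers returned here should match what the Platform dashboard reported for the run.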

Resume / fine-tune

Same patterns as local. Resume an interrupted run:

    Train → resume run-XYZ

Or start a new run from a previous best.pt:

    Train → New Run
    Initial weights: <previous run's best.pt>
    LR: 0.001

What a healthy run looks like

After ~30 epochs of training a small model on a clean dataset, you should see:

- Train and val loss both descending (not diverging).

- Val mAP@0.5:0.95 climbing past 0.4.

- Per-class AP not too unbalanced.

- Sample predictions visually correct.

If any of those don't hold by epoch 30, the dataset usually needs work — not the hyperparameters. The next lesson on experiment tracking gives you the tools to compare runs and find the dataset change that fixed things, and the Comet integration shows how to mirror Platform metrics into a third-party dashboard if your team already lives there.
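The numeric parts of that checklist can be sketched as a function. The 0.4 mAP target comes from the list above; the `max_ap_spread` cutoff for "too unbalanced" is a hypothetical value, and the inputs are assumed to be values you have read off the dashboard. Whether sample predictions look correct still needs a human.

```python
def healthy_at_epoch_30(train_loss, val_loss, val_map, per_class_ap,
                        map_target=0.4, max_ap_spread=0.3):
    """Return the list of failed checks (an empty list means the run looks healthy).

    train_loss, val_loss, val_map: per-epoch values, oldest first.
    per_class_ap: mapping of class name to AP at the latest epoch.
    max_ap_spread: hypothetical cutoff for the gap between best and worst class AP.
    """
    failed = []
    if not (train_loss[-1] < train_loss[0] and val_loss[-1] < val_loss[0]):
        failed.append("loss not descending")
    if val_map[-1] <= map_target:
        failed.append("val mAP@0.5:0.95 below target")
    spread = max(per_class_ap.values()) - min(per_class_ap.values())
    if spread > max_ap_spread:
        failed.append("per-class AP unbalanced")
    return failed


# Made-up values for illustration.
print(healthy_at_epoch_30([2.0, 1.0], [2.1, 1.2], [0.1, 0.45], {"forklift": 0.5, "pallet": 0.4}))
```

A non-empty result is a prompt to inspect the dataset (labels, class balance, splits) before touching hyperparameters.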

Launch a Platform run on your dataset with yolo26n for 100 epochs. While it trains, browse the live dashboard. Note GPU utilization: the target is above 80%, and if it sits below 70%, your input pipeline (image loading) is the bottleneck.

You've launched and completed a Platform training run.

You can pull the resulting best.pt locally.

You've seen the live dashboard and watched at least one run end-to-end.

Real CV work is many runs, not one. Next: experiment tracking and choosing the best model.