Curate, Dedup, and Split

From a labeled pile to clean train/val/test splits ready for training.

A labeled dataset isn't a usable dataset. Before training you need to clean duplicates, balance classes, set validation and test splits that don't leak, and version everything so you can reproduce a result months later. Platform datasets make most of this point-and-click — but the decisions are yours.

Curate a labeled dataset into train/val/test splits, balanced and dedup'd, and version it so the next training run is reproducible.

Dedup again post-labeling — labels can reveal duplicates that pixel hashes missed.

Stratify class distribution across splits.

Split by the right unit (scene, camera, or day) to prevent leakage.

Version the dataset; tag the version used by every training run.

Hands-on

Post-labeling dedup

Even after Discovery dedup, you'll find duplicates after labeling. Why? Because:

- Two visually similar frames with different labels are not true duplicates: the mismatch usually means one of them has a missed annotation.

- Two visually different frames with the same labels are near-duplicates in label space.

Platform exposes both views. The more useful one for dataset quality is the second: it surfaces frames where the labels are essentially identical and the visual difference doesn't matter for training.
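If you want to reproduce the label-space view outside Platform, the idea can be sketched in a few lines. This is a minimal illustration, not Platform's method: it assumes labels are normalized `(class, cx, cy, w, h)` boxes, and the function names and the `grid` tolerance are hypothetical.

```python
from collections import defaultdict

def label_signature(boxes, grid=0.05):
    """Quantize normalized (class, cx, cy, w, h) boxes so near-identical labels collide.

    `grid` is an assumed tolerance: coordinates are snapped to multiples of it.
    """
    return tuple(sorted(
        (cls, *(round(v / grid) for v in (cx, cy, w, h)))
        for cls, cx, cy, w, h in boxes
    ))

def label_space_duplicates(labels, grid=0.05):
    """Return groups of frame ids whose quantized labels are identical."""
    groups = defaultdict(list)
    for frame_id, boxes in labels.items():
        if not boxes:
            continue  # skip unlabeled frames so they are not all grouped together
        groups[label_signature(boxes, grid)].append(frame_id)
    return [sorted(ids) for ids in groups.values() if len(ids) > 1]

# Toy labels: a.jpg and b.jpg differ by about a hundredth of the image size.
labels = {
    "a.jpg": [("forklift", 0.50, 0.50, 0.20, 0.30)],
    "b.jpg": [("forklift", 0.51, 0.49, 0.20, 0.31)],
    "c.jpg": [("person", 0.10, 0.80, 0.05, 0.15)],
}
dups = label_space_duplicates(labels)
```

Quantizing is crude: two boxes that sit on either side of a grid boundary will not collide even when they are close, so treat the output as candidates to review rather than a list to delete.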

Class balance

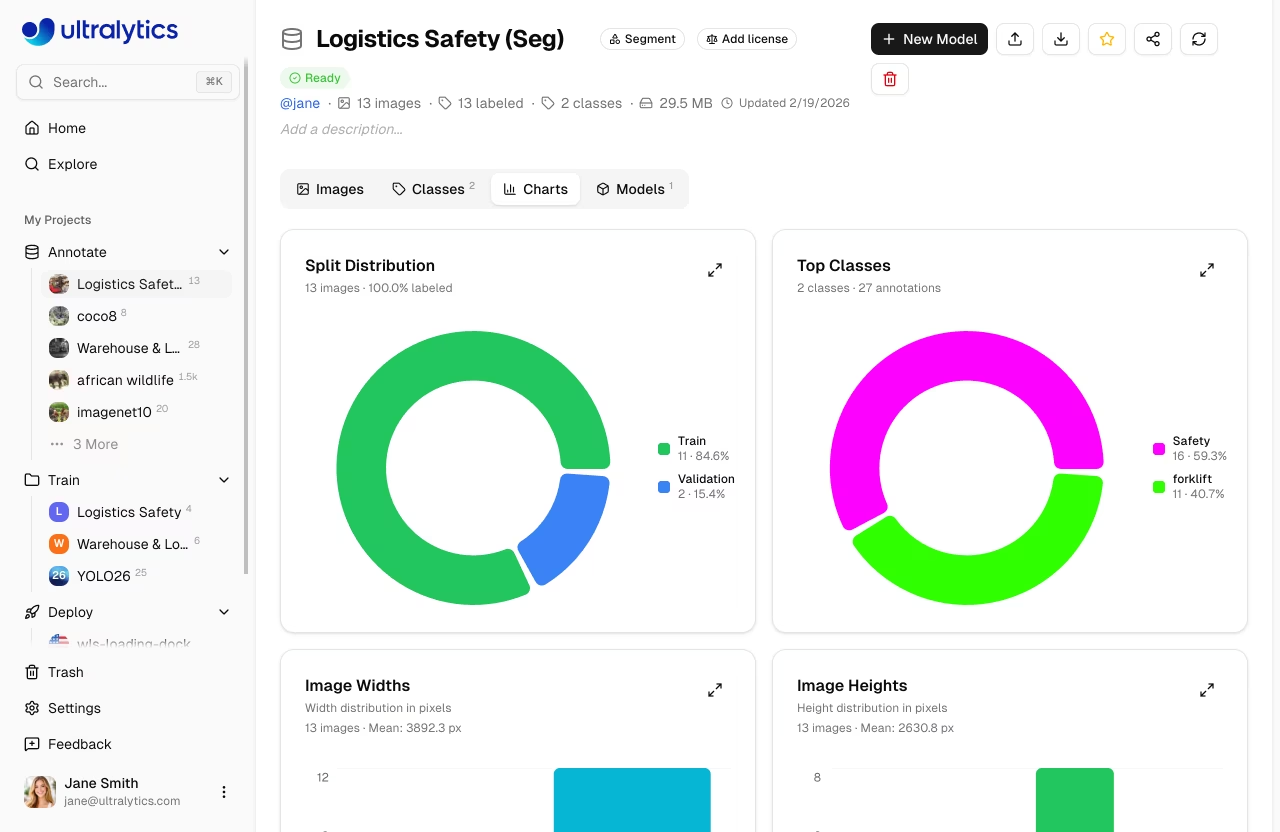

Look at a histogram of class counts:

forklift ████████████████████████ 800

person ████████████ 400

pallet ██ 70

If your val/test splits are random and pallet only has 70 examples total, val might end up with 7 of them — too few for a meaningful AP. Two fixes:

- Collect more pallets. Always the better answer.

- Stratified split. Force class proportions to match across splits — Platform does this automatically when you ask for a stratified split. The class-imbalance discussion in the data-collection guide is worth a re-read.

For severely imbalanced classes you may also want oversampling at training time plus aggressive data augmentation — both are knobs in the YOLO trainer.
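To see what a stratified split does to the rare class, here is a small sketch under stated assumptions. It is not how Platform implements stratification: the `stratified_split` helper is hypothetical, it assigns each image to the stratum of its rarest class (a simplification for multi-label detection data), and the 70/15/15 fractions are taken from the audit table later in this lesson.

```python
import random
from collections import Counter, defaultdict

def stratified_split(image_classes, fractions=(0.70, 0.15), seed=0):
    """Split image ids into train/val/test, dividing each stratum by the same fractions.

    image_classes maps image id -> list of class names in that image.
    Assumption: an image's stratum is its rarest class.
    """
    freq = Counter(c for classes in image_classes.values() for c in set(classes))
    strata = defaultdict(list)
    for image_id in sorted(image_classes):
        classes = set(image_classes[image_id]) or {"background"}
        rarest = min(classes, key=lambda c: (freq[c], c))
        strata[rarest].append(image_id)

    rng = random.Random(seed)  # fixed seed so the split is repeatable
    splits = {"train": [], "val": [], "test": []}
    for stratum in sorted(strata):
        ids = strata[stratum]
        rng.shuffle(ids)
        n_train = round(len(ids) * fractions[0])
        n_val = round(len(ids) * fractions[1])
        splits["train"] += ids[:n_train]
        splits["val"] += ids[n_train:n_train + n_val]
        splits["test"] += ids[n_train + n_val:]  # remainder goes to test
    return splits

# Toy data mirroring the histogram above, with one class per image.
image_classes = {}
for name, count in [("forklift", 800), ("person", 400), ("pallet", 70)]:
    for i in range(count):
        image_classes[f"{name}_{i:04d}.jpg"] = [name]

splits = stratified_split(image_classes)
per_split = {
    split: Counter(c for image_id in ids for c in image_classes[image_id])
    for split, ids in splits.items()
}
```

With these fractions, pallet gets roughly ten validation examples instead of whatever a random draw happens to produce. That is still thin, which is why collecting more pallets remains the better fix.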

Split by the right unit

You learned this in Computer Vision Foundations: random per-image splits leak when frames are correlated. On Platform, you can split by:

- Image (default, dangerous for video data).

- Scene (the source video or capture session).

- Camera (group all images from camera-1 together).

- Day (all images captured on the same day).

- Custom tag — anything you tagged in lesson 2.

The right choice is the one that matches the deployment generalization you care about. If users will deploy a new camera that's never been seen, split by camera. If users deploy on the same cameras but on different days, split by day. For very small datasets, k-fold splitting, a form of cross-validation, is the right way to squeeze out a meaningful signal.

Once you've trained against a split, results are tied to that split. Changing the split changes every metric. Decide carefully and document.
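The grouping idea is easy to sketch outside Platform. The example below is an illustration under assumptions, not Platform's implementation: the record fields (`image`, `scene`) and both helper names are hypothetical. It hashes the group key so every image from the same scene lands in the same split, and the assignment stays stable when new scenes are added later.

```python
import hashlib

def assign_split(group_key, val_pct=15, test_pct=15):
    """Map a group key (scene, camera, or day) to a split deterministically."""
    digest = hashlib.sha256(group_key.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in 0..99
    if bucket < test_pct:
        return "test"
    if bucket < test_pct + val_pct:
        return "val"
    return "train"

def split_by_group(records, key="scene"):
    """Assign each record to a split based only on its group key."""
    splits = {"train": [], "val": [], "test": []}
    for rec in records:
        splits[assign_split(rec[key])].append(rec["image"])
    return splits

# Toy records: three frames from each of four capture sessions.
records = [
    {"image": f"{scene}_f{i:03d}.jpg", "scene": scene}
    for scene in ["dock_am", "dock_pm", "aisle_3", "yard"]
    for i in range(3)
]
splits = split_by_group(records)

# Which splits does each scene appear in? Each set should have exactly one entry.
scene_to_splits = {}
for rec in records:
    scene_to_splits.setdefault(rec["scene"], set()).add(assign_split(rec["scene"]))
```

Hash-based assignment only approaches the target percentages when there are many groups, and it does not stratify by class, so check the per-split class counts afterwards.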

Version the dataset

Every training run should be tagged with the exact dataset version it used. Platform versions automatically — every save creates an immutable snapshot you can reference forever. This is the Platform version of the reproducibility discipline behind training data.

v1 forklift_dataset @ 2026-04-01 — 1200 images, 3 classes

v2 forklift_dataset @ 2026-04-15 — 1800 images, 3 classes (+ pallets)

v3 forklift_dataset @ 2026-05-01 — 2400 images, 4 classes (+ scissor lift)

A run logs which version it trained on; the version remains accessible by ID. The full datasets catalog follows the same convention — every published dataset has a stable identifier so notebooks and CI keep working.
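If you also keep your own run log, the same discipline can be sketched locally. This is an assumption-laden illustration and not a Platform API: `dataset_fingerprint` and `run_record` are hypothetical helpers, and the manifest is assumed to be a plain mapping from image path to label text.

```python
import hashlib
import json

def dataset_fingerprint(manifest):
    """Hash a manifest {image_path: label_text}, independent of insertion order."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

def run_record(run_name, dataset_name, version, manifest):
    """Bundle what a training run needs to log to be traceable to its data."""
    return {
        "run": run_name,
        "dataset": dataset_name,
        "version": version,
        "fingerprint": dataset_fingerprint(manifest),
    }

# Toy manifest with YOLO-style label lines (class id, cx, cy, w, h).
manifest = {
    "img_001.jpg": "0 0.50 0.50 0.20 0.30",
    "img_002.jpg": "1 0.10 0.80 0.05 0.15",
}
reordered = {k: manifest[k] for k in reversed(list(manifest))}
edited = dict(manifest, **{"img_002.jpg": "1 0.10 0.80 0.05 0.20"})

record = run_record("exp-01", "forklift_dataset", "v3", manifest)
```

The fingerprint changes whenever any label changes, so a run whose logged fingerprint no longer matches the dataset tells you the data moved underneath it.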

Sanity checks before training

The 5-minute pre-training audit:

| Check | What you're looking for |
|---|---|
| Class histogram per split | Each class shows up in train, val, and test in similar proportions |
| Sample 20 train labels | Boxes look right; class names match what you expect |
| Visualize 5 from each split together | No obvious cross-contamination (same scene appears in two splits) |
| Total counts | Train ~70%, val ~15%, test ~15% of objects, not images |

The third one is the leak check — easy to do, easy to skip, expensive to discover later.
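The leak check and the count checks can also be automated if you export split membership. The sketch below assumes a hypothetical export format, where each record carries a `scene` and a list of `classes`; it complements the visual checks rather than replacing them.

```python
from collections import Counter

def audit(splits):
    """splits: {split_name: [{"scene": str, "classes": [str, ...]}, ...]}

    Returns (leaks, class_counts, object_share):
      leaks        - scenes that appear in more than one split
      class_counts - per-split Counter of object classes
      object_share - fraction of all objects (not images) in each split
    """
    scenes = {name: {r["scene"] for r in recs} for name, recs in splits.items()}
    names = sorted(splits)
    leaks = {}
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            shared = scenes[a] & scenes[b]
            if shared:
                leaks[(a, b)] = sorted(shared)

    class_counts = {
        name: Counter(c for r in recs for c in r["classes"])
        for name, recs in splits.items()
    }
    total = sum(sum(c.values()) for c in class_counts.values())
    object_share = {
        name: (sum(c.values()) / total if total else 0.0)
        for name, c in class_counts.items()
    }
    return leaks, class_counts, object_share

# Toy export with a deliberate leak: scene s2 is in both train and val.
example = {
    "train": [
        {"scene": "s1", "classes": ["forklift", "person"]},
        {"scene": "s2", "classes": ["pallet"]},
    ],
    "val": [{"scene": "s2", "classes": ["forklift"]}],
    "test": [{"scene": "s3", "classes": ["person"]}],
}
leaks, class_counts, object_share = audit(example)
```

An empty `leaks` dictionary is the result you want. Swap `scene` for camera or day if that is the unit you split on.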

Promoting from raw to "production"

A useful Platform pattern: keep two dataset states.

- Working dataset. Where new annotations land. Sometimes messy.

- Production dataset. A version-tagged snapshot of the working dataset that has passed your audit. Training runs use the production dataset only.

This separation prevents "I trained on whatever was there at the time" — every run is reproducible, and pairs naturally with the active learning loop you'll run after each retrain.

Take your labeled dataset and run a post-labeling dedup. Then create a stratified train/val/test split by scene (or camera, depending on your data). Version it, then sanity-check class counts in each split.

Your dataset has stratified train/val/test splits.

Splits don't leak on the dimension that matters at deployment.

Each split has a representative class distribution.

You can identify the dataset version a future training run will use.

Clean dataset in hand, let's train on it with Platform's managed cloud GPUs.