From the first image to the first model

Computer Vision Foundations

Build the vocabulary, intuition, and first hands-on reflexes for modern computer vision — tasks, datasets, metrics, and what they mean for a real product.

By Ultralytics Academy

Match a product need to the right vision task — classification, detection, segmentation, pose, or OBB.

Decide between bounding boxes and segmentation masks based on shape, cost, and downstream use.

Plan datasets and annotation guidelines that match deployment conditions.

Read precision, recall, and mAP together with examples to find the next improvement.

Recognize the difference between training, validation, and test data — and why people get this wrong.

A one-page task spec for a vision project: what the model predicts, what it ignores, what shape the output takes.

A dataset plan that lists splits, edge cases, label rules, and review criteria.

A small evaluation worksheet that converts metrics into a decision: ship, iterate, or rethink.

Basic Python familiarity is helpful but not required — this course is concept-first.

Comfort installing software locally if you want to follow the optional `ultralytics` install in lesson 10.

Course content

4 modules · 10 lessons

Module 1

Module 2

The Five Vision Tasks

Match an output sentence to classification, detection, segmentation, pose, or oriented bounding boxes.

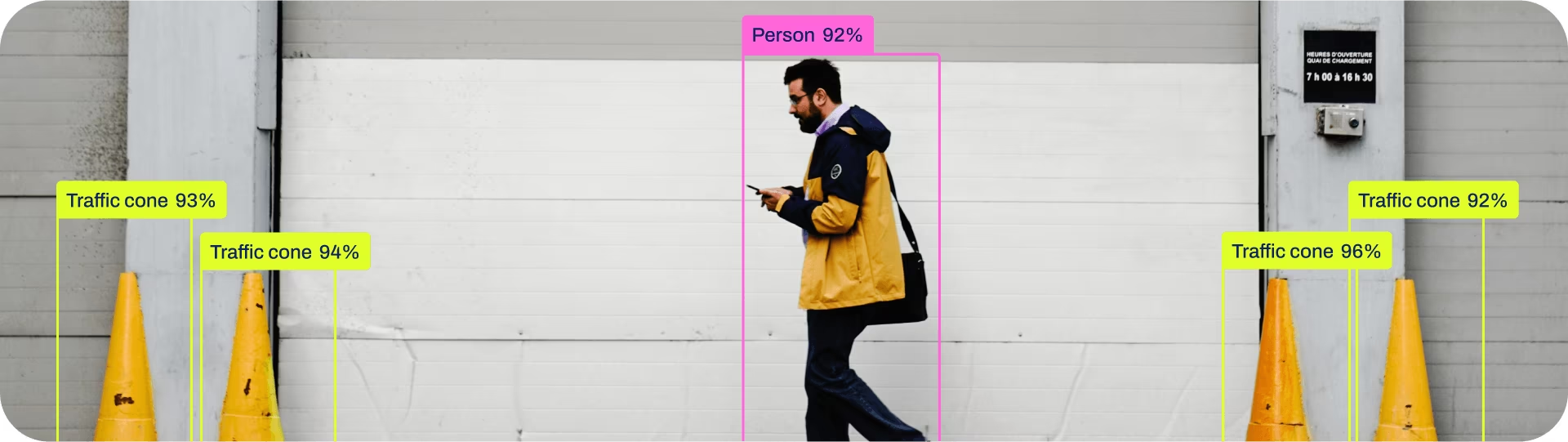

Understand Object Detection

Boxes, classes, and confidence — what detection actually returns and what it does not.

Boxes vs Masks: When You Actually Need Segmentation

Move from rectangles to pixels — and only when the shape genuinely matters.

Pose Estimation and OBB

Two specialized tasks and the kinds of problems where they shine.

Module 3

Module 4

Splits that Tell the Truth

Why training, validation, and test splits exist — and the leak that ruins half of them.

Reading Detection Metrics Honestly

Precision, recall, mAP — what they hide and how to look at them together.

From Lab to Production

The smallest end-to-end loop: install, predict, measure, decide.