Datasets that Reflect Reality

The model only knows what's in the dataset. Build the dataset like the model depends on it.

Most disappointing CV models aren't bad models — they're great models on a dataset that didn't match reality. The fix is rarely a bigger architecture. It's usually training data that looks more like what the model will see in production. Think of this lesson as the antidote to dataset bias before it bakes itself into your model.

Audit a dataset for distribution gaps before you train, and write a 5–10 item checklist of edge cases your dataset must include.

Sample your production environment first — then label.

Cover lighting, weather, occlusion, and camera differences.

Add hard negatives — backgrounds without the object — on purpose.

When you find a model failure, that failure becomes a labeled example.

Hands-on

Models learn the dataset, not the world

If you train on photos of cars on sunny streets and deploy in foggy parking garages at night, the model fails. The cars themselves haven't changed much; the model simply never saw one under those conditions, so it can't generalize to them. Validation accuracy won't warn you either, because the validation split is drawn from the same collection and shares the same blind spot. Unsupervised domain adaptation can sometimes paper over the gap but rarely fixes it fully.

The fix is structural, not algorithmic — start with data collection and annotation.

The four axes to cover

Most failures cluster into four buckets. Cover them deliberately.

- Lighting. Dawn, midday, dusk, indoor fluorescent, harsh sun, overcast, headlights, glare. Models that worked perfectly on lab data can crater when deployed at sunrise.

- Weather and atmosphere. Rain, fog, snow, dust. Even if your deployment is "indoor only," HVAC airflow patterns, condensation, and seasonal temperature swings change image stats more than people expect.

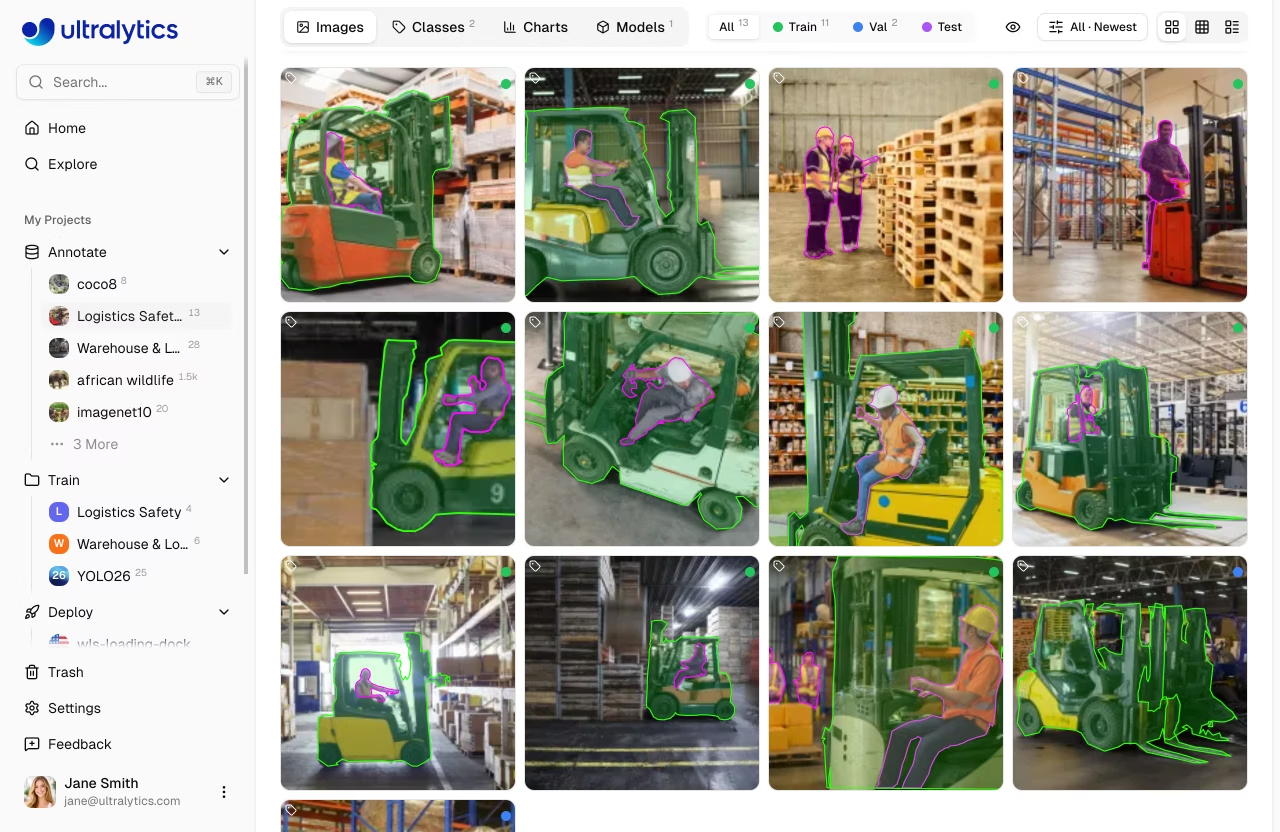

- Occlusion and clutter. Real scenes have objects in front of objects. Real warehouses have boxes in front of forklifts. Make sure your dataset has examples where the target is partly hidden by something benign.

- Camera variation. Different lenses, sensors, mount angles, image sizes, JPEG quality. A model trained on 4K cleanroom-quality images deployed on a 720p IP camera will misbehave.

A common, expensive mistake: collect 10,000 photos first, then realize they're all daytime. Sample from the deployment environment for a couple of weeks across the four axes before you spend a cent on labels. If real samples are scarce, synthetic data can help fill known gaps — but it's not a substitute for production-realistic captures.
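One way to keep an early sampling pass from collapsing into all-daytime footage is to pick frames per stratum rather than uniformly at random. The sketch below is only illustrative: the `lighting_bucket` hour boundaries, the `(frame_id, camera_id, timestamp)` tuple layout, and the function names are assumptions, not part of any particular tool, and capture hour is a rough proxy for lighting that you would tune per site.

```python
import random
from collections import defaultdict
from datetime import datetime


def lighting_bucket(ts: datetime) -> str:
    """Map a capture time to a coarse lighting bucket (hypothetical boundaries)."""
    hour = ts.hour
    if 5 <= hour < 8:
        return "dawn"
    if 8 <= hour < 17:
        return "day"
    if 17 <= hour < 20:
        return "dusk"
    return "night"


def sample_per_stratum(frames, per_stratum, seed=0):
    """Pick up to `per_stratum` frames from each (camera, lighting) stratum.

    `frames` is an iterable of (frame_id, camera_id, timestamp) tuples.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for frame_id, camera_id, ts in frames:
        strata[(camera_id, lighting_bucket(ts))].append(frame_id)

    picked = {}
    for key, ids in sorted(strata.items()):
        rng.shuffle(ids)
        picked[key] = sorted(ids[:per_stratum])
    return picked


# Made-up input: one frame per hour from two cameras over a single day.
frames = [
    (f"{cam}-{hour:02d}", cam, datetime(2024, 1, 1, hour))
    for cam in ("cam_a", "cam_b")
    for hour in range(24)
]

picked = sample_per_stratum(frames, per_stratum=2)
for key, ids in picked.items():
    print(key, ids)
```

Sampling the same number of frames from every stratum deliberately over-represents rare conditions such as dawn relative to how often they occur, which is usually what you want at the collection stage. Weather, occlusion, and other axes could be added to the stratum key the same way if that metadata is available.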

The hard-negatives trick

Detectors and segmenters need examples of "no, that's not the thing" as much as examples of the thing. Specifically, they need hard negatives: backgrounds that look superficially similar to the target. They also complement data augmentation, which broadens the conditions the model sees but can't introduce look-alike objects that were never in the data.

For a forklift detector that's deployed in a warehouse:

- A pallet jack (similar shape, not a forklift).

- A scissor lift in the corner of the frame.

- A reflection of a forklift in a polished floor.

- An empty corridor with the same lighting as the corridors you usually see forklifts in.

Add these with empty annotations: the image goes into the dataset, but it has no boxes. The model learns "this is the world without the target."
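In a COCO-style annotation file, an image with no boxes is simply an entry in `images` that no entry in `annotations` points to. As a rough sketch (the file names, IDs, and the `split_by_annotation` helper are made up for illustration), you can check how many such background images a dataset actually contains:

```python
def split_by_annotation(coco):
    """Return (positives, negatives): file names with and without any boxes."""
    annotated_ids = {ann["image_id"] for ann in coco["annotations"]}
    positives = [
        img["file_name"] for img in coco["images"] if img["id"] in annotated_ids
    ]
    negatives = [
        img["file_name"] for img in coco["images"] if img["id"] not in annotated_ids
    ]
    return positives, negatives


# Hypothetical COCO-style dataset: two forklift images, two hard negatives.
coco = {
    "categories": [{"id": 1, "name": "forklift"}],
    "images": [
        {"id": 1, "file_name": "forklift_0001.jpg", "width": 1280, "height": 720},
        {"id": 2, "file_name": "forklift_0002.jpg", "width": 1280, "height": 720},
        {"id": 3, "file_name": "pallet_jack_0001.jpg", "width": 1280, "height": 720},
        {"id": 4, "file_name": "empty_corridor_0001.jpg", "width": 1280, "height": 720},
    ],
    "annotations": [
        # bbox is [x, y, width, height] in pixels.
        {"id": 10, "image_id": 1, "category_id": 1, "bbox": [100, 200, 300, 250],
         "area": 75000, "iscrowd": 0},
        {"id": 11, "image_id": 2, "category_id": 1, "bbox": [400, 180, 280, 240],
         "area": 67200, "iscrowd": 0},
    ],
}

positives, negatives = split_by_annotation(coco)
print(f"{len(negatives)} of {len(coco['images'])} images are backgrounds: {negatives}")
```

Whether a given training framework keeps or silently drops images without annotations varies, so it is worth confirming that your loader actually feeds these images to the model.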

A simple distribution audit

Before training, run this 5-minute data cleaning check:

| Question | What to look at |
|---|---|
| Are all classes equally represented? | Histogram of class counts. If one class is 10× rarer, expect bad recall on it. |
| Are all environments represented? | Cross-tabulate class × environment (camera, time of day, weather). Empty cells are gaps. |
| Are images visually similar to production? | Pull 10 random training images and 10 production samples. Show them to a teammate. Can they tell which is which? If yes, you have a domain gap. |
| Are bounding boxes consistent? | Spot-check 50 boxes. Inconsistent labeling looks like noise to the model. |

The output of this audit is a list of gaps — combinations you don't have data for. Those gaps become your collection plan. For benchmark coverage and inspiration, the COCO dataset and Open Images V7 are useful comparisons; for end-to-end labeling pipelines see the Roboflow integration.
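The first two rows of the audit can be sketched in a few lines of standard-library Python. This is a minimal illustration, assuming you can export one `(class_name, environment)` pair per labeled object; the `audit` function, its `imbalance_ratio` parameter, and the sample records are hypothetical.

```python
from collections import Counter
from itertools import product


def audit(records, environments, imbalance_ratio=10):
    """Summarize class balance and class x environment coverage.

    records: iterable of (class_name, environment) pairs, one per labeled object.
    environments: every environment you expect in production, including ones
        with no data yet (otherwise an entirely missing environment is invisible).
    """
    records = list(records)
    class_counts = Counter(cls for cls, _ in records)
    cell_counts = Counter(records)
    classes = sorted(class_counts)

    # Empty cells in the class x environment cross-tab are the gaps.
    gaps = [
        (cls, env)
        for cls, env in product(classes, environments)
        if cell_counts[(cls, env)] == 0
    ]

    # Classes at least `imbalance_ratio` times rarer than the largest class.
    largest = max(class_counts.values())
    rare = [cls for cls in classes if class_counts[cls] * imbalance_ratio <= largest]
    return class_counts, gaps, rare


# Made-up label export for a warehouse project.
records = (
    [("forklift", "day")] * 50
    + [("forklift", "night")] * 10
    + [("person", "day")] * 6
)
environments = ["day", "night", "fog"]

class_counts, gaps, rare = audit(records, environments)
print("class counts:", dict(class_counts))
print("gaps:", gaps)
print("rare classes:", rare)
```

Passing the expected environments explicitly matters: if the list were derived from the data, a condition you never captured would not show up as a gap at all.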

Sketch a one-page audit table for your project. Rows = classes; columns = environments (lighting/weather/camera/occlusion). Mark which cells you already have data for. The empty cells are your priority list before training.

You can name the four axes of distribution coverage.

You've added at least one hard-negative category to your collection plan.

You've described the deployment environment in enough detail that a colleague could go collect more data without you.

Now that the dataset reflects reality, we'll talk about how to actually label it without going broke.