Boxes vs Masks: When You Actually Need Segmentation

Move from rectangles to pixels — and only when the shape genuinely matters.

Instance segmentation is what people imagine when they imagine "AI vision." It's also where teams burn the most annotation budget. The trick is to know exactly which downstream consumers need shape, give masks only to those consumers, and keep bounding boxes for everything else.

Decide whether your project needs masks or whether boxes are sufficient — with a one-line justification.

Boxes give location. Masks give shape.

If a downstream metric uses area or boundary, you need masks.

Masks cost roughly 5× more to label than boxes.

When in doubt, start with boxes; upgrade later.

Hands-on

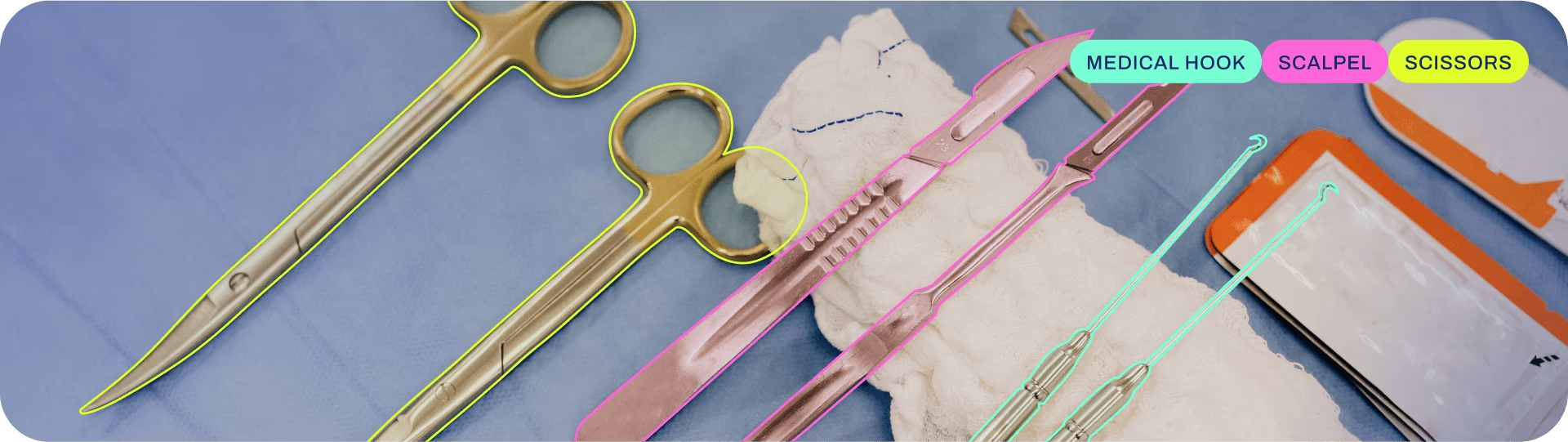

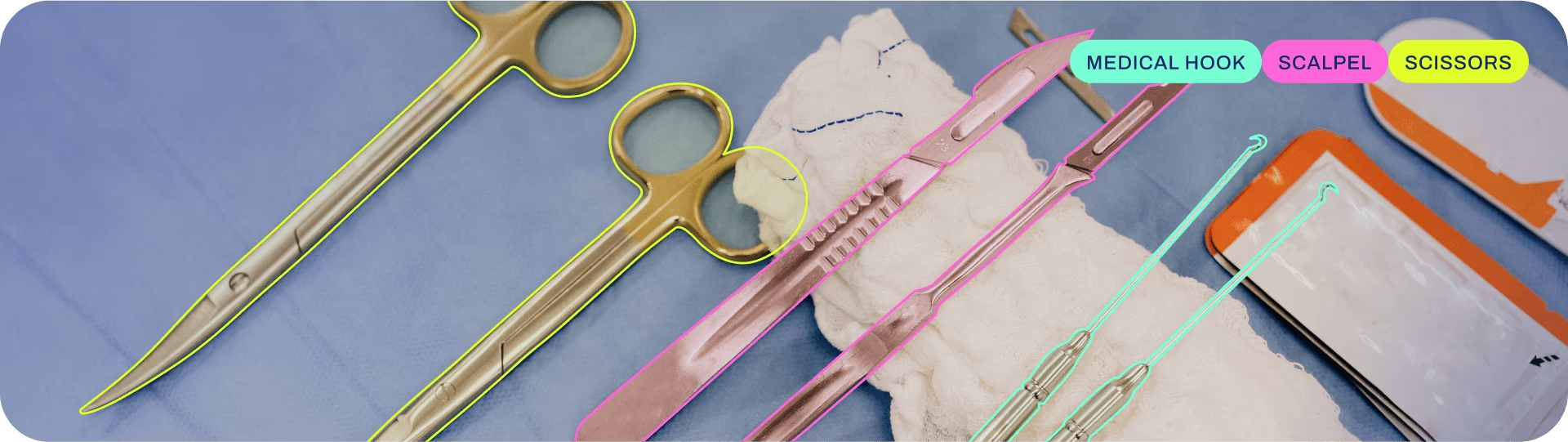

What instance segmentation predicts

For each detected object, the Segment task returns the set of pixels that belong to it — usually as a polygon or a binary mask the same shape as the image. You still get a class and a confidence; you also get shape. This is distinct from semantic segmentation, which only labels pixels by class without separating individual instances.

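    from ultralytics import YOLO

    # Assumed checkpoint name; any segmentation ("-seg") weights work here
    model = YOLO("yolo26n-seg.pt")
    results = model("street.jpg")

    for mask, cls in zip(results[0].masks.xy, results[0].boxes.cls):
        # mask is an Nx2 array of polygon vertices
        print(model.names[int(cls)], "polygon points:", len(mask))

When masks earn their cost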

Use masks when the answer to the user's question depends on shape, not just position. Concrete cases:

- Area or coverage: "What percentage of this field is covered in weeds?" Boxes can't measure area accurately when shapes are irregular.

- Occlusion handling: "Is this car partially hidden behind a tree?" Masks naturally describe the visible portion.

- Tight environment interaction: "Will this robot arm collide with the part?" Box hulls overstate the object; masks don't.

- Image manipulation: background removal, virtual try-on, AR effects, or anything else that needs to cut the object out. Modern instance segmentation models used for this trace back to Mask R-CNN.
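To make the area case concrete, here is a minimal sketch that compares the area of a polygon mask with the area of its enclosing box. It assumes the polygon is a simple (non-self-intersecting) list of `(x, y)` vertices, such as one entry of `results[0].masks.xy`; the function names and the triangular "weed patch" are hypothetical and only for illustration.

```python
def polygon_area(points):
    """Area of a simple polygon via the shoelace formula; points are (x, y) pairs."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def bounding_box_area(points):
    """Area of the axis-aligned box that encloses the same points."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return (max(xs) - min(xs)) * (max(ys) - min(ys))


# Hypothetical triangular weed patch, in pixel coordinates
patch = [(0, 0), (40, 0), (0, 40)]
mask_area = polygon_area(patch)
box_area = bounding_box_area(patch)
print(f"mask: {mask_area:.0f} px, box: {box_area:.0f} px, "
      f"box overestimates by {box_area / mask_area:.1f}x")
```

For this triangle the box reports twice the true area. The gap grows as shapes become thinner or more diagonal, which is why coverage metrics need masks.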

When masks waste budget

If your downstream code only needs to:

- count objects,

- alert when an object enters a region,

- track an object frame-to-frame,

- run a downstream classifier on a crop,

… boxes are enough. Masks won't make any of these noticeably better, and they will cost you weeks of annotator time. Reach for YOLO26 segmentation only when shape really earns its keep.
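As a rough illustration of how far boxes alone can go, the sketch below counts objects and raises a region alert using only box coordinates. The `(x1, y1, x2, y2)` box format, the rectangular zone, and the "box center inside the zone" rule are all assumptions made for the example; a real project may prefer a different anchor point or a polygonal zone.

```python
def box_center(box):
    """Center of an (x1, y1, x2, y2) box."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2.0, (y1 + y2) / 2.0)


def in_zone(box, zone):
    """True if the box center lies inside the rectangular zone (x1, y1, x2, y2)."""
    cx, cy = box_center(box)
    zx1, zy1, zx2, zy2 = zone
    return zx1 <= cx <= zx2 and zy1 <= cy <= zy2


# Hypothetical detections for one frame and a hypothetical restricted zone
boxes = [(10, 10, 50, 60), (200, 120, 260, 220), (300, 40, 340, 90)]
restricted_zone = (180, 100, 400, 300)

count = len(boxes)
intruders = [b for b in boxes if in_zone(b, restricted_zone)]
print(f"{count} objects, {len(intruders)} inside the zone")
```

Neither the count nor the alert looks at the object's outline, so a mask would add labeling cost without changing the result.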

Annotation rules tighten when shapes get involved

Boxes are forgiving — annotators eyeball the corners. Masks expose every disagreement. If two annotators draw the boundary of a "person carrying a bag" differently — does the bag count as part of the person? — your dataset has noise that no amount of training will fix. The COCO-Seg dataset is a useful reference for what consistent mask labels look like at scale.
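One possible way to put a number on annotator disagreement is intersection over union (IoU) between two annotators' masks of the same object. This is a suggested check, not something the lesson prescribes. The sketch assumes masks are same-sized nested lists of 0/1 values, and the tiny masks below are made up to mimic the "bag" case.

```python
def mask_iou(mask_a, mask_b):
    """Intersection over union of two same-sized binary masks (lists of 0/1 rows)."""
    intersection = 0
    union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            intersection += 1 if (a and b) else 0
            union += 1 if (a or b) else 0
    # Two empty masks agree perfectly by convention
    return intersection / union if union else 1.0


# Hypothetical 4x6 masks: annotator B includes part of the "bag", annotator A does not
annotator_a = [
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 0, 0, 0],
]
annotator_b = [
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 1, 0, 0],
    [0, 1, 1, 1, 0, 0],
    [0, 1, 1, 0, 0, 0],
]

iou = mask_iou(annotator_a, annotator_b)
print(f"inter-annotator IoU: {iou:.2f}")
```

What counts as an acceptable IoU is a project decision. A consistently low score on one class usually points to a missing boundary rule rather than a careless annotator.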

Before any mask annotation begins, write a one-page set of boundary rules covering: occlusion, transparency (glass, water), shadows, partial objects at the frame edge, and "where does the bag end?" cases. Hand the rules to annotators and to the reviewer.

A mask quality review checklist

When you spot-check mask labels, look for:

- Bleed: mask extends a few pixels beyond the object (most common error).

- Holes: missed regions inside the object — usually because the annotator clicked once and moved on.

- Phantom edges: mask follows a JPEG artifact instead of the object boundary.

- Ambiguous parts: is the handle of the suitcase part of the suitcase mask?

A useful rule: if you can identify the object class from the mask alone (no image), the mask is doing its job. Once you have trained on masks you trust, Predict mode returns predicted masks alongside the boxes for downstream code to consume.
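Some checklist items can be pre-screened automatically before a human looks at them. The sketch below flags the "holes" case by flood-filling the background from the image border and counting background pixels the fill never reaches. It assumes a binary mask stored as nested lists of 0/1 and uses 4-connectivity; the function name and sample mask are hypothetical. Objects that genuinely contain holes (a mug handle, a ring) will also be flagged, so treat the result as a prompt for review, not a verdict.

```python
from collections import deque


def count_hole_pixels(mask):
    """Count background pixels fully enclosed by the mask (4-connectivity)."""
    h, w = len(mask), len(mask[0])
    reachable = [[False] * w for _ in range(h)]
    queue = deque()

    # Seed the flood fill with every background pixel on the image border
    for y in range(h):
        for x in range(w):
            on_border = y in (0, h - 1) or x in (0, w - 1)
            if on_border and not mask[y][x]:
                reachable[y][x] = True
                queue.append((y, x))

    # Spread through background pixels connected to the border
    while queue:
        y, x = queue.popleft()
        for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
            if 0 <= ny < h and 0 <= nx < w and not mask[ny][nx] and not reachable[ny][nx]:
                reachable[ny][nx] = True
                queue.append((ny, nx))

    # Background that the border fill never reached is a hole
    return sum(
        1
        for y in range(h)
        for x in range(w)
        if not mask[y][x] and not reachable[y][x]
    )


# Hypothetical 5x5 mask with one missed pixel in the middle
mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 0, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
print("hole pixels:", count_hole_pixels(mask))
```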

Take any image with two or three objects in it. Imagine drawing a box around each, then a polygon around each. Estimate how much longer the polygons would take. Multiply by your dataset size. That's the cost of choosing masks.
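The multiplication in this exercise can be sketched in a few lines. Every number below is a placeholder assumption (dataset size, objects per image, seconds per box); only the roughly 5× mask multiplier comes from the key points above. Swap in timings you measure on your own images.

```python
def annotation_hours(num_images, objects_per_image, seconds_per_object):
    """Total labeling time in hours for one annotation pass."""
    return num_images * objects_per_image * seconds_per_object / 3600.0


# All numbers below are placeholder assumptions; replace them with your own timings
NUM_IMAGES = 5_000
OBJECTS_PER_IMAGE = 3
BOX_SECONDS = 6                      # assumed time to draw one box
MASK_SECONDS = BOX_SECONDS * 5       # the rough 5x multiplier quoted earlier

box_hours = annotation_hours(NUM_IMAGES, OBJECTS_PER_IMAGE, BOX_SECONDS)
mask_hours = annotation_hours(NUM_IMAGES, OBJECTS_PER_IMAGE, MASK_SECONDS)
print(f"boxes: {box_hours:.0f} h, masks: {mask_hours:.0f} h, "
      f"extra: {mask_hours - box_hours:.0f} h")
```

The estimate covers a single labeling pass only; review and rework time would come on top of it.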

You can name a downstream consumer in your project that does (or doesn't) need shape.

You've decided on boxes vs masks based on that consumer, not on which sounds fancier.

If you chose masks, you've at least sketched a one-page boundary-rules doc.

Next: pose, where the prediction isn't a box or a mask but a set of joints.