Splits that Tell the Truth

Why training, validation, and test splits exist — and the leak that ruins half of them.

If your model gets 95% on validation and 60% in production, your splits lied to you. There are predictable, well-understood ways to set up dataset splits that don't lie — and they connect directly to how you read training and test curves later. The trick is to do it before training, not after.

Set up training/validation/test splits that resist leakage and give an evaluation number you can trust.

Three splits — train, val, test.

Test set is touched once, at the end. Not for tuning.

Split by the right unit — usually scene, camera, or day, not by image.

Same image into two splits = leak = inflated metrics.

Hands-on

Why three splits

- Training set — the model learns from this.

- Validation set — you learn from this. Pick hyperparameters, decide when to stop, choose between two architectures.

- Test set — for the final number you report. Touch it once, after every other decision is made.

If you tune hyperparameters on the test set, your test number is no longer honest. The test set has effectively become a (very small, very noisy) training signal — a textbook recipe for overfitting and a worse bias-variance tradeoff.

The leak that ruins everything: same scene in two splits

The most common mistake: splitting by image rather than by scene or session. This is exactly the data cleaning failure that ruins half of academic and industrial benchmarks.

Imagine 1000 frames from a 30-second video. If you randomly assign 80% to training and 20% to validation, frames from the same second land in both splits. The model sees frame 99 in training and frame 100 in validation — they're nearly identical. Validation accuracy looks great. Production accuracy on a new scene is terrible.

The rule:

The splits must be independent on the dimension that matters at deployment.

For most CV projects, that means splitting by:

- Scene if your data is video clips,

- Camera if you have a camera fleet,

- Session / day if scenes change over time,

- Subject for medical or sport data — patient X is in one split, never two.

# WRONG: random per-image split, frames from the same video leak across

import random

random.shuffle(images)

train, val = images[:8000], images[8000:]

# RIGHT: split by scene, then collect images

scenes = list_scenes(images) # group images by scene_id

random.shuffle(scenes)

train_scenes = scenes[:int(0.8 * len(scenes))]

val_scenes = scenes[int(0.8 * len(scenes)):]

train = [img for s in train_scenes for img in s.images]

val = [img for s in val_scenes for img in s.images]If your data has any temporal or spatial correlation — frames from a video, frames from a fixed camera, photos taken on the same day — random per-image splits will inflate your metrics. Always split by the unit that matters.

Class balance across splits

If 5% of your data is class forklift and a random split puts 0% in validation, your metric will be meaningless for that class. Use stratified splits so each class shows up in similar proportions in train and val. The class imbalance guide goes deeper on rebalancing strategies.

Most libraries (sklearn.model_selection.StratifiedShuffleSplit, similar utilities in YOLO tooling) do this for you. The key is to remember to ask for it.

A test set with bite

A test set should be:

- Drawn from production-realistic conditions (the four axes from lesson 6).

- Frozen. Don't change it once you start running models against it.

- Big enough that a 1% accuracy difference is statistically meaningful — for detection, usually a few thousand objects per class. The Ultralytics Validation mode is the cleanest way to score a frozen test set without contaminating training.

- Touched once. If you've reported a test number, that experiment is over. The next test number comes from a fresh round of training.

When to use cross-validation

For small datasets (< a few thousand images), a single train/val split is unstable — your val accuracy depends heavily on which 200 images happened to land in val. Use k-fold cross-validation to average over k different splits, and read the cross-validation glossary entry if the mechanics are new to you.

Cross-validation isn't free — it costs k× the training time — but for early experimentation on small data it's the only honest way to compare two models.

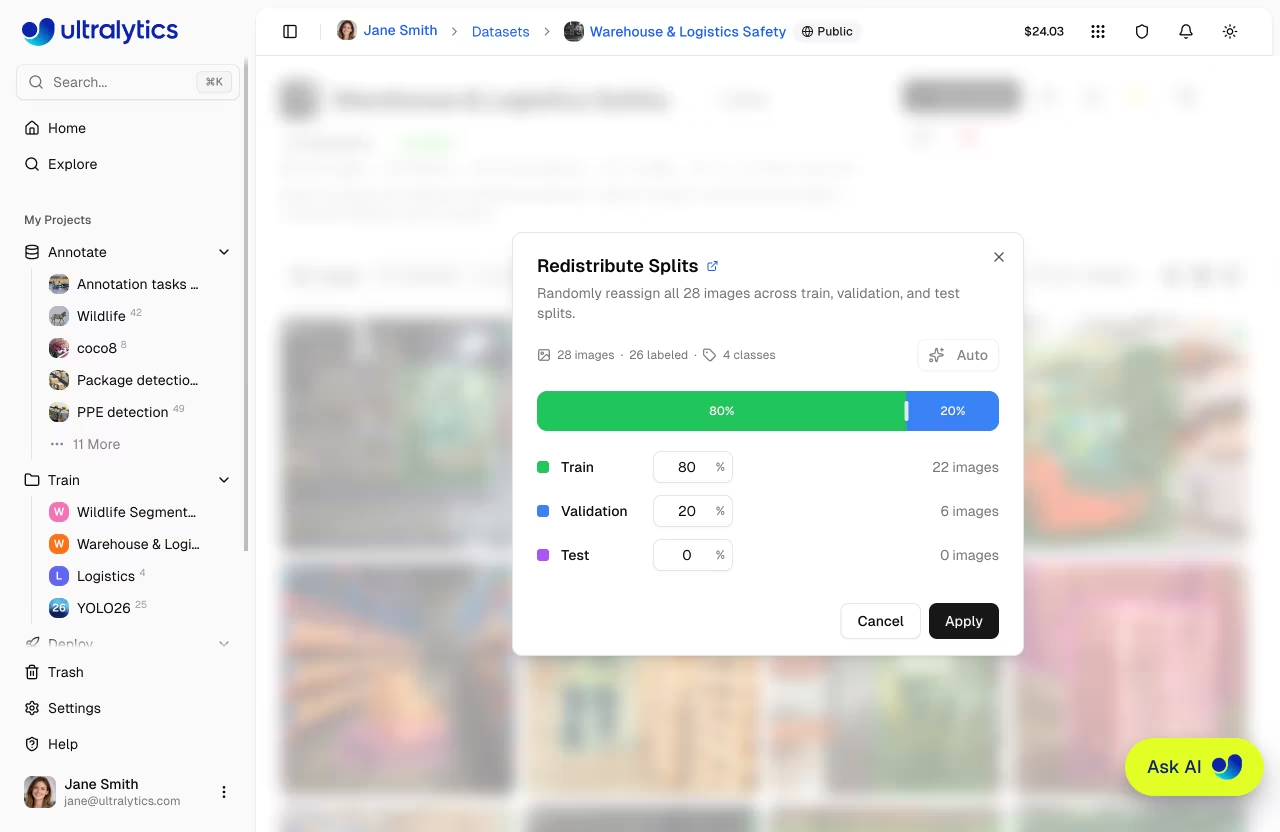

Look at your current dataset. Identify the unit that should never appear in two splits (scene, camera, day, patient). If you've already split, check whether any unit landed in both. If yes, you have a leak.

You've identified the right unit to split by for your project.

Your splits don't leak — same scene/camera/day doesn't appear in two splits.

Your test set is frozen and you've decided not to tune against it.

Honest splits give honest metrics. Now we'll learn to read those metrics without being fooled.