Understand Object Detection

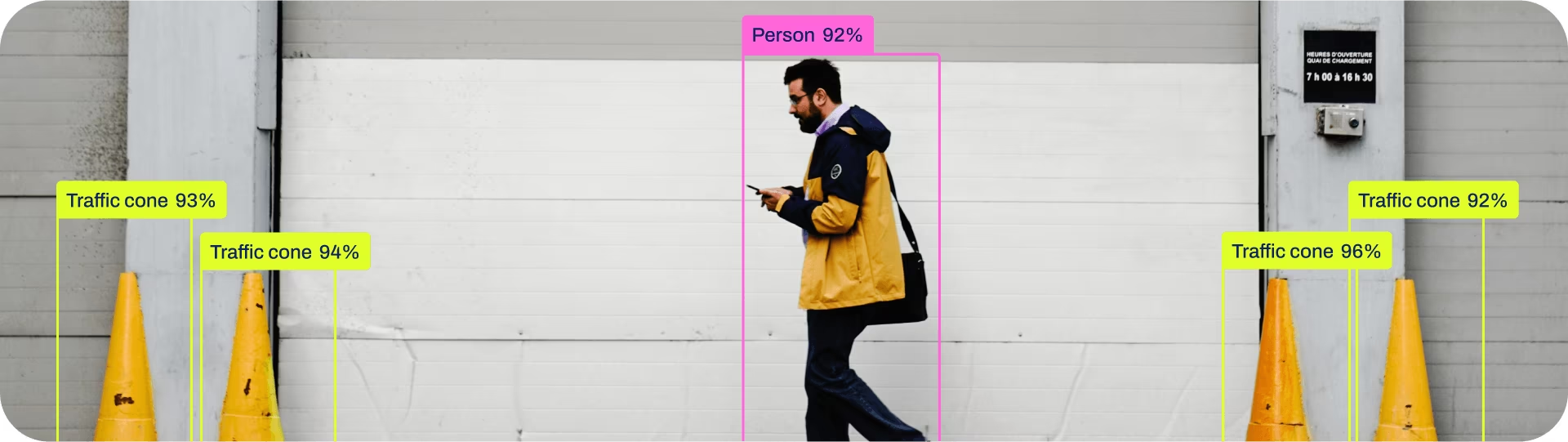

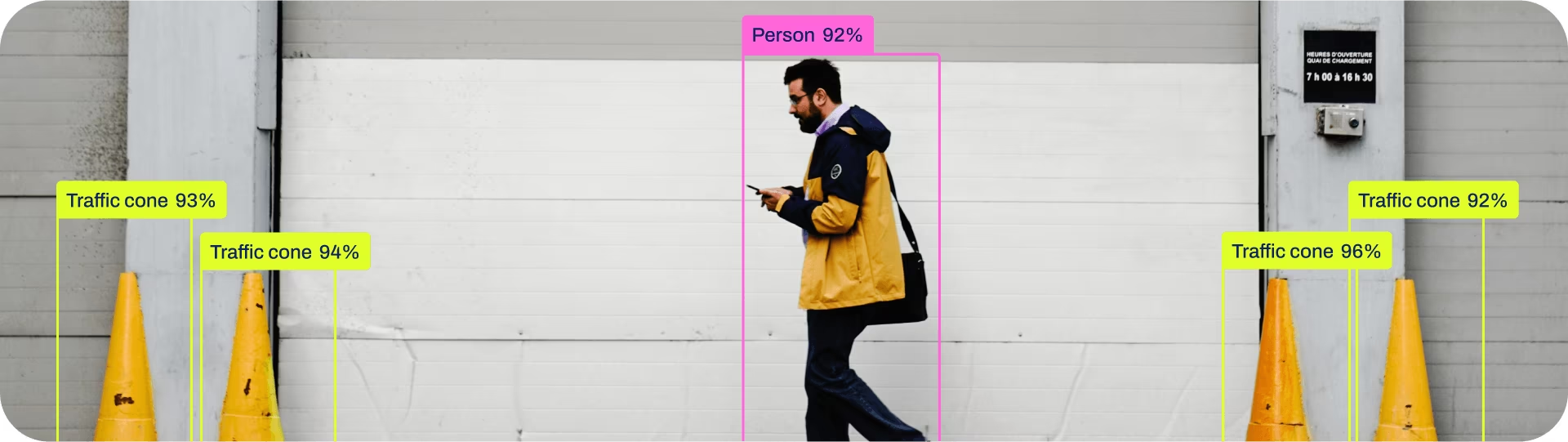

Boxes, classes, and confidence — what detection actually returns and what it does not.

Object detection is the workhorse of computer vision. Most production CV systems are detection systems with extra logic on top: counters, trackers, alerters. If you understand exactly what a detection model returns (class, box, and confidence) and what it does not, you can build almost anything.

Describe a detector's output in terms of classes, boxes, and confidence scores, and name two downstream uses each one enables.

A detection is `(class, x1, y1, x2, y2, confidence)`.

Confidence is per-box, not per-class.

Duplicate boxes for the same object are normal; removing them is what NMS is for.

A correct class can sit on a poor box. Evaluate both.

Hands-on

What comes out of the model

A detection model returns a list. Each item has:

- Class: an integer index that maps to a name (e.g. `0` → person, `2` → car).
- Box: four numbers, `x1, y1, x2, y2` (top-left and bottom-right pixel coordinates).
- Confidence: a number between 0 and 1 measuring how sure the model is.

That is the whole API. Everything else — counting, alerting, tracking, masking sensitive regions — is code you write that consumes this list.
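To make "code you write" concrete, here is a minimal sketch in plain Python of two such consumers: a per-class counter and a simple alert check. The `Detection` dataclass, `count_by_class`, `should_alert`, and the sample values are hypothetical illustrations, not Ultralytics API; they only assume each detection carries the class, box, and confidence described above.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical container for one detector output."""
    cls: int      # class index
    x1: float     # top-left x (pixels)
    y1: float     # top-left y (pixels)
    x2: float     # bottom-right x (pixels)
    y2: float     # bottom-right y (pixels)
    conf: float   # confidence in [0, 1]


def count_by_class(dets, names, conf_threshold=0.25):
    """Count detections per class name, ignoring boxes below conf_threshold."""
    return Counter(names[d.cls] for d in dets if d.conf >= conf_threshold)


def should_alert(dets, names, watch="person", conf_threshold=0.5):
    """Return True if any detection of the watched class clears the threshold."""
    return any(names[d.cls] == watch and d.conf >= conf_threshold for d in dets)


# Hypothetical detections for one frame, using the 0 → person, 2 → car mapping.
names = {0: "person", 2: "car"}
dets = [
    Detection(0, 10, 20, 60, 180, 0.91),
    Detection(0, 200, 30, 250, 190, 0.40),
    Detection(2, 300, 100, 500, 220, 0.12),
]
print(count_by_class(dets, names))  # Counter({'person': 2})
print(should_alert(dets, names))    # True
```

Note that both consumers take their own threshold: the counter and the alert can tolerate different mistakes, which is the theme of the threshold section below.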

```python
from ultralytics import YOLO

model = YOLO("yolo26n.pt")  # nano YOLO26 detection model
results = model("warehouse.jpg")

for box in results[0].boxes:
    cls = int(box.cls[0])  # class index
    conf = float(box.conf[0])  # confidence
    x1, y1, x2, y2 = box.xyxy[0].tolist()  # corners in pixels
    print(f"{model.names[cls]} @ {conf:.2f}: ({x1:.0f},{y1:.0f}) → ({x2:.0f},{y2:.0f})")
```

Confidence is not class probability

A common confusion: confidence ≠ "the model is X% sure this is a forklift." It's closer to "the model is X% sure something is here and its best guess for the class is forklift." Most modern anchor-free detectors — including YOLO26 — bundle the two into one score; for some older models they're separate.

The practical consequence: a single confidence threshold may be too coarse if your classes differ in difficulty. You can apply a different threshold per class by predicting with a low global `conf` and then filtering each class against its own cutoff; see Predict mode for the full list of inference arguments.
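The per-class filtering step could look like this minimal sketch in plain Python. It assumes detections have already been converted to hypothetical `(class_index, confidence, box)` tuples (for example from the loop above); `filter_per_class`, the class indices, and the threshold values are illustrative, not a library function.

```python
def filter_per_class(dets, thresholds, default=0.25):
    """Keep (cls, conf, box) detections whose conf clears their class's threshold.

    thresholds maps class index -> minimum confidence; classes not listed
    fall back to `default`.
    """
    return [d for d in dets if d[1] >= thresholds.get(d[0], default)]


# Hypothetical: class 0 is easy (strict cutoff), class 5 is hard (lenient cutoff).
dets = [
    (0, 0.30, (10, 20, 60, 180)),
    (0, 0.55, (200, 30, 250, 190)),
    (5, 0.15, (300, 100, 320, 130)),
    (5, 0.08, (400, 100, 420, 130)),
]
kept = filter_per_class(dets, thresholds={0: 0.5, 5: 0.1})
print(kept)
```

Running the model with a low global `conf` first matters: anything the model drops at inference time can never be recovered by a per-class rule afterwards.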

Duplicate boxes and NMS

Detectors propose many candidate boxes per object. Non-Maximum Suppression (NMS) removes overlapping duplicates of the same class, keeping the highest-confidence one. NMS has one important knob, the IoU (intersection over union) threshold, which decides how much overlap counts as a duplicate.
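A minimal sketch of greedy, class-aware NMS in plain Python, assuming boxes as `(x1, y1, x2, y2)` pixel corners and detections as hypothetical `(class_index, confidence, box)` tuples. This is the textbook greedy algorithm, not Ultralytics' internal implementation (which runs batched on tensors); the `iou` and `nms` names are illustrative.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(dets, iou_threshold=0.7):
    """Greedy, class-aware NMS over (cls, conf, box) tuples.

    Visits boxes from highest to lowest confidence and drops any box whose IoU
    with an already-kept box of the same class exceeds iou_threshold.
    """
    kept = []
    for det in sorted(dets, key=lambda d: d[1], reverse=True):
        cls, _, box = det
        if all(k[0] != cls or iou(k[2], box) <= iou_threshold for k in kept):
            kept.append(det)
    return kept


dets = [
    (0, 0.90, (0, 0, 100, 200)),    # person
    (0, 0.80, (5, 5, 105, 205)),    # near-duplicate of the first box (IoU ≈ 0.86)
    (2, 0.85, (10, 10, 110, 210)),  # car overlapping the person: different class, kept
]
print(nms(dets))
```

The comparison uses `>`, so a box whose IoU exactly equals the threshold survives; implementations can differ on that edge case.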

If your model misses one of two objects standing next to each other, the bug may not be the model; NMS may be deduplicating two real objects. Boxes that overlap by more than the IoU threshold are treated as duplicates, so raise the threshold (e.g. iou=0.5 → iou=0.7) and the second box comes back.
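A worked example with two hypothetical side-by-side boxes (numbers chosen for illustration) shows why the threshold direction matters. IoU is

$$
\mathrm{IoU}(A, B) = \frac{|A \cap B|}{|A \cup B|} = \frac{|A \cap B|}{|A| + |B| - |A \cap B|}
$$

For $A = (0, 0, 100, 200)$ and $B = (25, 0, 125, 200)$:

$$
|A| = |B| = 100 \times 200 = 20000, \quad |A \cap B| = 75 \times 200 = 15000, \quad \mathrm{IoU} = \frac{15000}{20000 + 20000 - 15000} = 0.6
$$

With iou=0.5, $0.6 > 0.5$ and the lower-confidence box is suppressed; with iou=0.7, $0.6 < 0.7$ and both boxes survive.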

Two ways to be wrong

A detection can be wrong in two distinct ways:

| Failure | What's broken | Fix |
|---|---|---|
| Right class, bad box | Localization | More data, larger imgsz, or rethink the labeling rules for tight crops |
| Wrong class | Classification | More examples of the confused class, or a class merge if the distinction isn't real |

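A useful diagnostic loop is to review low-confidence true positives and high-confidence false positives, because they tell you very different things. Low-confidence true positives are real objects the model is unsure about, often rare or hard cases; high-confidence false positives are what the model confidently gets wrong, which often points to a confusable class or a labeling problem.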
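A minimal sketch of that loop in plain Python, assuming you have ground-truth boxes for the image. Predictions are hypothetical `(class_index, confidence, box)` tuples and ground truths `(class_index, box)` tuples; the greedy matching rule (same class, IoU ≥ 0.5, highest confidence first) is a common convention chosen for illustration, not the exact matching used by any particular validator, and `diagnose` is an illustrative name.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    inter = max(0.0, min(a[2], b[2]) - max(a[0], b[0])) * max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def diagnose(preds, gts, match_iou=0.5):
    """Split predictions into true/false positives and sort the informative extremes first.

    Returns (true positives, least confident first; false positives, most confident first).
    """
    matched = set()
    tps, fps = [], []
    for cls, conf, box in sorted(preds, key=lambda p: p[1], reverse=True):
        best, best_iou = None, match_iou
        for i, (g_cls, g_box) in enumerate(gts):
            if i in matched or g_cls != cls:
                continue  # each ground truth matches at most one prediction
            overlap = iou(box, g_box)
            if overlap >= best_iou:
                best, best_iou = i, overlap
        if best is None:
            fps.append((cls, conf, box))
        else:
            matched.add(best)
            tps.append((cls, conf, box))
    tps.sort(key=lambda p: p[1])                # least confident real objects first
    fps.sort(key=lambda p: p[1], reverse=True)  # most confident mistakes first
    return tps, fps


# Hypothetical image: one person and one car in the labels.
gts = [(0, (0, 0, 100, 200)), (2, (300, 100, 500, 220))]
preds = [
    (0, 0.92, (2, 2, 102, 202)),      # good person box
    (2, 0.18, (305, 105, 495, 215)),  # real car, low confidence
    (0, 0.71, (600, 50, 650, 150)),   # confident "person" where there is none
]
tps, fps = diagnose(preds, gts)
print(tps[0], fps[0])
```

Looking at `tps[0]` and `fps[0]` across many images is the quickest way to see whether your problem is mostly the localization row or the classification row of the table above.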

Picking a confidence threshold

The default conf=0.25 is sane but rarely optimal. Pick it deliberately based on which mistake is more expensive:

- Safety / alerts: false negatives are expensive, so accept some false positives and lower the threshold. This is exactly the tradeoff in warehouse safety monitoring.

- Search / recommendation: false positives are visible to users, so raise the threshold.

- Counting / metrics: misses and extras both distort the count, so sweep a range of thresholds and pick the one that minimizes count error on your validation set.
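A minimal sketch of the counting sweep in plain Python. It assumes that, for each validation image, you already have the confidences of one class's detections (after NMS) and the true count; the data, the 0.05 grid, and mean absolute error as the metric are illustrative choices, and the function names are hypothetical.

```python
def count_error(per_image_confs, true_counts, threshold):
    """Mean absolute error between predicted and true counts at one threshold."""
    errors = [
        abs(sum(c >= threshold for c in confs) - truth)
        for confs, truth in zip(per_image_confs, true_counts)
    ]
    return sum(errors) / len(errors)


def best_threshold(per_image_confs, true_counts, candidates):
    """Return the candidate with the lowest count error (the first one wins ties)."""
    return min(candidates, key=lambda t: count_error(per_image_confs, true_counts, t))


# Hypothetical validation set: per-image confidences for one class, plus true counts.
per_image_confs = [
    [0.91, 0.80, 0.33, 0.12],
    [0.88, 0.42, 0.28],
    [0.95, 0.20, 0.15],
]
true_counts = [3, 2, 1]
candidates = [round(0.05 * i, 2) for i in range(1, 20)]  # 0.05, 0.10, ..., 0.95
t = best_threshold(per_image_confs, true_counts, candidates)
print(t)  # 0.3
```

On a real validation set the error curve is usually flatter and noisier than this toy one, so it is worth plotting `count_error` over the whole grid rather than trusting a single minimum.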

Open any image with model.predict(image, conf=0.1) and look at the boxes. Sort them by confidence. Find a true positive with a low score (around 0.15) and a false positive with a high one (around 0.6); on most real images, both exist. That's your evidence that confidence is informative but not a free lunch.

You can list the three pieces of a detection output.

You can explain why two correct detections might be deduplicated by NMS.

You can reason about whether your project should accept more false positives or more false negatives.

Solution

```python
from ultralytics import YOLO

model = YOLO("yolo26n.pt")
results = model("https://ultralytics.com/images/bus.jpg", conf=0.25, iou=0.7)

for box in results[0].boxes:
    print(model.names[int(box.cls)], float(box.conf), box.xyxy.tolist())
```

Detection is fast and cheap. Next we'll look at when you really need the next step up: masks.