The Five Vision Tasks

Match an output sentence to classification, detection, segmentation, pose, or oriented bounding boxes.

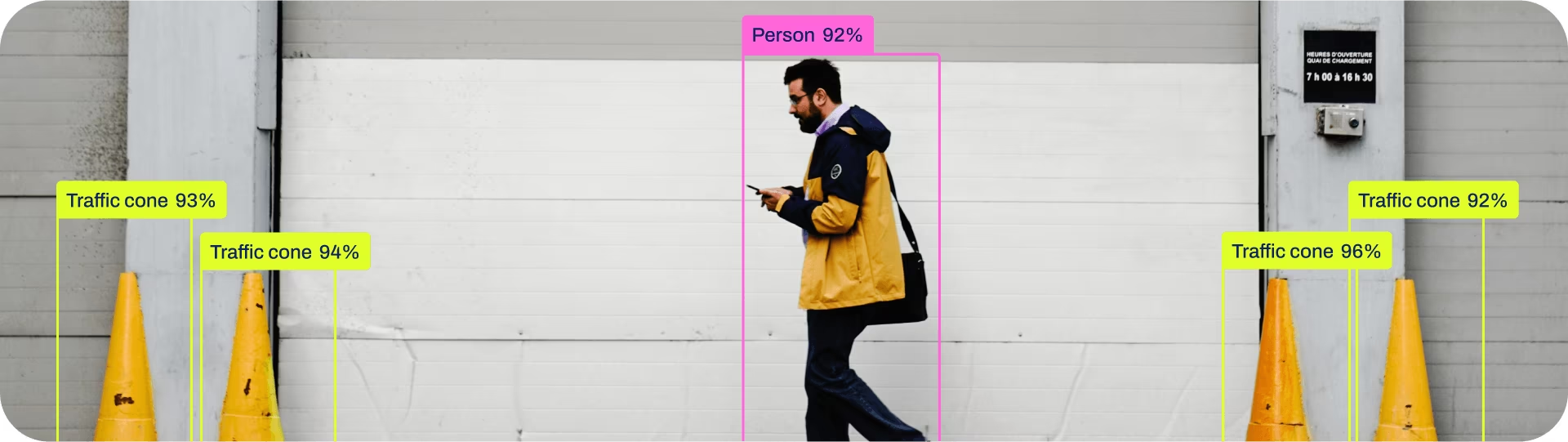

There are roughly five vision tasks you'll meet in production: classification, detection, segmentation, pose estimation, and oriented bounding boxes. They are not interchangeable. The wrong choice doubles your annotation budget or turns a 95% model into a 60% one. Pick deliberately.

Match a project's output sentence to one of the five tasks and explain why in one line.

Image-level decision? Classification.

Where and what (rough shape OK)? Detection.

Exact shape, area, or boundary? Segmentation.

Joints, keypoints, body language? Pose.

Rectangular things at any angle (ships, vehicles from above, packages on a belt)? OBB.

Hands-on

The five tasks at a glance

| Task | What you predict | When it's the right answer |

|---|---|---|

| Classification | One label per image | "Is this image of an X?" — no localization needed |

| Detection | Class + axis-aligned box per object | "Where are the X's?" — counting, presence, rough location |

| Segmentation | Class + pixel mask per object | "What's the exact shape?" — area, occlusion, boundaries |

| Pose | Set of keypoints per person/object | "What is the body doing?" — fall detection, ergonomics, sport |

| OBB | Class + rotated rectangle | "Where, at what angle?" — aerial, top-down, conveyor |

Detection vs segmentation: the most common mistake

Most teams reach for segmentation when detection would do, then run out of annotation budget. Mask annotation costs 3–10× more than box annotation per object. The right question to ask:

Does my downstream code need to know the shape of the object, or just where it is?

If you're counting cars in a parking lot, bounding boxes are fine. If you're computing the painted area of a wall, you need a mask.

"Masks just in case" is a budget trap. Mask labels are slower to draw, slower to review, and need stricter rules for occlusion and edges. Start with boxes, add masks only when a real downstream consumer needs the shape.

Pose vs detection

Pose returns keypoints — coordinates of joints (shoulders, hips, knees) or fixed points on an object. You use it when posture is the signal: a person fallen on the ground is in a very different pose than one walking past — a kind of action recognition.

You don't need pose to answer "is there a person here?" — that's just detection. Use pose when the body language is the prediction.

OBB vs detection

A regular detection box is axis-aligned: edges parallel to the image sides. An OBB rotates with the object. The classic OBB use cases are top-down or near-top-down imagery — aerial photos, satellite images, conveyor belts — where objects don't naturally line up with the frame.

Detection box (axis-aligned) OBB (rotated)

┌─────────────────┐ ┌───────┐

│ ▲ │ \ \

│ ╱ ╲ │ → rotated object → \ \ ← box hugs the actual shape

│ ╱ ╲ │ \___\

│ ╱_____╲ │ (fits)

└─────────────────┘

(lots of empty space) (tight)If you're seeing big detection boxes that are mostly empty, that's a sign OBB is a better fit.

Choosing in practice

Walk through the questions in order. Stop at the first "yes."

- Is the output the same for every pixel in the image? → Classification.

- Do you need to count, locate, or filter objects, but not draw them? → Detection. Add object tracking on top if you need cross-frame identity.

- Do you need the exact shape, boundary, or area? → Segmentation.

- Is the posture of the subject the signal? → Pose.

- Are objects rotated relative to the frame? → OBB.

Take the output sentence from lesson 1. Walk down the five-question ladder and stop at the first "yes." That's your task. Write why you stopped there in one line — that one line is what you'll defend in a design review.

You've named one task — classification, detection, segmentation, pose, or OBB — for your project.

You can explain why the next task on the list is overkill.

You haven't accidentally picked segmentation just because it sounds more impressive than detection.

We'll go deep on object detection — the workhorse task and the one most people start with.