Annotation Quality

Half a million boxes are worse than fifty thousand consistent ones.

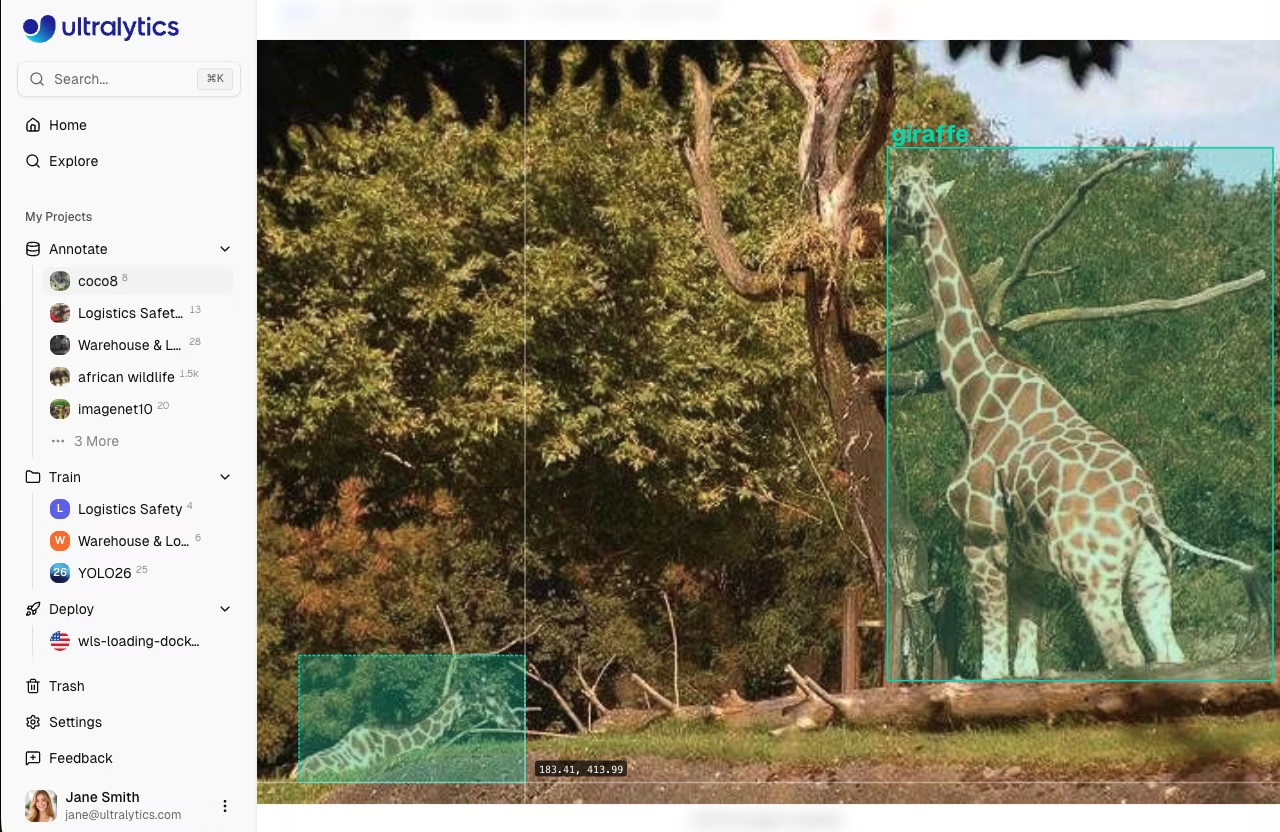

If a model trains on noisy labels, it learns the noise. Annotation quality is the single highest-leverage thing you can fix once you have the right task — and it's almost always cheaper to relabel than to rearchitect. Tools like the Ultralytics Platform and Roboflow exist precisely to make this loop fast.

Write an annotation guideline that survives contact with twenty annotators, and a review loop that catches drift before it bakes into the dataset.

One-page guideline with images, not just text.

Calibration round before scale: same 50 images labeled by everyone.

Reviewer accepts/rejects, not corrects.

Track inter-annotator agreement; investigate when it dips.

Hands-on

Why labels go bad

Annotators don't label badly because they're careless. They label badly because the rules are ambiguous and they make consistent guesses — different ones from each other. The model sees this as noise that no data cleaning pass can fully remove.

Common ambiguities that show up in real annotation work:

- Where does the box stop? Does it include shadow? Bag? Held child? See the bounding box glossary for the geometric basics.

- Truncation. Is half a person at the frame edge a "person"? At what threshold?

- Occlusion. A car behind a fence is still a car. A car visible only as a wheel is… what?

- Class overlap. Is a pickup truck a "car" or a "truck"? Is a kid scooter a "scooter" or "other"? Plan for class imbalance when you decide whether to merge or split.

Each of these is fine — as long as everyone makes the same choice. The job of the annotation guideline is to make the choice for everyone.

A useful guideline

A guideline that works in practice:

- One page, no more. If it's longer than one page, annotators won't reread it after the first day.

- Examples for every rule. "Include the head" with a labeled image beats "include the head" alone.

- Edge cases up front. Truncation, occlusion, partial objects — handled in the first paragraph, not at the end.

- A small "do not label" section. Reflections, paintings of cars, cars on TVs.

## Class: forklift

Include:

- Forklifts visible by at least 30% of their body.

- Forklifts in motion or stationary.

- Forklifts of any size or color.

Do not label:

- Pallet jacks (manual or electric, no overhead structure) → class `pallet_jack`.

- Scissor lifts → class `other`.

- Reflections of forklifts in glass or polished floors.

- Forklifts in printed safety posters.

Box rules:

- Include the entire visible silhouette including the mast.

- Do not include the load (pallet, boxes) — those have their own classes.

- For partly occluded forklifts: draw the box around the visible part only.The calibration round

Before you scale annotators from 1 to 20, run a calibration round: hand the same 50 images to every annotator and compare their labels. This is the practical version of tracking inter-rater reliability — including polygon agreement for segmentation work — before a labeling process gets expensive.

- If two annotators disagree on > 10% of objects, the guideline isn't crisp enough — fix it before scaling.

- Save the calibration set. Use it later as a fast sanity check when onboarding a new annotator.

A reviewer who silently fixes labels removes the feedback signal. Annotators don't learn what they got wrong. Reject and explain — then it's the annotator who fixes it. Slower in the moment, much faster over a thousand images.

Track agreement, not just throughput

Per-annotator metrics most teams should be tracking:

| Metric | What it tells you |

|---|---|

| Throughput (boxes/hour) | Speed — capacity planning |

| Reject rate (% of work that the reviewer sends back) | Quality drift |

| Inter-annotator agreement (Cohen's κ on overlap set) | Are annotators converging? |

When inter-annotator agreement drops, something changed — a new annotator, an ambiguous batch, fatigue. Investigate before that batch contaminates the dataset.

Active relabeling

When you find a model failure mode, add those exact images to the relabel queue. The dataset improves on the axis that matters. This is the loop that active learning automates — and it's where most production CV improvement actually comes from. Not bigger models, but tighter labels in the places the model fails today, surfaced through tools like the Dataset Explorer or your favorite labeling integration.

Take 10 of your project's images and write the annotation guideline for them — one page, with examples. Hand it to a teammate without explaining. Have them label one image. The disagreements with the guideline in front of them tell you exactly where the guideline is unclear.

You have a one-page guideline with examples.

You've run a calibration round (or planned one) before scaling annotators.

You're tracking reject rate, not just throughput.

Now that the labels are good, we'll split them — and avoid the most common foot-gun in CV evaluation.