Frame the Problem

Turn a fuzzy product idea into a single sentence the model can answer.

Every computer vision project that ships starts the same way: someone says "the model should figure it out" and three weeks later nobody can explain what "it" was. Computer vision rewards specificity. The model only gets to answer the question you actually wrote down.

In this lesson, you'll write that question down. We'll turn a vague idea — like "detect unsafe behavior on the warehouse floor" — into a one-sentence task spec that is unambiguous to a model, an annotator, and a stakeholder. The output of that spec is what determines whether you reach for object detection, classification, or one of the other supported tasks.

Write a one-sentence task spec that names the input, output shape, and decision the model supports.

Write what the model sees, in one sentence.

Write what the model returns, in one sentence.

Write the human decision that uses the output.

If any of the three has more than one sentence, the scope is too big — split it.

Hands-on

The three sentences

A useful computer vision task spec answers three questions in plain English. Don't skip any of them.

- Input — What is the model looking at? A single image, a frame from a 30 fps camera, a video clip?

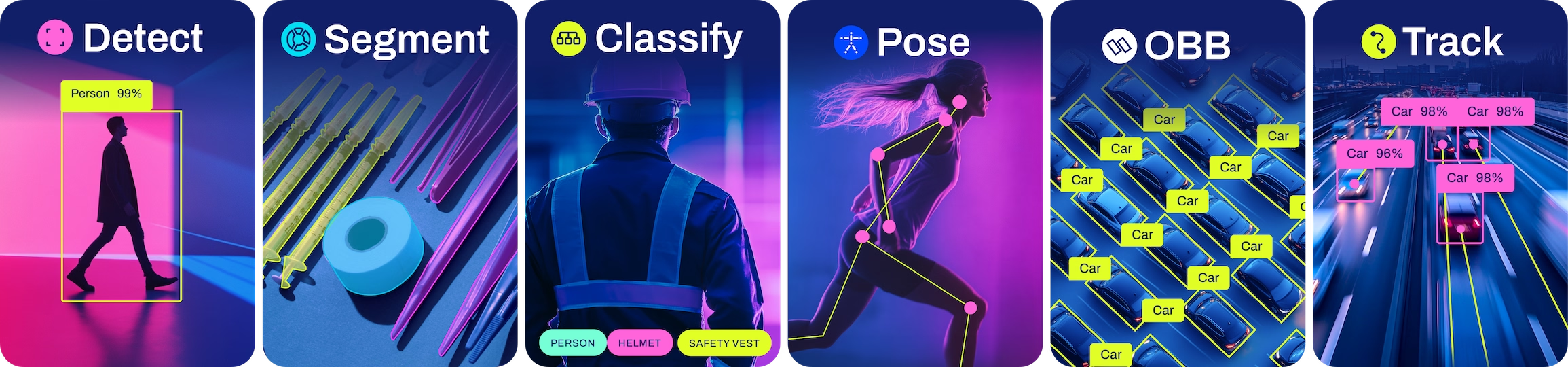

- Output — What does the model return? A class label per image, bounding boxes around objects, or pixel masks from instance segmentation?

- Decision — What changes in the world because of the output? Who acts, when, and based on what threshold?

Once you have the three sentences, the rest of the project — labels, data, metrics, model deployment — falls out almost automatically.

A worked example

Here's a vague request and a tight rewrite.

"We want to know when forklifts are doing something dangerous on the warehouse floor."

That sentence is a project. It is not a task. It conflates "knowing about forklifts" with "deciding what's dangerous." A model can do one of those well; the other is policy.

Tighter:

Input: A 1920×1080 frame from one of 12 ceiling-mounted cameras, sampled at 5 fps. Output: Bounding boxes for class

forkliftand classpersonwith a confidence score. Decision: A safety supervisor is paged when aforkliftbox and apersonbox overlap by more than 30% for at least 2 seconds.

The model only does steps one and two. The "is this dangerous" rule lives in code that consumes the output. Splitting it that way means you can ship a forklift detector, evaluate it independently, and change the danger rule without retraining. The same separation principle drives Ultralytics solutions like smart cities and AI in healthcare: a small set of vision primitives, plus policy on top.

Anything subjective — "unsafe", "high-quality", "VIP customer" — should live in code that consumes the model output, not in the model itself. Models are good at answering questions about pixels, not about company policy.

When the task is wrong

Three smells that you've written the wrong task:

- The output is a sentence. "The model returns a description of what's happening." That's a captioning model — much harder than detection. Are you sure?

- The output depends on the future. "The model predicts whether the forklift will hit someone." That's forecasting on top of detection. Build the detector first, then layer object tracking on it.

- The output is a yes/no for a complex scene. "Is this room safe?" One label can hide a hundred decisions. Localize first, judge later — the same logic improves manufacturing lines.

If your task has any of these smells, walk back to the simpler model that produces evidence, and let downstream code make the judgment.

Pick a project you're considering — yours, or the warehouse one above. Write the three sentences (input, output, decision) on one line each. If you can't, you don't have a task yet, you have an aspiration.

You can name the input format the model sees (image, frame, clip) and its size.

You can name the output type (class label, boxes, masks, keypoints) without reaching for the word "detect".

You've separated the model's job from the policy decision that uses its output.

Next we'll match your output sentence to one of five concrete vision tasks — and the labels each one demands.