Deploy a Model

From best.pt to a managed inference endpoint with one click.

Platform deployment is the same idea as Vercel for code: pick a region, click deploy, get a URL. Authentication, scaling, export-format optimization, and model-deployment observability come bundled. Your job is to point traffic at it and wire your client correctly.

Deploy a trained model as a managed endpoint and call it from your application.

Pick the run, pick a region, click Deploy.

Endpoint URL + API key.

Test with curl or the SDK.

Wire your application client; switch traffic when ready.

Hands-on

What you get

A Platform deployment is:

- An HTTPS endpoint URL (https://api.platform.ultralytics.com/v1/predict/<deployment-id>).

- An API key for auth.

- Auto-scaled compute behind it (the deployment scales to traffic).

- Built-in latency and accuracy dashboards.

- Versioned: each deployment is tied to a specific run + checkpoint.

Deploy

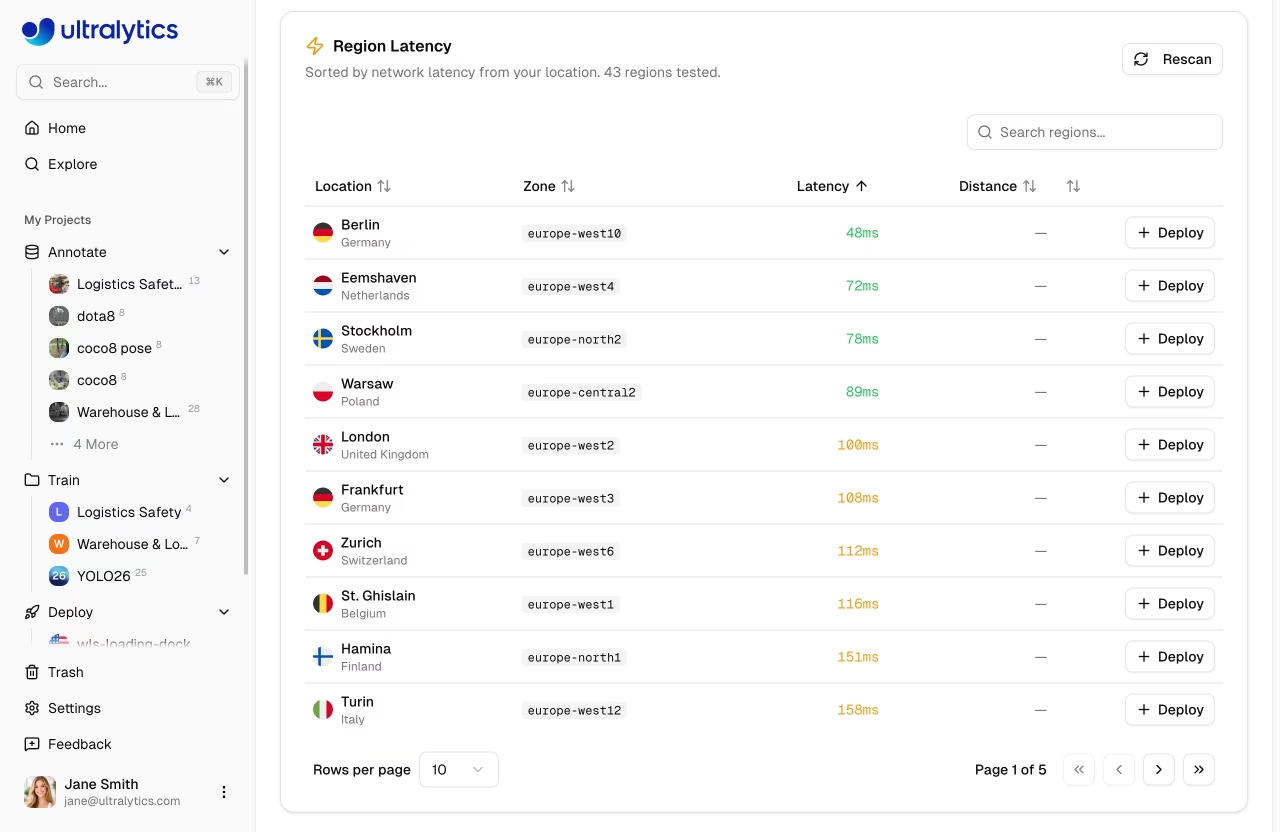

In the UI: Deploy → New Deployment. Pick:

| Setting | Notes |
|---|---|
| Source run | The training run with the model you want to deploy |
| Region | Place compute close to where calls come from |
| Hardware | CPU for low-volume / cheap; GPU for low-latency |
| Min/max replicas | Min ≥ 1 to avoid cold starts; max bounded by your budget |
| Auto-export format | Platform exports to the right format for the hardware automatically |

Click Deploy. After ~30 seconds you have an endpoint.

Calling the endpoint

    curl -X POST "https://api.platform.ultralytics.com/v1/predict/<id>" \
      -H "Authorization: Bearer $ULTRALYTICS_API_KEY" \
      -F "image=@test.jpg"

That returns JSON with detections:

    {
      "deployment": "forklift_v3",
      "model_version": "v3_pallets_added",
      "detections": [
        {
          "class": "forklift",
          "class_id": 0,
          "confidence": 0.92,
          "box": [120, 234, 480, 700]
        }
      ],
      "inference_ms": 18,
      "request_id": "req_abc123"
    }

The same call from the Python SDK:

    import os

    from ultralytics_platform import Client

    # Read the API key from the environment rather than hard-coding it.
    client = Client(api_key=os.environ["ULTRALYTICS_API_KEY"])

    # Send one image to the deployment.
    result = client.predict("forklift_v3", image="test.jpg")

    # Print each detection's class name and confidence.
    for det in result.detections:
        print(f"{det.class_name} {det.confidence:.2f}")

Latency vs cost

Two knobs you'll touch most:

- Min replicas. Min = 0 is cheapest but incurs a 5–20 s cold start on the first request. Min = 1 keeps a hot replica.

- Hardware tier. GPU vs CPU; smaller GPUs vs bigger. The throughput-vs-latency guide is a good companion when you need to choose between batched and per-request modes.

For real-time clients (browsers, IoT), including edge AI deployments calling back into Platform, min ≥ 1 is mandatory. For batch jobs that tolerate cold starts, min = 0 is the right choice and saves a lot of money. The model deployment options and practices guides go deeper on the cost/latency tradeoffs.

The endpoint accepts any request that carries a valid Bearer token, so don't ship the token to the browser. Keep it on a server-side proxy that adds the Authorization header before forwarding requests to the endpoint.
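A minimal sketch of the proxy idea, using only the Python standard library. The function name `build_upstream_request` is hypothetical, and the endpoint URL is passed in by the caller; the point is only that the key is read on the server and attached there, so the browser never sees it.

```python
import urllib.request


def build_upstream_request(body: bytes, content_type: str,
                           api_key: str, endpoint: str) -> urllib.request.Request:
    """Build the server-to-Platform request with the Bearer token attached."""
    return urllib.request.Request(
        endpoint,
        data=body,            # raw request body forwarded from the client
        method="POST",
        headers={
            "Content-Type": content_type,          # keep the client's multipart boundary
            "Authorization": f"Bearer {api_key}",  # added server-side only
        },
    )


# Inside a request handler (hypothetical wiring):
#   req = build_upstream_request(raw_body, incoming_content_type,
#                                os.environ["ULTRALYTICS_API_KEY"], endpoint_url)
#   with urllib.request.urlopen(req, timeout=10) as resp:
#       payload = resp.read()
```

In a real proxy you would also authenticate your own users and limit upload size before forwarding anything.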

Versioned deploys and rollbacks

Each deployment is tied to a specific run. To deploy a new model:

- Train v4. Validate. Decide to ship.

- Click "Update deployment" → pick the v4 run.

- Platform rolls v4 in alongside v3, then switches traffic.

- If v4 misbehaves, click "Rollback" — instant return to v3.

This is the same gradual rollout pattern Vercel uses for code. Use it; never edit a live deployment by replacing files in place. If you'd rather self-host, Triton Inference Server is the canonical option for a YOLO-based gateway running in your own cloud.
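After an update or a rollback, one way to confirm which version is actually serving traffic is to read the `model_version` field from a prediction response. A minimal sketch, assuming the response keeps the JSON shape shown under Calling the endpoint; `served_version` is a hypothetical helper, not part of any SDK.

```python
import json


def served_version(response_body: str) -> str:
    """Return the model_version reported in a prediction response body."""
    return json.loads(response_body)["model_version"]


# Sample body trimmed from the response example on this page.
sample = (
    '{"deployment": "forklift_v3", '
    '"model_version": "v3_pallets_added", "detections": []}'
)

expected = "v3_pallets_added"
actual = served_version(sample)
if actual != expected:
    raise RuntimeError(f"endpoint is serving {actual}, expected {expected}")
```

Running a check like this right after clicking "Update deployment" or "Rollback" tells you when the traffic switch has actually taken effect.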

Custom domain

Production usually wants detect.your-company.com instead of the platform-hosted URL. Add a CNAME to the deployment URL and Platform handles TLS.

Billing and quotas

Platform charges per inference (or per replica-hour for always-on deployments). Set quotas:

- Hard quota → endpoint returns 429 above the quota.

- Soft quota → endpoint warns; you can decide policy.

Quotas prevent a runaway client from blowing your budget.
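On the client side, a 429 from a hard quota should be handled deliberately rather than retried in a tight loop. The sketch below backs off exponentially and then gives up. It assumes the quota may free up within the retry window, which this page does not confirm; `call_with_backoff` and `QuotaExceeded` are hypothetical names, and `send` stands in for whatever function performs the HTTP call and returns `(status, body)`.

```python
import time
from typing import Callable, Tuple


class QuotaExceeded(Exception):
    """Raised when the endpoint keeps answering 429."""


def call_with_backoff(send: Callable[[], Tuple[int, bytes]],
                      max_attempts: int = 3,
                      base_delay_s: float = 1.0,
                      sleep: Callable[[float], None] = time.sleep) -> Tuple[int, bytes]:
    """Call send(); on 429 wait 1x, 2x, 4x... base_delay_s, then give up."""
    for attempt in range(max_attempts):
        status, body = send()
        if status != 429:
            # Any other status is handed back to the caller unchanged.
            return status, body
        if attempt < max_attempts - 1:
            sleep(base_delay_s * 2 ** attempt)
    raise QuotaExceeded(f"still rate-limited after {max_attempts} attempts")
```

Capping the number of attempts matters here: an unbounded retry loop is exactly the runaway client the quota exists to stop.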

Deploy your trained model as a Platform endpoint. Hit it with curl and the SDK. Note the cold-start latency on the first request and the warm latency on the second. Write down the difference — it's what your users will experience after a quiet period.
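For the measurement itself, a small timing helper is enough. This is a sketch, not a benchmark harness: `time_calls` is a hypothetical name, and `call` is any zero-argument function that sends one request, for example a lambda wrapping the SDK call shown earlier.

```python
import time
from typing import Callable, List


def time_calls(call: Callable[[], object], n: int = 2) -> List[float]:
    """Return wall-clock latency in milliseconds for n sequential calls."""
    latencies_ms = []
    for _ in range(n):
        start = time.perf_counter()
        call()
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    return latencies_ms


# Usage against a real deployment (requires the SDK client from above):
#   cold_ms, warm_ms = time_calls(
#       lambda: client.predict("forklift_v3", image="test.jpg"))
#   print(f"cold {cold_ms:.0f} ms, warm {warm_ms:.0f} ms")
```

These numbers include network round-trip time, so they will be higher than the `inference_ms` field in the response.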

Your model is deployed at a Platform endpoint.

You've called it from curl and gotten a JSON response.

You've decided on min replicas based on your latency tolerance.

We're live. Now we have to know if we're still right — that's monitoring.