Track Experiments

Compare runs, name them well, and keep the lessons.

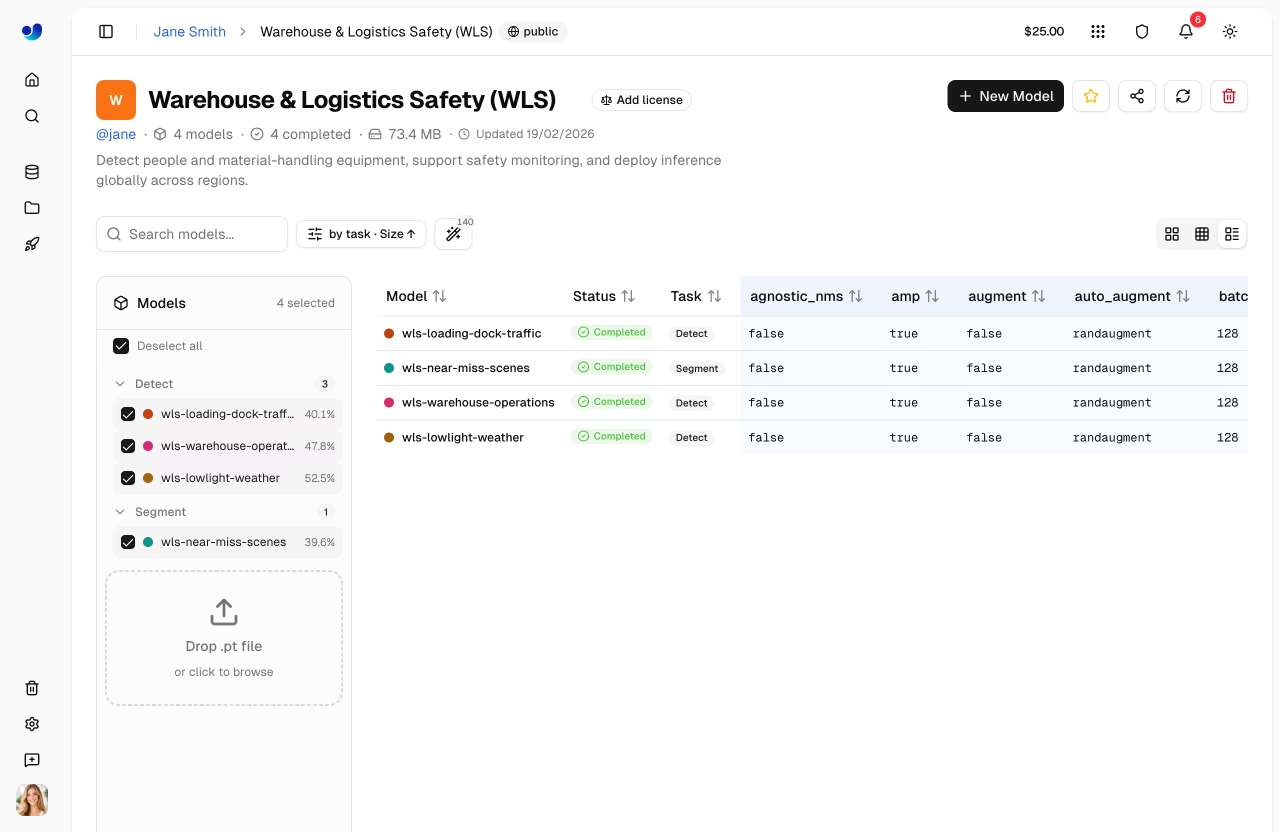

After a couple of weeks on a project, you'll have ten or twenty training runs. Some of them produced your best results but never got documented; others failed in instructive ways nobody captured. Experiment tracking, whether on Platform models, Comet, Weights & Biases, or MLflow, is the discipline of making the lessons stick, and of finding the best run when you actually have to ship.

Compare multiple Platform training runs, decide which to ship, and archive the lessons in a way your future self will find.

Name runs descriptively — what changed.

Pin the dataset version to every run.

Compare runs on the same metric — pick mAP@0.5:0.95 by default.

Document why each run was launched and what it taught you.

Hands-on

Comparing runs

Platform's experiment view lets you select multiple runs and overlay their metric curves:

[Chart: mAP@0.5:0.95 (y-axis, ticks from 0.3 to 0.6) against epoch (x-axis), with three runs overlaid: v1 (baseline), v2_imgsz_1024, and v3_pallets_added (best).]

You're looking for:

- Best final value — the simple "which is best" question.

- Convergence speed — a model that hits 0.55 at epoch 30 is more efficient than one that hits 0.55 at epoch 100.

- Stability — a curve with wild swings is harder to trust than a smoothly rising one.
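The three criteria above can also be read off the exported per-epoch numbers rather than judged by eye. The following is a minimal sketch, assuming you have each run's metric as a plain list with one value per epoch; `summarize_curve`, the `jitter` measure, and the sample curves are illustrative and not part of Platform.

```python
from statistics import pstdev


def summarize_curve(values, target, tail=5):
    """Summarize one run's per-epoch metric curve.

    values: metric value per epoch (index 0 is epoch 1).
    target: threshold used to measure convergence speed.
    tail:   number of final epochs used for the stability estimate.
    """
    # First epoch (1-indexed) at which the metric reaches the target, or None.
    epochs_to_target = next(
        (i + 1 for i, v in enumerate(values) if v >= target), None
    )
    # Spread of epoch-to-epoch changes over the last `tail` epochs.
    # A large spread means the curve is still swinging.
    last = values[-tail:]
    deltas = [b - a for a, b in zip(last, last[1:])]
    jitter = pstdev(deltas) if len(deltas) > 1 else 0.0
    return {
        "final": values[-1],
        "best": max(values),
        "epochs_to_target": epochs_to_target,
        "jitter": jitter,
    }


# Made-up curves for illustration only.
runs = {
    "smooth": [0.30, 0.40, 0.48, 0.53, 0.56, 0.58],
    "jumpy": [0.30, 0.50, 0.35, 0.57, 0.40, 0.58],
}
summaries = {name: summarize_curve(v, target=0.55) for name, v in runs.items()}
for name, s in summaries.items():
    print(name, s)
```

Both sample runs end at the same final value, so only the `jitter` figure separates them. That is the case where looking at the final number alone would mislead you.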

Naming runs

Bad: run_5, final, final_actually_final.

Good: v3_pallets_added_imgsz_640, v4_lr_0.0005_no_mosaic.

The pattern: <version>_<what_changed>. Future-you, looking through 30 runs three months later, will thank present-you.
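If you launch runs from scripts, the naming pattern can be enforced rather than remembered. This is a small sketch; `make_run_name`, `is_descriptive`, and the exact character rules are assumptions chosen for illustration, not a Platform requirement.

```python
import re

# v<number>_ followed by at least one lowercase token; tokens are joined by "_" or ".".
RUN_NAME = re.compile(r"^v(\d+)_[a-z0-9]+(?:[._][a-z0-9]+)*$")


def make_run_name(version, *changes):
    """Build '<version>_<what_changed>' from free-text change descriptions."""
    # Lowercase each description and collapse anything that is not a
    # letter, digit, or dot into a single underscore.
    parts = [re.sub(r"[^a-z0-9.]+", "_", c.lower()).strip("_") for c in changes]
    parts = [p for p in parts if p]
    if not parts:
        raise ValueError("describe at least one change")
    return f"v{version}_" + "_".join(parts)


def is_descriptive(name):
    """True if the name follows the v<number>_<what_changed> pattern."""
    return RUN_NAME.match(name) is not None


print(make_run_name(3, "pallets added", "imgsz 640"))
print(is_descriptive("final_actually_final"))
```

A check like `is_descriptive` is easiest to apply at launch time, before the run exists, since renaming thirty runs afterwards rarely happens.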

Pin every run to a dataset version

It's easy to launch a run on "the dataset I had on Tuesday" and have no idea later what that meant. Platform attaches the dataset version to every run — but only if you reference a tagged version, not the working state.

Always train against a tagged dataset version. Never against the live working dataset.
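One way to make this rule hard to break is a guard in your own launch script that rejects unpinned dataset references before any training starts. The sketch below is purely hypothetical: the `name@version` string format, the `MUTABLE_REFS` set, and `parse_pinned_ref` are invented for illustration and do not describe how Platform identifies dataset versions.

```python
from dataclasses import dataclass

# Hypothetical labels for a mutable working state; adjust to your own setup.
MUTABLE_REFS = {"", "latest", "working", "head"}


@dataclass(frozen=True)
class DatasetRef:
    name: str
    version: str


def parse_pinned_ref(ref):
    """Parse 'name@version' and refuse anything that is not a fixed tag."""
    name, _, version = ref.partition("@")
    if not name or version.lower() in MUTABLE_REFS:
        raise ValueError(f"{ref!r} is not pinned to a tagged dataset version")
    return DatasetRef(name, version)


print(parse_pinned_ref("warehouse@v3"))
```

Storing the parsed `DatasetRef` next to the run name gives you a record of which data the run saw, even if you later export results outside the tracker.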

Pick a single comparison metric

For most CV projects, mAP@0.5:0.95 is the right comparison metric — it's strict enough to catch sloppy boxes and forgiving enough to be stable. The YOLO performance metrics guide walks through how it's computed; the confusion matrix view in Platform is the other half of any honest run review. Other useful metrics in specific contexts:

| Metric | When to use |
|---|---|
| mAP@0.5 | Quick check, large-object datasets |
| Per-class AP for class X | When one class drives business value |
| Recall @ precision = 0.95 | Safety / alarming systems |
| Inference latency on target | Production fitness |

Pick one before comparing — comparing on different metrics is where motivated reasoning sneaks in.
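Of the metrics in the table, recall at a fixed precision is the least obvious to compute by hand. Here is a minimal sketch, assuming each detection has already been matched against ground truth and marked as a true or false positive; the function name and input format are illustrative, and tied confidence scores are processed in arbitrary order.

```python
def recall_at_precision(detections, num_ground_truth, min_precision=0.95):
    """Highest recall reachable while precision stays at or above min_precision.

    detections: list of (confidence, is_true_positive) pairs.
    num_ground_truth: total number of ground-truth objects for the class.
    """
    if num_ground_truth <= 0:
        raise ValueError("num_ground_truth must be positive")
    best_recall = 0.0
    tp = fp = 0
    # Lower the confidence threshold one detection at a time, highest score first.
    for _, is_tp in sorted(detections, key=lambda d: d[0], reverse=True):
        if is_tp:
            tp += 1
        else:
            fp += 1
        precision = tp / (tp + fp)
        if precision >= min_precision:
            best_recall = max(best_recall, tp / num_ground_truth)
    return best_recall


# Made-up detections for illustration only.
sample = [(0.9, True), (0.8, True), (0.7, False), (0.6, True)]
print(recall_at_precision(sample, num_ground_truth=4))
```

With the sample above, the single false positive at 0.7 caps usable recall at the two detections ranked above it. This is why the metric suits alarm-style systems where false alerts are costly.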

The decision template

When deciding whether a new run "wins":

- Did it improve the comparison metric by more than noise (~0.5 mAP points, about 0.005 on the 0-1 scale used elsewhere in this lesson)?

- Does it pass a separate sanity check: is every class's AP at least 90% of what that class scored in the previous run?

- Has it been validated on the test set (not val)?

- Did latency stay within budget?

If all four hold, it wins. If only some do, the answer is "more experiments," not "ship it."
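The four questions above can be written down as a single function so the decision is applied the same way every time. This is a sketch under stated assumptions: `RunResult` and `wins` are hypothetical names, the noise threshold is expressed as 0.005 on a 0-1 scale, and "validated on the test set" is interpreted simply as "a test-set score exists". The pallet AP and overall mAP values come from the example note later in this lesson; the test score, latency, and budget are made up.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class RunResult:
    name: str
    val_map: float                  # comparison metric on val, 0-1 scale
    per_class_ap: Dict[str, float]  # class name -> AP on val
    test_map: Optional[float]       # None until evaluated on the held-out test set
    latency_ms: float               # measured on the target hardware


def wins(new, prev, latency_budget_ms, noise=0.005, min_class_ratio=0.9):
    """Apply the four-question template; returns (verdict, per-check results)."""
    checks = {
        # 1. Improvement larger than run-to-run noise.
        "beats_noise": new.val_map - prev.val_map > noise,
        # 2. No class falls below 90% of its previous AP (a missing class counts as 0).
        "no_class_regression": all(
            new.per_class_ap.get(cls, 0.0) >= min_class_ratio * ap
            for cls, ap in prev.per_class_ap.items()
        ),
        # 3. A test-set evaluation has actually been run.
        "validated_on_test": new.test_map is not None,
        # 4. Latency stays within budget.
        "latency_ok": new.latency_ms <= latency_budget_ms,
    }
    return all(checks.values()), checks


v2 = RunResult("v2_imgsz_1024", 0.51, {"pallet": 0.31}, None, 18.0)
v3 = RunResult("v3_pallets_added", 0.59, {"pallet": 0.58}, 0.57, 18.5)
verdict, checks = wins(v3, v2, latency_budget_ms=25.0)
print(verdict, checks)
```

Returning the individual checks alongside the verdict keeps the "only some" case informative: you can see which question failed and plan the next experiment around it.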

Tune hyperparameters on val. Reserve the test set for the final go/no-go before deployment. If you compare ten runs on the test set, the test set is now training data and your number is dishonest.

Archive the lessons

Every meaningful run should produce a one-paragraph note: what you tried, what you saw, and what to try next. Platform has a notes field per run — use it.

v3_pallets_added:

Hypothesis: pallet AP was 0.31 because too few examples (7 in val).

Change: collected 80 more pallet images from camera-3 and camera-7, retained existing labels, retrained from v2 best.pt with lr0=0.001.

Result: pallet AP 0.31 → 0.58. Overall mAP 0.51 → 0.59.

Next: forklift confidence still drops at night; collect dawn/dusk batches.

These notes are the most valuable artifact of the project. Models go stale; lessons don't. If your team prefers an external tracker, the ClearML and TensorBoard integrations expose the same per-epoch metrics Platform stores natively.
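If you write notes from a script or notebook, a small template helps make sure none of the four parts of the v3_pallets_added example note gets skipped. This is a sketch only; `RunNote` and its field names are hypothetical, and the rendered text is meant to be pasted into whatever notes field your tracker provides.

```python
from dataclasses import dataclass, fields


@dataclass
class RunNote:
    run: str
    hypothesis: str
    change: str
    result: str
    next_step: str

    def render(self):
        """Return the note as text, refusing to render if any part is blank."""
        missing = [f.name for f in fields(self) if not getattr(self, f.name).strip()]
        if missing:
            raise ValueError(f"note is missing: {', '.join(missing)}")
        return (
            f"{self.run}:\n"
            f"Hypothesis: {self.hypothesis}\n"
            f"Change: {self.change}\n"
            f"Result: {self.result}\n"
            f"Next: {self.next_step}"
        )


note = RunNote(
    run="v3_pallets_added",
    hypothesis="pallet AP was 0.31 because too few examples (7 in val).",
    change="collected 80 more pallet images, retrained from v2 best.pt.",
    result="pallet AP 0.31 -> 0.58. Overall mAP 0.51 -> 0.59.",
    next_step="collect dawn/dusk batches.",
)
print(note.render())
```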

Launch two runs that differ in one thing — image size, model size, or dataset version. Compare them in Platform's experiment view. Write the one-paragraph note for each. The discipline is the value, not the runs.

You've launched at least two Platform runs and compared them.

Each run has a descriptive name and a one-paragraph note.

You've decided which run to deploy based on a single, pre-chosen metric.

We've picked a winner. Next: turning that winner into a deployed endpoint.