From Prototype to Pipeline

The repeating shape of a CV team's quarter — and the artifacts that make it sustainable.

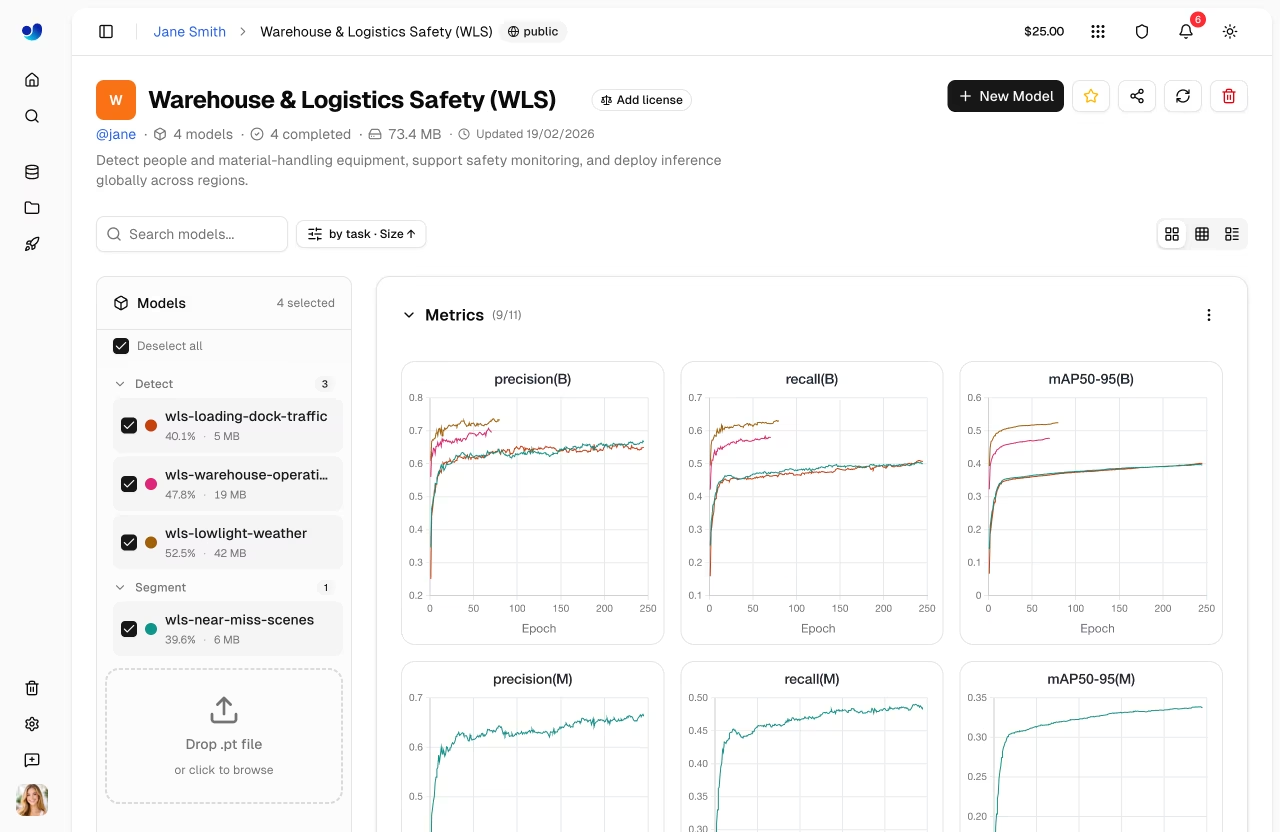

After the first deployment, the project enters a steady state. Every month or so the same cycle repeats: drift hits, you collect data, retrain, validate, and redeploy. Done well (and the Ultralytics customers page is full of teams running this loop quietly), this is calm and repeatable. Done badly, it's a panic on a dashboard. The difference is mostly in the artifacts you set up before you're under pressure.

Set up the artifacts and rituals that make a CV project sustainable for a year — not just shipped once.

Holdout set, refreshed quarterly.

Drift dashboards with named owners.

Versioned datasets, versioned deployments, traceable through to runs.

A weekly review ritual.

Hands-on

The artifacts

Five things every long-running CV project should have. Platform makes most of them automatic — but they need someone explicitly responsible.

1. The holdout set

200–500 production-realistic labeled frames. Refresh quarterly. The single most reliable accuracy signal for model monitoring.

2. The drift dashboard

Confidence histogram, object size, detection volume — three signals, plotted weekly. Owner: someone who looks at it.
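As a rough illustration, the three signals can be computed from one week of logged detections with nothing more than the standard library. This is a minimal sketch, not a Platform API: the `Detection` record, its field names, and the `weekly_signals` helper are all hypothetical, and it assumes you log a confidence and a normalized box area per detection.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean


@dataclass
class Detection:
    """One production detection (hypothetical record layout)."""
    confidence: float  # model confidence in [0, 1]
    box_area: float    # box area as a fraction of the frame, in [0, 1]


def weekly_signals(detections, n_bins=10):
    """Summarize one week of detections into the three dashboard signals."""
    # Confidence histogram: count detections per equal-width bin.
    # A confidence of exactly 1.0 is folded into the last bin.
    counts = Counter(
        min(int(d.confidence * n_bins), n_bins - 1) for d in detections
    )
    histogram = [counts.get(i, 0) for i in range(n_bins)]
    return {
        "confidence_histogram": histogram,
        # Object size: mean normalized box area (0.0 if the week was empty).
        "mean_box_area": mean(d.box_area for d in detections) if detections else 0.0,
        # Detection volume: total detections logged this week.
        "detection_volume": len(detections),
    }
```

Plotting these three values week over week is enough for the dashboard described here. A shift in the histogram shape, a steady change in mean box area, or a jump in volume are the things the owner should be looking for.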

3. The MLOps retraining runbook

A document, not a culture. When drift fires, what does the on-call do? Step by step. Half a page is plenty.

# Retraining runbook

## Trigger

- Holdout mAP drops by > 1.0 point for 3 consecutive weeks, OR

- Confidence-distribution KS p < 0.01 + per-class AP regression on holdout.

## Steps

1. Pull last 30 days of production frames into Platform Discovery.

2. Apply event-driven sampling at 1 fps with current best.pt as the trigger model.

3. Auto-annotate with current best.pt @ conf=0.5; route to review queue.

4. Review until drift-class edit rate stabilizes (~200 frames/class typical).

5. Promote new annotations into a new dataset version (v_(n+1)).

6. Train from v_n best.pt with lr0=0.001 for 50 epochs.

7. Validate against holdout AND drift-flagged frames.

8. Deploy with 10%/50%/100% rollout over 24 hours.

9. Update holdout with 50 newly labeled drift-frame examples.

## Owner

- ML lead is the DRI.

- On-call runs the playbook; ML lead reviews the v_(n+1) deployment before 100%.

4. Versioned everything

Every deployment traces back to:

- A specific training run on the Platform train surface.

- A specific dataset version under Platform datasets.

- A specific config — the same arguments documented in train mode.

Platform does this automatically. The discipline is to use the trace — when accuracy drops, reach for the deployment log first, not the source code.
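To make "use the trace" concrete, here is a minimal sketch of comparing two deployment records to see what changed between them. Platform keeps this trace for you; the `DeploymentRecord` class, its field names, and `diff_deployments` are hypothetical and only illustrate the kind of question the deployment log should answer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentRecord:
    """What one deployment traces back to (all field names are hypothetical)."""
    deployment_id: str
    run_id: str           # the training run that produced the weights
    dataset_version: str  # the dataset version that run trained on
    config: tuple         # (argument, value) pairs used for training


def diff_deployments(old, new):
    """Return the traced fields that differ between two deployments."""
    changes = {}
    for name in ("run_id", "dataset_version"):
        if getattr(old, name) != getattr(new, name):
            changes[name] = (getattr(old, name), getattr(new, name))
    # Compare training arguments key by key so a single changed value stands out.
    old_cfg, new_cfg = dict(old.config), dict(new.config)
    for key in sorted(set(old_cfg) | set(new_cfg)):
        if old_cfg.get(key) != new_cfg.get(key):
            changes[f"config.{key}"] = (old_cfg.get(key), new_cfg.get(key))
    return changes
```

If accuracy dropped after a redeploy, a diff like this between the last good deployment and the current one narrows the search to a new run, a new dataset version, or a changed training argument before anyone opens the source code.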

5. The weekly review

15 minutes, on a recurring calendar invite. Two questions:

- Anything red on the dashboards?

- Did anything change in the deployment, and if so, why?

Most weeks the answers are "no" and "no" and the meeting takes 4 minutes. The discipline is the calendar, not the meeting.

The shape of a quarter

A typical CV quarter on Platform looks like this:

Week 1 : Drift alert. Sample, label, retrain. Deploy v_(n+1).

Week 2-3: Quiet. Dashboards green.

Week 4 : Add a new class. Schema migration on dataset, retrain v_(n+2).

Week 5-7: Quiet.

Week 8 : Camera fleet expansion. New region, new dataRegion concerns. Migrate.

Week 9-11: Quiet.

Week 12 : Quarterly holdout refresh + review.

Two retrains, one schema change, one infrastructure change, one review per quarter. That's the steady state of a healthy CV project.

Where projects get stuck

Patterns that mean a project is in trouble:

- No holdout set. "We can't tell if it's getting better." Build one immediately and revisit the model monitoring guide.

- No drift dashboard. "Production seems fine?" The word "seems" is doing too much work; see the continual learning glossary entry for the loop you're missing.

- No naming convention. "Which checkpoint is in prod?" If you can't answer in 30 seconds, the deployment isn't traceable. The Platform quickstart shows the run-naming pattern that scales.

- Retraining is ad hoc. Every retrain is an event; the runbook prevents it from being a crisis. Fold hyperparameter tuning into the runbook so each iteration is also a controlled experiment.

- One person knows everything. Bus factor 1. Documentation isn't optional.

Each of these is fixable in a sprint. The project that has all five fixed by month 2 is the one still healthy in month 12. For self-hosted serving along the way, Triton Inference Server is the canonical handoff target.

You've finished the course

You now have:

- A trained, validated model.

- An exported, optimized runtime.

- Real-time tracking and pipelines.

- A multi-stream architecture.

- A managed Platform deployment.

- Monitoring and a retraining runbook.

That's a complete production CV system, end to end. The next time someone asks you to "ship a CV model," you have a checklist for the whole quarter — and the artifacts to keep it healthy.

Write your project's retraining runbook in the format above. Half a page. Include the trigger, the steps, and the owner. The exercise of writing it is half the value.
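When you write the trigger, it helps to phrase it so that a script could check it. Below is a minimal sketch of the first trigger from the example runbook above, under one stated interpretation: the drop is measured against a fixed baseline mAP recorded at deployment time, on a 0 to 100 scale, and the trigger fires only when each of the last three weekly holdout values is more than 1.0 point below it. The function name and parameters are hypothetical. The second trigger (the KS test plus per-class AP regression) is left out because it needs a statistics library.

```python
def map_drop_trigger(baseline_map, weekly_map, drop_points=1.0, weeks=3):
    """Return True if the last `weeks` holdout mAP values all sit more than
    `drop_points` below the baseline (all values on a 0-100 scale)."""
    if len(weekly_map) < weeks:
        return False  # not enough history to call it a sustained drop
    recent = weekly_map[-weeks:]
    return all(baseline_map - value > drop_points for value in recent)
```

With a baseline of 72.0, the weekly series `[71.5, 70.8, 70.5, 70.2]` fires the trigger, while `[70.5, 71.4, 70.2]` does not, because the middle week recovered to within one point of the baseline. If your team reads "drops by > 1.0 point" differently, for example week over week, write that reading into the runbook explicitly.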

git add -A && git commit -m "docs(academy): completed Build with Ultralytics Platform"

Your project has a holdout set, a drift dashboard, and a retraining runbook.

Each deployment is traceable to a run + dataset version.

You have a recurring weekly review on someone's calendar.

Course complete — take the final exam to earn the Build with Ultralytics Platform certificate.