Monitor in Production

Watch latency, detection volume, and drift — and know when to retrain.

A deployed model is a fixed object in a moving world. Cameras get repositioned, lighting changes seasonally, new product variants appear. Without model monitoring you'll find out about the data drift when a customer complains. With the right monitoring — see the model monitoring & maintenance guide — you'll spot it weeks earlier.

Use Platform's built-in monitoring to detect latency, accuracy, and drift regressions before they reach users.

Latency dashboard — alert on p95 above your budget.

Detection volume — alert on sudden drops.

Confidence distribution — KS test against baseline weekly.

Holdout mAP — weekly cron, alert on drops > 1 point (0.01).

Hands-on

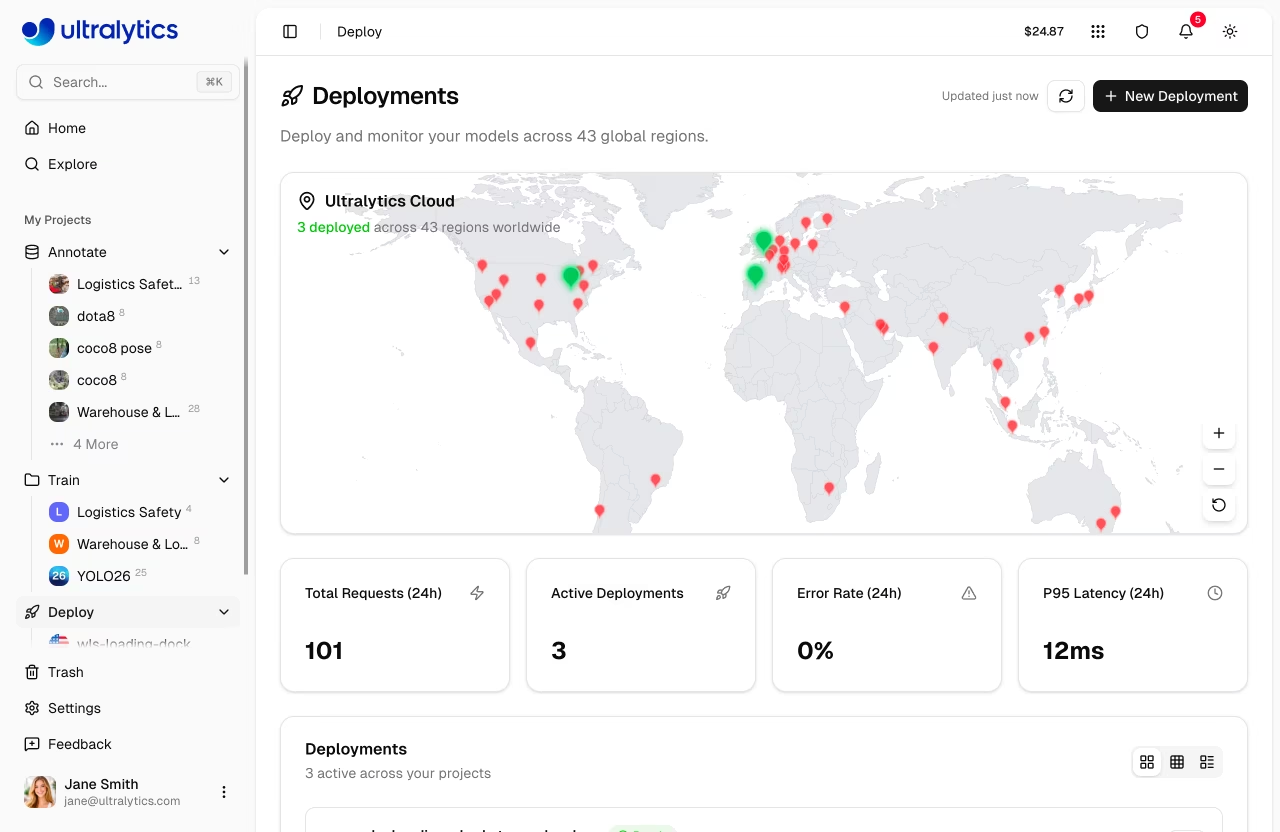

What Platform monitoring covers out of the box

Every deployment has a dashboard with:

| Metric | Cadence | What it catches |
|---|---|---|
| Request rate | per second | Traffic spikes / drops |
| p50 / p95 / p99 latency | per minute | Performance regressions |
| Error rate | per minute | Auth, payload, infra issues |
| Detection count per request | per request | Drift indicator |
| Mean confidence | per request | Drift indicator |
| Region distribution | per day | Where the traffic is coming from |
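To make the latency row concrete, here is a minimal sketch of how p50/p95/p99 can be computed for one window of request latencies using Python's standard library. The function name `latency_percentiles` and the sample window are made up for illustration; this is not Platform's implementation.

```python
import statistics


def latency_percentiles(latencies_ms):
    """Return p50/p95/p99 for one window of request latencies (milliseconds)."""
    # quantiles(n=100) returns the 99 cut points for percentiles 1..99,
    # so index 49 is p50, index 94 is p95, and index 98 is p99.
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


# Hypothetical one-minute window: most requests are fast, a few are slow.
window = [20.0] * 95 + [200.0] * 5
stats = latency_percentiles(window)
print(stats)
```

In this made-up window the median stays at 20 ms while p99 sits at 200 ms, which is why the tail percentiles, not the average, are the ones worth alerting on.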

Alerts you should configure

Platform's alert system fires on metric thresholds. The alerts that actually matter:

| Alert condition | Evaluation window |
|---|---|
| p95 latency > budget | 5 consecutive minutes |
| Error rate > 1% | 5 consecutive minutes |
| Detection rate drops > 50% | 10 consecutive minutes |
| Mean confidence shifts > 2σ | Daily |
| Holdout mAP drops > 1 point | Weekly |

Each alert should map to a runbook entry — what does on-call do when this fires?
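The "consecutive minutes" rule is what keeps a single noisy sample from paging someone. A minimal sketch of that logic, assuming you have per-minute metric values as a plain list (the function name `sustained_breach` and the numbers are hypothetical, not part of Platform's alert API):

```python
def sustained_breach(samples, threshold, window):
    """Return True only if the last `window` samples all exceed `threshold`."""
    if window <= 0:
        raise ValueError("window must be positive")
    recent = samples[-window:]
    # Require a full window so a short history never fires the alert.
    return len(recent) == window and all(value > threshold for value in recent)


# Hypothetical per-minute p95 latency (ms) against a 100 ms budget.
p95_by_minute = [80, 120, 130, 110, 140, 150]
print(sustained_breach(p95_by_minute, threshold=100, window=5))
```

A single dip back under budget inside the window resets the condition, which is usually the behavior you want for latency and error-rate alerts.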

The holdout job

The single most important production check is the weekly holdout validation:

- You've reserved 200–500 production-realistic labeled images as a holdout (lesson 4).

- Platform schedules a weekly cron that runs validation against the current deployment.

- mAP@0.5:0.95 is logged and graphed over time — see the YOLO performance metrics guide for the math.

    mAP@0.5:0.95 over time

    0.62 │ ●●●●●●●●●●●●●
    0.60 │              ●●●●●●●
    0.58 │                     ●● ← drift!
    0.56 │                       ●●●
         └──────────────────────────────▶ weeks

A clear downtrend over 3+ weeks is your early signal to investigate.
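One way to turn the weekly mAP log into the two signals described here (a drop of more than 1 point, and a multi-week downtrend) is sketched below. It assumes the drop is measured against the mAP recorded at deployment time; the function name `holdout_alerts`, its parameters, and the sample history are illustrative only.

```python
def holdout_alerts(baseline, history, drop_points=0.01, trend_weeks=3):
    """Check weekly holdout mAP values (oldest first) against two rules.

    baseline: mAP recorded when the model was deployed (assumed reference).
    """
    alerts = []
    # Rule 1: latest value is more than `drop_points` below the baseline.
    if history and baseline - history[-1] > drop_points:
        alerts.append("drop")
    # Rule 2: mAP fell week over week for `trend_weeks` consecutive weeks.
    recent = history[-(trend_weeks + 1):]
    if len(recent) == trend_weeks + 1 and all(
        later < earlier for earlier, later in zip(recent, recent[1:])
    ):
        alerts.append("downtrend")
    return alerts


print(holdout_alerts(0.62, [0.62, 0.62, 0.61, 0.60, 0.58]))
```

With only 200 to 500 holdout images, week-to-week noise of a fraction of a point is normal, so the trend rule is often the more trustworthy of the two.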

Drift signals

Two cheap drift signals you should plot weekly — both are also useful for upstream anomaly detection:

- Confidence histogram — production model confidences for the current week. Compare to the histogram from deployment week. If it shifts left, the input domain has drifted.

- Object size distribution — width × height of detected boxes. Camera repositioning, lens changes, or a new deployment site usually show up here first.

A two-sample Kolmogorov-Smirnov (KS) test against the deployment-week baseline turns either comparison into a statistic and a p-value:

    week  1  ▁▂▄█▇▄▁
    week 12  ▁▃▆█▆▂▁  KS statistic 0.04, p = 0.31  ← stable
    week 24  ▂▅█▇▃▁▁  KS statistic 0.18, p < 0.01  ← drifted

When the p-value drops below your threshold (commonly 0.01), the dashboard turns yellow.
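If you want to reproduce this check outside the dashboard, the sketch below computes the two-sample KS statistic and an approximate p-value using only the standard library. The p-value uses the usual asymptotic Kolmogorov series with a small-sample correction, so treat it as an approximation; the function name `ks_two_sample` and the synthetic data are assumptions for illustration, not what Platform runs internally.

```python
import math
from bisect import bisect_right


def ks_two_sample(baseline, current):
    """Return (D, approximate p-value) for a two-sample KS test."""
    a, b = sorted(baseline), sorted(current)
    n, m = len(a), len(b)
    if n == 0 or m == 0:
        raise ValueError("both samples must be non-empty")
    # D is the largest gap between the two empirical CDFs,
    # evaluated at every observed value.
    d = max(abs(bisect_right(a, x) / n - bisect_right(b, x) / m) for x in a + b)
    # Asymptotic p-value: 2 * sum((-1)^(j-1) * exp(-2 j^2 lam^2)).
    en = math.sqrt(n * m / (n + m))
    lam = (en + 0.12 + 0.11 / en) * d
    if lam < 0.2:
        return d, 1.0  # the series is numerically 1 for very small lam
    p = 2 * sum((-1) ** (j - 1) * math.exp(-2 * j * j * lam * lam) for j in range(1, 101))
    return d, min(max(p, 0.0), 1.0)


# Synthetic confidences: the "current" week is shifted left by 0.3.
deploy_week = [i / 100 for i in range(100)]
this_week = [i / 100 - 0.3 for i in range(100)]
d_stat, p_value = ks_two_sample(deploy_week, this_week)
print(d_stat, p_value)
```

The same function works for the object size distribution: pass box areas instead of confidences. If SciPy is available, `scipy.stats.ks_2samp` is a well-tested alternative.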

When to retrain

Drift signals don't always mean "retrain immediately." Common patterns:

| Pattern | Action |
|---|---|
| Confidence shift, mAP holding | Watch. Could be a benign domain shift the model handles. |
| Confidence shift + mAP drop | Retrain. Real accuracy regression. |
| Detection volume drop, confidence stable | Investigate. Might be a bug, a camera offline, or a quieter scene. |
| Latency regression | Hardware / infra investigation, not the model. |

The retrain decision is also a data decision: what new data do you need? Sample drift-flagged frames into the labeling queue first.
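The pattern table can be written down as a small triage function so the on-call runbook and the code agree. This is a sketch of the table above, nothing more: the function name `triage`, the boolean inputs, and the fallback for combinations the table does not cover are all assumptions.

```python
def triage(confidence_shift, map_drop, volume_drop, latency_regression):
    """Map the four monitoring signals to the actions in the pattern table."""
    actions = []
    if confidence_shift and map_drop:
        actions.append("retrain")
    elif confidence_shift:
        actions.append("watch")
    if volume_drop and not confidence_shift:
        actions.append("investigate volume")
    if latency_regression:
        actions.append("investigate infra")
    # Fallback (not in the table): a signal fired but no pattern matched.
    if not actions and (map_drop or volume_drop):
        actions.append("review manually")
    return actions


print(triage(confidence_shift=True, map_drop=True, volume_drop=False, latency_regression=False))
```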

A retraining loop in practice

Once you've done one retrain, codify it — this is the continual learning cycle in production form:

- Detect drift — alert fires.

- Sample drifted frames — pull recent production frames into Platform Discovery.

- Auto-annotate + review — using the previous best model as the inference pass, ranked by active learning uncertainty.

- Retrain — fresh run from previous best.pt with lr0=0.001 (lesson 8).

- Validate against holdout + recent frames — both must improve.

- Deploy with rollout — gradual traffic shift; monitor for regression.

- Lock the new baseline — update the holdout with newly labeled frames over time.
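The validation and rollout steps above are the ones most worth automating, because they are the gate between a retrained model and production traffic. A minimal sketch, assuming you already have mAP numbers for both models on the holdout and on recent frames; `should_promote`, `staged_rollout`, the traffic stages, and the health check are hypothetical names and values, not Platform features.

```python
def should_promote(previous, candidate, min_gain=0.0):
    """Promote only if the candidate beats the previous model on every eval set."""
    return all(candidate[name] - previous[name] > min_gain for name in previous)


def staged_rollout(stages, is_healthy):
    """Shift traffic stage by stage; return the final traffic share in percent.

    `is_healthy(share)` stands in for whatever regression check you run
    at each stage. The first failure rolls the new model back to 0%.
    """
    for share in stages:
        if not is_healthy(share):
            return 0
    return stages[-1]


previous_map = {"holdout": 0.58, "recent": 0.50}
candidate_map = {"holdout": 0.61, "recent": 0.57}

if should_promote(previous_map, candidate_map):
    final_share = staged_rollout([5, 25, 50, 100], is_healthy=lambda share: True)
else:
    final_share = 0
print(final_share)
```

Requiring a gain on both sets guards against a model that fits the newly drifted frames while regressing on the original holdout.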

The whole loop, on Platform, takes hours of engineer time and days of training/validation. Without Platform — and without a coherent MLOps story — it would take a sprint of glue work.

Set up two alerts on your deployment: one on p95 latency, one on detection volume drop. Synthetically trigger them — send malformed images to spike errors, or pause traffic to drop detection volume. Confirm the alert fires.

Your deployment has at least two configured alerts.

You have a weekly holdout validation job running.

You can name your retraining trigger — what signal would cause you to retrain.

Last two lessons: regions/compliance, and the operational shape of a long-running CV project.