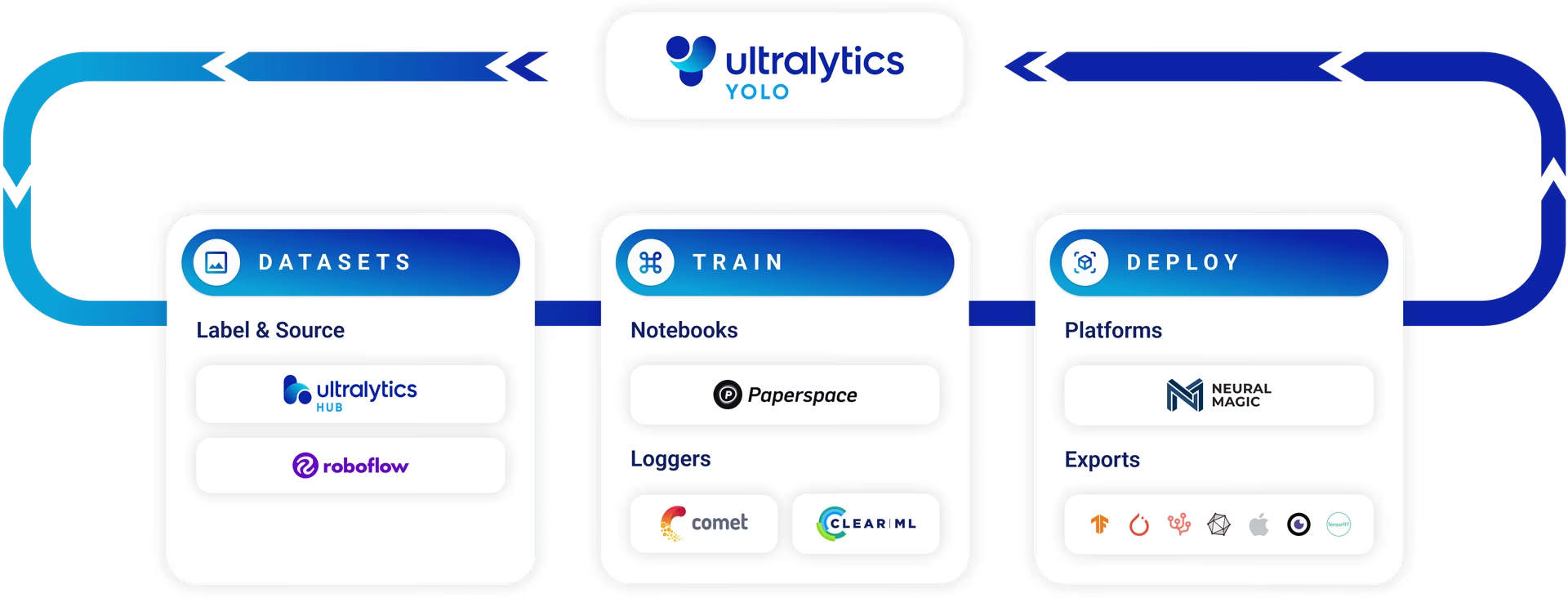

Export for Deployment

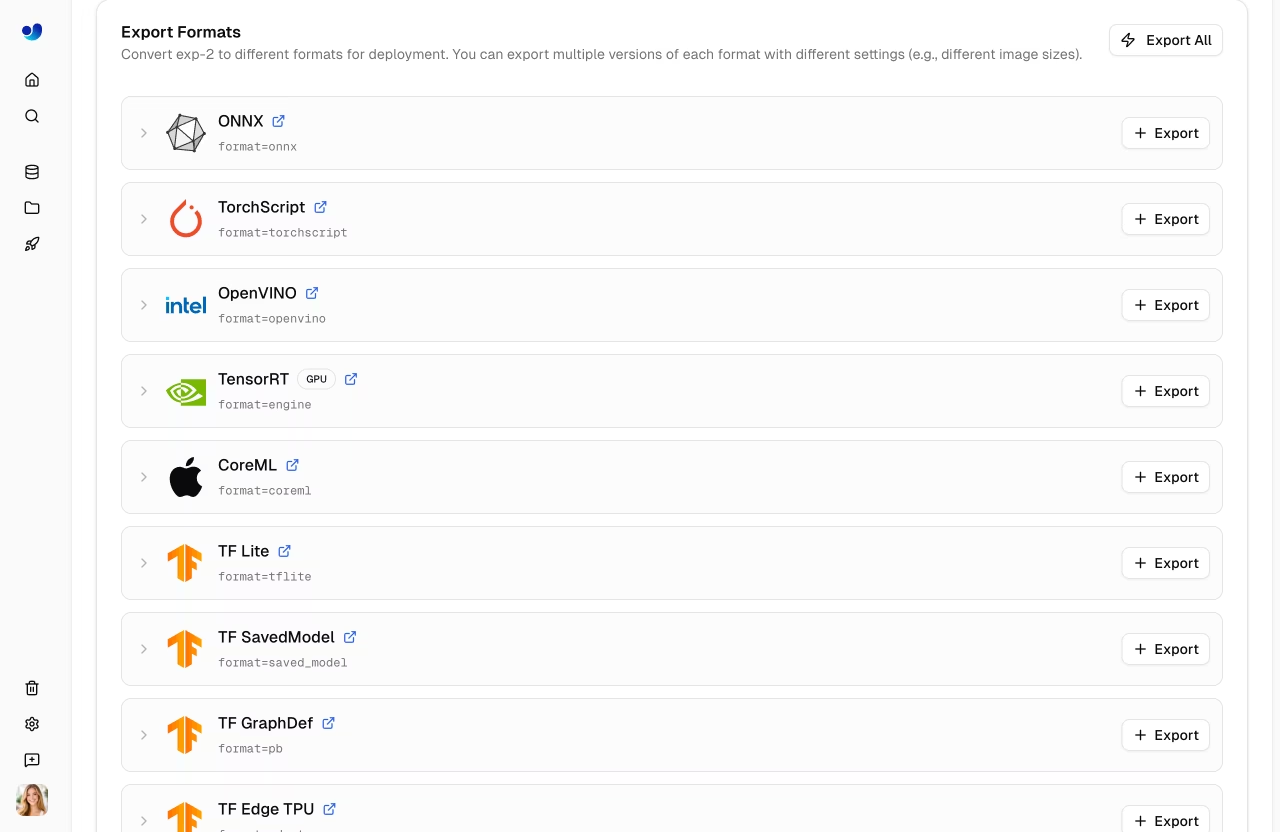

ONNX, TensorRT, CoreML, OpenVINO — pick the format your runtime actually wants.

Your trained best.pt is a PyTorch checkpoint. Production runtimes usually want something else: ONNX for cross-platform, TensorRT for NVIDIA edge AI, CoreML for iOS, OpenVINO for Intel CPUs. Export mode handles all of these in one line. The hard part is picking the right one.

Export your trained model to the right runtime format for your deployment target, and verify the export still produces correct detections.

model.export(format='onnx') is the place to start.

TensorRT for NVIDIA Jetson / dGPU; CoreML for Apple; OpenVINO for Intel CPU; TFLite for Android.

Run a sanity check on the exported file before you ship.

Hands-on

What export actually does

model.export() traces your PyTorch model into a deployment format. Each format has its own runtime — you pick based on what your application is built on.

from ultralytics import YOLO

model = YOLO("runs/detect/forklift_v1/weights/best.pt")

model.export(format="onnx") # cross-platform: onnxruntime

model.export(format="engine", half=True) # NVIDIA TensorRT, FP16

model.export(format="coreml") # Apple iOS / macOS

model.export(format="openvino") # Intel CPU / iGPU

model.export(format="tflite", int8=True) # Android / edge, INT8 quantized

Export mode writes to the same directory as your weights: best.onnx, best.engine, etc.

Pick by deployment target

| Target | Format | Notes |
|---|---|---|
| Cross-platform server (Linux + Win + Mac) | onnx | onnxruntime supports CPU and GPU; great default |
| NVIDIA Jetson, dGPU | engine (TensorRT) | Fastest on NVIDIA. Engine is hardware-specific; re-export per device |
| iPhone / iPad / Mac | coreml | Native, leverages Apple Neural Engine |
| Intel laptop / server CPU | openvino | Real CPU speedups via Intel kernels |
| Android | tflite | INT8 quantization recommended for mobile |
| Web browser | onnx (with onnxruntime-web) or tfjs | Smaller models work; n and s are realistic |

A .engine exported on an A100 will not run on a Jetson Orin. Export on the target device, or use TensorRT's cross-platform builder mode if you have to. ONNX is portable; engines are not.
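The table above can be written down as a small lookup, so the format choice is explicit in a deployment script rather than remembered. This is a minimal sketch: `EXPORT_ARGS` and `pick_export_args` are hypothetical names (not part of Ultralytics), the target labels are illustrative, and the keyword arguments simply mirror the export calls shown earlier.

```python
# Hypothetical helper: maps a deployment target label to model.export() kwargs.
# The target labels are illustrative; the formats mirror the table above.
EXPORT_ARGS = {
    "server": {"format": "onnx"},
    "nvidia": {"format": "engine", "half": True},
    "apple": {"format": "coreml"},
    "intel": {"format": "openvino"},
    "android": {"format": "tflite", "int8": True},
}


def pick_export_args(target: str) -> dict:
    """Return a copy of the export kwargs for a target; raise on unknown targets."""
    key = target.strip().lower()
    if key not in EXPORT_ARGS:
        known = ", ".join(sorted(EXPORT_ARGS))
        raise ValueError(f"unknown target {target!r}; expected one of: {known}")
    return dict(EXPORT_ARGS[key])


print(pick_export_args("android"))
```

With a loaded model, the result could be passed straight through, for example `model.export(**pick_export_args("nvidia"))`, run on the target device in the TensorRT case.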

Quantization: smaller and faster, sometimes worse

Model quantization stores weights at lower precision: 16-bit floats (FP16) or 8-bit integers (INT8) instead of 32-bit floats. The model is smaller and usually faster; accuracy drops slightly. (FP16 is the safest first step.)

model.export(format="onnx", half=True) # FP16: safe almost everywhere

model.export(format="tflite", int8=True) # INT8: needs calibration data

INT8 export needs calibration data: a few hundred representative images that the export uses to choose quantization ranges. Without good calibration, INT8 can lose 5–10 mAP points; with good calibration, often less than 1.
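To see why the calibration range matters, here is a toy sketch of symmetric INT8 quantization on a single list of values. It is not how the exporter quantizes a network internally; `quantize_int8`, `mean_abs_error`, and the sample numbers are all made up for illustration. The point is only that a range matching the data keeps rounding error small, while a range taken from unrepresentative data wastes most of the 8-bit levels.

```python
def quantize_int8(values, calib_max):
    """Symmetric INT8: map [-calib_max, calib_max] onto [-127, 127], then map back."""
    scale = calib_max / 127.0
    restored = []
    for v in values:
        q = round(v / scale)
        q = max(-127, min(127, q))  # clamp to the INT8 range
        restored.append(q * scale)
    return restored


def mean_abs_error(a, b):
    """Average absolute difference between two equal-length lists."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


# Hypothetical activations seen at inference time.
activations = [0.02, -0.15, 0.40, 0.75, -0.90, 0.33]

# Good calibration: the range matches the data (largest magnitude is 0.90).
good = quantize_int8(activations, calib_max=0.90)
# Poor calibration: unrepresentative images suggested a far wider range.
poor = quantize_int8(activations, calib_max=20.0)

print(f"good calibration error: {mean_abs_error(activations, good):.4f}")
print(f"poor calibration error: {mean_abs_error(activations, poor):.4f}")
```

The poorly calibrated run reports a noticeably larger error, because each integer step now covers a much wider slice of the value range.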

Verify the exported model

Don't trust the export silently. Run inference with the exported file and compare to the original:

from ultralytics import YOLO

original = YOLO("runs/detect/forklift_v1/weights/best.pt")

exported = YOLO("runs/detect/forklift_v1/weights/best.onnx")

img = "https://ultralytics.com/images/bus.jpg"

print(len(original(img)[0].boxes), "detections from .pt")

print(len(exported(img)[0].boxes), "detections from .onnx")

You should see the same count and roughly the same confidences. Off-by-one differences are normal (NMS at fp32 vs fp16); large differences mean the export went wrong.
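The "same count, roughly the same confidences" rule can be turned into a crude automated check. The sketch below is an assumption-laden illustration: `detections_match` is a hypothetical helper, the thresholds (`max_count_diff=1`, `conf_tol=0.05`) are arbitrary choices rather than recommended values, and it pairs detections by confidence rank instead of by box overlap.

```python
def detections_match(conf_a, conf_b, max_count_diff=1, conf_tol=0.05):
    """Compare two lists of detection confidences from the same image.

    Returns True when the counts differ by at most max_count_diff and the
    confidences, paired in descending order, differ by at most conf_tol.
    """
    if abs(len(conf_a) - len(conf_b)) > max_count_diff:
        return False
    a = sorted(conf_a, reverse=True)
    b = sorted(conf_b, reverse=True)
    # zip stops at the shorter list, so one extra low-confidence box is ignored.
    return all(abs(x - y) <= conf_tol for x, y in zip(a, b))


# Made-up confidences standing in for the .pt and .onnx results.
pt_conf = [0.91, 0.88, 0.84, 0.41]
onnx_conf = [0.90, 0.88, 0.85]
broken_conf = [0.91, 0.52]

print(detections_match(pt_conf, onnx_conf))
print(detections_match(pt_conf, broken_conf))
```

In the script above, the confidence lists would come from the results themselves, for example `original(img)[0].boxes.conf.tolist()`.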

Run validation on the exported model

The strongest verification: re-run yolo val against the exported file, then time it with yolo benchmark so you know its real fps before you ship:

yolo val model=runs/detect/forklift_v1/weights/best.onnx data=my_dataset/data.yaml

mAP should be within 0.5 points of the original. If it's substantially lower, something in the export is wrong, usually a calibration or precision issue.
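The 0.5 threshold above is easy to misapply when mAP is reported on a 0 to 1 scale, as in the `metrics.box.map` value printed with three decimals in the solution. Assuming the threshold is meant in percentage points (an interpretation, not something the validator enforces), it corresponds to 0.005 on that scale. The helper names below are hypothetical and the mAP values are made up.

```python
def map_drop_points(map_pt, map_exported):
    """Drop in mAP, in percentage points, given mAP values on a 0-1 scale."""
    return (map_pt - map_exported) * 100.0


def export_is_acceptable(map_pt, map_exported, tol_points=0.5):
    """True when the exported model loses at most tol_points of mAP."""
    return map_drop_points(map_pt, map_exported) <= tol_points


# Made-up validation results for the .pt file and two exports.
print(export_is_acceptable(0.612, 0.609))  # small drop
print(export_is_acceptable(0.612, 0.571))  # large drop
```

A check like this could run right after validating both files, so a bad export fails loudly instead of being noticed in production.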

You've shipped

You now have:

- A trained best.pt.

- Validation evidence it works.

- An exported file in the format your runtime wants.

- A sanity check that the exported file still works.

That is the full loop. Production CV projects repeat it regularly, often monthly: more data, retrain, validate, export, deploy. The next course, Ultralytics YOLO in Production, picks up from this exported file and gets it into a real, observable, latency-tuned production system.

Export your trained model to ONNX. Run yolo val model=best.onnx data=... and compare mAP to the .pt version. The two numbers should be within 0.5 mAP points of each other.

git add -A && git commit -m "feat(deploy): exported best.pt to onnx for production runtime"

You've exported your trained model to the format your deployment target wants.

Validation on the exported model is within 0.5 mAP of the .pt version.

You can name why you picked that format over the alternatives.

Solution

from ultralytics import YOLO

# Train

model = YOLO("yolo26n.pt")

model.train(data="my_dataset/data.yaml", epochs=100, imgsz=640, name="forklift_v1")

# Validate

metrics = model.val()

print(f"mAP@0.5:0.95 = {metrics.box.map:.3f}")

# Export

model.export(format="onnx", half=True, dynamic=True)

Course complete: take the final quiz to earn your Train your first YOLO model certificate.