Resume and Iterate

Continue from where training stopped, fine-tune best.pt, and run experiments cheaply.

Real CV projects are a sequence of training runs, not a single epic one. Each round adds data — often guided by active learning — fixes a labeling bug, or tries a new image size. Knowing how to cheaply restart with fine-tuning instead of retraining from scratch is what makes that loop tight.

Resume an interrupted training run, and start a new run from your previous best.pt instead of from a pretrained model.

- yolo train resume=True model=runs/detect/.../weights/last.pt continues the exact training run.
- YOLO('runs/.../best.pt').train(data=…) starts a new run, but from your weights.
- Tag runs with descriptive names: name=v2_added_pallets.

Hands-on

Resume an interrupted run

If a run was killed (power cut, disconnect, OOM at hour 8), resume training instead of starting over. Two options:

From the CLI:

    yolo train resume=True model=runs/detect/forklift_v1/weights/last.pt

From Python:

    from ultralytics import YOLO

    model = YOLO("runs/detect/forklift_v1/weights/last.pt")
    model.train(resume=True)

Resume picks up the exact optimizer state, learning-rate schedule, and epoch counter. It will not reset the schedule; it continues from where it stopped.

Resume only makes sense if the config hasn't changed. Different epoch count, image size, or data → it's a new run that starts from a checkpoint, not a resume. Use resume=False and pass the checkpoint as the model.
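The rule above can be written down as a small check. This is a minimal sketch, not part of Ultralytics: choose_start_mode and RESUME_SENSITIVE_KEYS are hypothetical names, and the two dicts stand in for the settings of the old run and of the run you are about to launch.

```python
# Hypothetical helper (not an Ultralytics API): decide between a true resume
# and a new run that merely starts from a checkpoint.

# Settings that, per the rule above, must be unchanged for a resume to make sense.
RESUME_SENSITIVE_KEYS = ("epochs", "imgsz", "data")


def choose_start_mode(old_cfg: dict, new_cfg: dict) -> dict:
    """Return whether to resume, plus the list of settings that changed."""
    changed = [k for k in RESUME_SENSITIVE_KEYS if old_cfg.get(k) != new_cfg.get(k)]
    if changed:
        # Config differs: treat it as a new run that starts from the checkpoint.
        return {"resume": False, "changed": changed}
    # Nothing relevant changed: continue the interrupted run as it was.
    return {"resume": True, "changed": []}


old = {"epochs": 100, "imgsz": 640, "data": "my_dataset/data.yaml"}
same = dict(old)
bigger = {**old, "imgsz": 1024}

print(choose_start_mode(old, same))
print(choose_start_mode(old, bigger))
```

The first call reports a plain resume; the second flags imgsz as changed, which is the cue to pass the checkpoint as the model and launch a new, separately named run.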

Iterate from your best.pt

Most "next round" experiments aren't resumes — they're fresh runs that warm-start from your last good model. This is plain transfer learning inside your own project:

    from ultralytics import YOLO

    model = YOLO("runs/detect/forklift_v1/weights/best.pt")  # warm start
    model.train(
        data="my_dataset/data.yaml",      # now with extra pallets
        epochs=50,                        # shorter: already trained on your data
        imgsz=640,
        name="forklift_v2_more_pallets",
        lr0=0.001,                        # lower LR: fine-tune, not retrain
    )

A lower learning rate is the key knob: you want to nudge the model, not reset it. lr0=0.001 (vs the default 0.01) is a sane fine-tune starting point. One caveat: in recent Ultralytics releases the default optimizer="auto" chooses the optimizer and learning rate itself and ignores lr0, so set the optimizer explicitly (for example optimizer="SGD") and check the training log to confirm the value was applied. For anything more systematic, the hyperparameter tuning guide covers sweeps and the genetic optimizer. Augmentation knobs are summarized in the data augmentation glossary.

Naming runs

Let your future self thank you: name every run after what changed.

| Bad | Good |
|---|---|
| train2 | v2_added_pallets |
| exp_final | v3_imgsz1024_pallet_recall |
| backup | v4_no_mosaic_aug |

    model.train(name="v2_added_pallets", ...)

A consistent naming scheme means you can compare results.csv across runs trivially. For richer experiment tracking, plug in the Comet or Weights & Biases integrations; both auto-log losses, metrics, and sample predictions per run.
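If you would rather not type run names by hand, the scheme in the table can be generated. The following is only a sketch: make_run_name is a hypothetical helper, not something Ultralytics provides, and the "v<number>_<what changed>" layout is simply the convention used in the examples above.

```python
import re


def make_run_name(version: int, *changes: str) -> str:
    """Build a name like 'v3_imgsz1024_pallet_recall' from a version and what changed."""
    parts = []
    for change in changes:
        # Lowercase, then collapse every run of non-alphanumeric characters into '_'.
        slug = re.sub(r"[^a-z0-9]+", "_", change.lower()).strip("_")
        if slug:
            parts.append(slug)
    if not parts:
        raise ValueError("describe at least one change, e.g. 'added pallets'")
    return f"v{version}_" + "_".join(parts)


print(make_run_name(2, "added pallets"))
print(make_run_name(3, "imgsz1024", "pallet recall"))
```

The returned string can be passed straight to the name argument of model.train. Raising on an empty description is a deliberate choice here: a run called v5_ is no better than train2.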

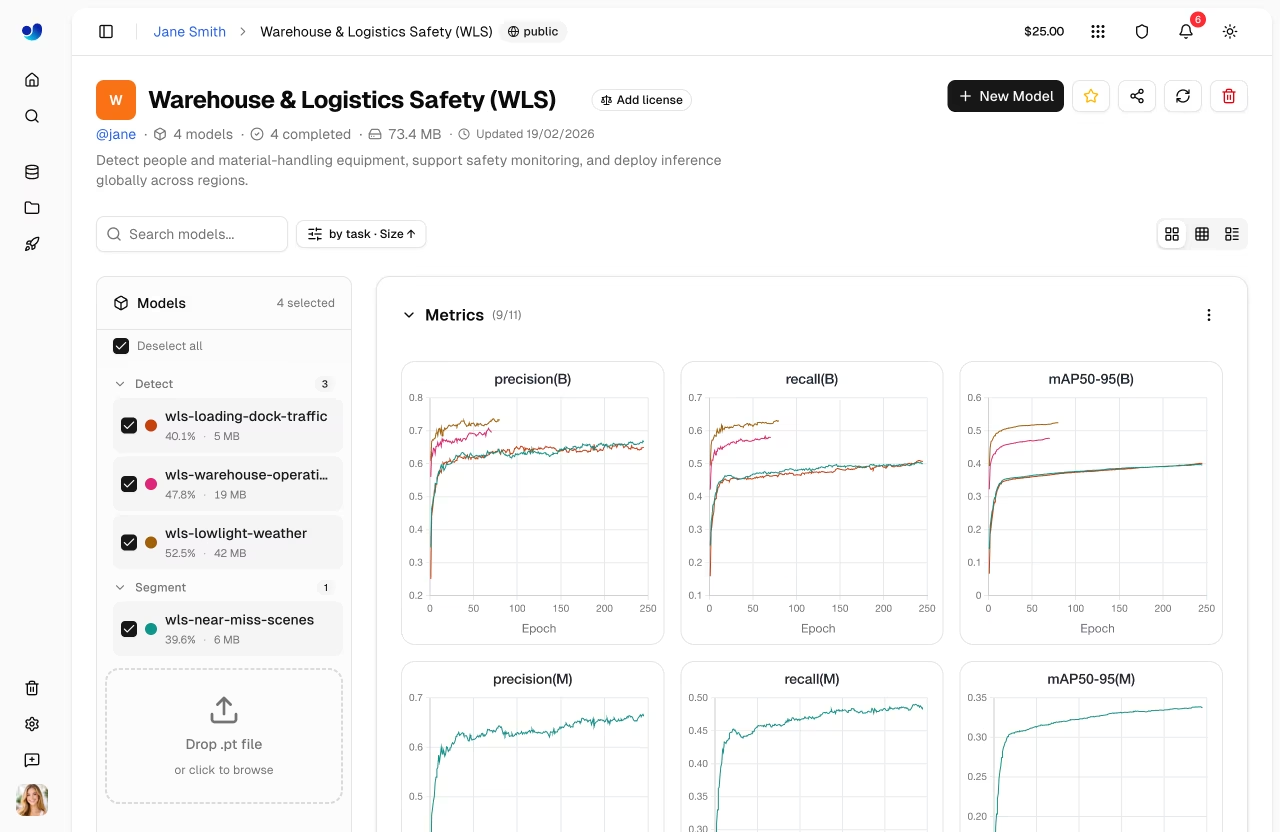

Compare runs side-by-side

Ultralytics YOLO writes a results.csv per run. A small helper to compare:

    import glob
    from pathlib import Path

    import pandas as pd

    rows = []
    for path in sorted(glob.glob("runs/detect/*/results.csv")):
        df = pd.read_csv(path)
        df.columns = df.columns.str.strip()  # some versions pad column names with spaces
        rows.append({
            "run": Path(path).parent.name,  # run folder name, on any OS
            "epochs": len(df),
            "best_mAP50_95": df["metrics/mAP50-95(B)"].max(),
            "final_box_loss": df["train/box_loss"].iloc[-1],
        })

    print(pd.DataFrame(rows).to_string(index=False))

When to start completely fresh

Sometimes the dataset has changed enough — added a class, relabeled half the data — that warming from your old best.pt actually hurts. The old weights know "this background is forklift territory" with old labels; new labels disagree. In that case start from the pretrained YOLO again:

    from ultralytics import YOLO

    model = YOLO("yolo26n.pt")  # back to vanilla
    model.train(data="my_dataset/data.yaml", epochs=100, name="v3_relabeled")

Take your trained model, add 20 more labeled images to the training set, and run a 30-epoch fine-tune with lr0=0.001. Compare mAP between v1 and v2; the change should be small but in the right direction.
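For the comparison step of this exercise, a standard-library sketch is enough. It assumes each run folder contains the results.csv described earlier, with a metrics/mAP50-95(B) column; best_map and map_delta are hypothetical helper names, and the two run folders at the bottom are examples to replace with your own.

```python
import csv
from pathlib import Path


def best_map(results_csv, column="metrics/mAP50-95(B)"):
    """Return the highest value of `column` across all epochs in a results.csv."""
    with open(results_csv, newline="") as f:
        # Strip header whitespace, since some versions pad column names.
        values = [
            float(value)
            for row in csv.DictReader(f)
            for key, value in row.items()
            if key is not None and key.strip() == column and value
        ]
    if not values:
        raise ValueError(f"column {column!r} not found or empty in {results_csv}")
    return max(values)


def map_delta(v1_csv, v2_csv):
    """Best mAP of v2 minus best mAP of v1; positive means v2 improved."""
    return best_map(v2_csv) - best_map(v1_csv)


# Example run folders (assumed names); the comparison only runs if both exist.
v1 = Path("runs/detect/forklift_v1/results.csv")
v2 = Path("runs/detect/forklift_v2_more_pallets/results.csv")
if v1.exists() and v2.exists():
    print(f"mAP50-95 change v1 -> v2: {map_delta(v1, v2):+.4f}")
```

This compares the best epoch of each run rather than the last one, which matches what best.pt represents. Keep in mind that a small difference on a small validation set may be within noise.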

- You've resumed an interrupted run at least once.
- You've started a fresh run from your best.pt with a lower learning rate.
- You've named your runs descriptively enough to compare them later.

Now let's apply the trained model to video — the format your real users probably care about.