Predict on an Image

Run a pretrained Ultralytics YOLO model and read the result object — boxes, confidences, classes.

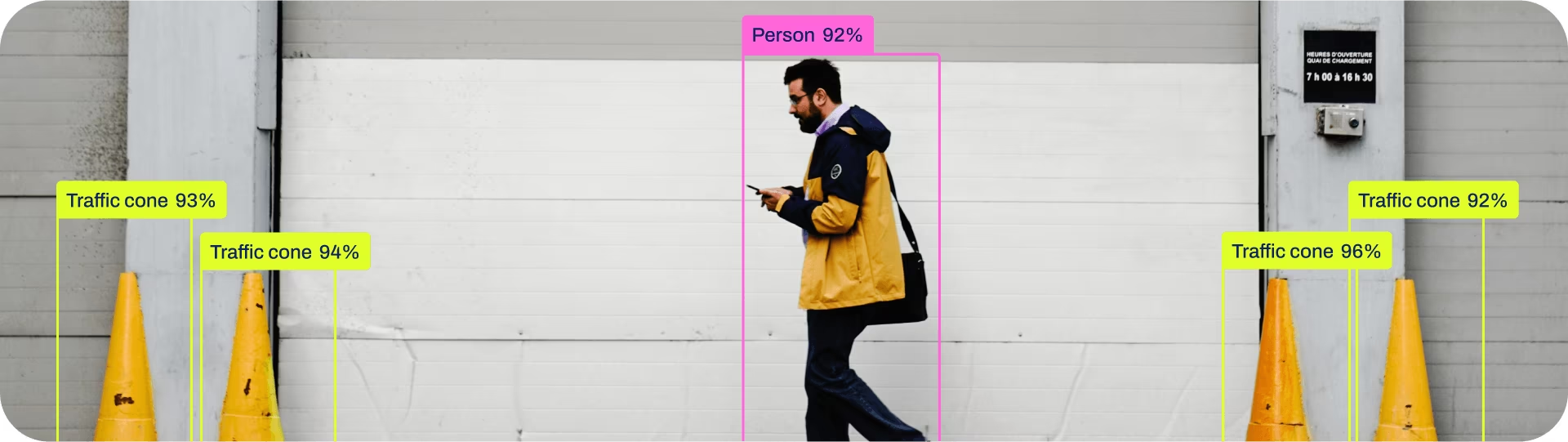

We installed Ultralytics YOLO; now let's actually use it. YOLO26 is the latest stable, recommended model family for new projects, and the pretrained yolo26n.pt detect model knows about 80 COCO classes. Even without any training of our own, that's enough to build something useful — and to get comfortable with Predict mode results before we go anywhere near training.

Run inference on an image in Python, iterate over result.boxes, and pull out class, confidence, and coordinates.

- from ultralytics import YOLO
- model = YOLO('yolo26n.pt')
- results = model('your_image.jpg')
- Iterate results[0].boxes: each box has .cls, .conf, .xyxy.

Hands-on

The simplest possible script

Five lines of code:

    from ultralytics import YOLO

    model = YOLO("yolo26n.pt")
    results = model("https://ultralytics.com/images/bus.jpg")
    results[0].show()  # opens a window with overlaid boxes
    results[0].save("annotated.jpg")

results is a list with one entry per image you pass in. Most of the time you'll be passing one image and reading results[0].

What's in a result

Each result object has several useful attributes — see the full surface in the Python usage guide:

| Attribute | What it holds |
|---|---|
| boxes | Detection boxes (the typical detection output) |
| masks | Segmentation masks (if you used a segment model) |
| keypoints | Pose keypoints (if you used a pose model) |
| names | Mapping from class index → class name |
| orig_img | The original image as a NumPy array |
| speed | Dict of per-stage timings in ms |
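The speed entry is easy to overlook, so here is a minimal sketch of reading it. It assumes the dict uses the keys preprocess, inference, and postprocess with values in milliseconds per image. The sample numbers are made up for illustration, and total_ms is a hypothetical helper, not part of Ultralytics.

```python
def total_ms(speed: dict) -> float:
    """Sum the per-stage timings (in ms) from a result's speed dict."""
    # Skip any stage that was not timed and is reported as None.
    return sum(v for v in speed.values() if v is not None)


# Illustrative numbers only; a real dict would come from results[0].speed.
sample_speed = {"preprocess": 2.0, "inference": 30.5, "postprocess": 1.5}

total = total_ms(sample_speed)
print(f"{total:.1f} ms per image, roughly {1000 / total:.0f} images per second")
```

With a real result you would call total_ms(results[0].speed) instead of using the sample dict.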

For object detection you're mostly working with bounding boxes:

    for box in results[0].boxes:
        cls_idx = int(box.cls)
        cls_name = results[0].names[cls_idx]
        confidence = float(box.conf)
        x1, y1, x2, y2 = box.xyxy[0].tolist()  # [4] tensor
        print(f"{cls_name} ({confidence:.2f}): ({x1:.0f},{y1:.0f}) → ({x2:.0f},{y2:.0f})")

Inference parameters that matter

The Predict mode defaults are good. Three knobs you'll touch most often:

| Argument | Default | What it does |
|---|---|---|
| conf | 0.25 | Minimum confidence to keep a detection |
| iou | 0.7 | NMS overlap threshold (IoU) for deduplicating boxes |
| imgsz | 640 | Inference resolution (must be a multiple of 32; usually 320, 640, or 1280) |
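To make the iou row concrete, here is a small sketch of the overlap measure itself, in plain Python rather than Ultralytics' internal implementation. The iou function and the two boxes are illustrative assumptions. The general idea behind NMS is that when two boxes overlap by more than the threshold, the lower-scoring one is dropped as a duplicate.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    # Corners of the overlapping rectangle, if there is one.
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# Two made-up boxes of equal size, shifted by half their width.
box_a = (0, 0, 100, 100)
box_b = (50, 0, 150, 100)
print(round(iou(box_a, box_b), 3))
```

These two boxes score about 0.33, so at the default iou=0.7 both would survive; a lower threshold such as 0.3 would treat them as duplicates.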

    results = model("warehouse.jpg", conf=0.4, iou=0.5, imgsz=1280)

Pass classes=[0, 2, 3] to keep only specific class indices. You can find indices in model.names.
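Since model.names maps index to name, looking up indices by name means inverting it. The sketch below does that with a hypothetical helper, class_indices, on a three-entry stand-in for the real mapping; it is an illustration, not an Ultralytics function.

```python
def class_indices(names: dict, wanted: list) -> list:
    """Return the sorted indices in names whose label appears in wanted."""
    # Invert the index -> name mapping so we can look up by name.
    lookup = {label: idx for idx, label in names.items()}
    missing = [w for w in wanted if w not in lookup]
    if missing:
        raise KeyError(f"unknown class names: {missing}")
    return sorted(lookup[w] for w in wanted)


# A three-entry stand-in for model.names; the COCO mapping has 80 entries.
names = {0: "person", 2: "car", 5: "bus"}
print(class_indices(names, ["bus", "person"]))
```

With a loaded model, the same helper could feed the classes argument: model("warehouse.jpg", classes=class_indices(model.names, ["person", "bus"])).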

Batched inference is free

If you have many images, pass a list. YOLO batches them on the GPU automatically — much faster than calling model() once per image:

    images = ["a.jpg", "b.jpg", "c.jpg"]
    for result in model(images):
        print(len(result.boxes), "detections in", result.path)

You can also pass a directory or a glob ("folder/*.jpg").

Dealing with no detections

results[0].boxes is iterable even when empty. If len(results[0].boxes) == 0 the model didn't find anything above your confidence threshold. The fix is usually one of:

- Lower conf: try 0.1 to see what's there.
- Increase imgsz: small objects need more pixels.
- Wrong model for the task: a pose model on a detection question, etc. (If your domain is narrower than COCO, you may also be looking at the wrong checkpoint. YOLO11 is the previous-generation default and still excellent.)
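The first fix, lowering conf, can be automated as a simple retry. The sketch below is one way to do it under stated assumptions: first_nonempty is a hypothetical helper, and fake_run stands in for a real model call so the example runs without any weights.

```python
from typing import Callable, Sequence


def first_nonempty(run: Callable[[float], Sequence], thresholds=(0.25, 0.1)):
    """Try each confidence threshold in order and return (conf, detections)
    for the first one that yields at least one detection."""
    last = ()
    for conf in thresholds:
        last = run(conf)
        if len(last) > 0:
            return conf, last
    # Nothing found even at the last threshold tried.
    return thresholds[-1], last


# Stand-in for a real model call: pretend the image holds one weak detection.
fake_scores = [0.18]


def fake_run(conf):
    return [s for s in fake_scores if s >= conf]


print(first_nonempty(fake_run))
```

With a loaded model, run could be lambda c: model("your_image.jpg", conf=c)[0].boxes, since len() works on the boxes object.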

Run predict.py on three of your own images. For each, print the number of detections and the top-3 classes by confidence. If you get zero detections, drop conf to 0.1 and try again — the model may know about your objects but isn't sure.
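For the top-3 part, one possible approach is to collect (name, confidence) pairs and sort them. The helper name top_k and the sample detections below are made up for illustration.

```python
def top_k(detections, k=3):
    """Return the k (name, confidence) pairs with the highest confidence."""
    return sorted(detections, key=lambda d: d[1], reverse=True)[:k]


# Made-up detections; with a real result you could build the list as
#   [(result.names[int(b.cls)], float(b.conf)) for b in result.boxes]
detections = [
    ("person", 0.87),
    ("bus", 0.94),
    ("person", 0.52),
    ("stop sign", 0.31),
]

for name, conf in top_k(detections):
    print(f"{name:>10s} {conf:.2f}")
```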

- You can run inference in Python and iterate results[0].boxes.
- You know which knob to turn (conf, iou, imgsz) for which problem.
- You can save annotated images programmatically.

Solution

    from ultralytics import YOLO


    def predict(image_path: str, conf: float = 0.25):
        model = YOLO("yolo26n.pt")
        result = model(image_path, conf=conf)[0]
        print(f"{image_path}: {len(result.boxes)} detections")
        for box in result.boxes:
            name = result.names[int(box.cls)]
            c = float(box.conf)
            print(f" {name:>10s} {c:.2f} {box.xyxy[0].tolist()}")
        return result


    if __name__ == "__main__":
        predict("https://ultralytics.com/images/bus.jpg")

We've predicted with the smallest model. Next: how to choose a model size, and the speed/accuracy tradeoff that decides it.