Cost and Latency Tuning

The handful of knobs that move the needle, and the ones that don't.

Once a system is in production, optimization becomes a search problem. The goal: lower cost (or inference latency) without losing more than X mAP. The wrong way is to flail through a hundred small changes. The right way is to know which knobs move the needle — quantization, pruning, knowledge distillation, batch size — and use them in order of leverage.

Pull the right lever first when latency or cost is over budget — and know when to stop.

Smaller model > smaller image size > FP16 > INT8 > batch tuning.

Drop image size before model size when small objects aren't the bottleneck.

Batch fewer streams at higher fps, not more streams at lower fps.

Stop optimizing when mAP loss exceeds your budget — go fix the data instead.

Hands-on

The lever leaderboard

Ordered from highest leverage to lowest. Try them in order and stop as soon as you meet the budget. The broader deployment practices guide is useful once this becomes an operating loop.

| Lever | Typical speedup | Typical mAP cost |
|---|---|---|
| Smaller model (m → s, s → n) | 2–4× | 3–8 points |
| Smaller imgsz (640 → 480) | ~1.8× | 1–3 points |
| FP16 export | 1.5–2× | < 0.5 points |
| INT8 export with calibration | 2–4× | 0.5–2 points |
| Larger batch (server) | 1.3–2× throughput | 0 |
| Frame skipping with tracker interpolation | 2–5× | small if tracker is good |
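The "try in order, stop when you meet the budget" rule can be sketched as a small planner. This is a minimal sketch under loud assumptions: speedups compose multiplicatively, mAP costs simply add up, and the per-lever numbers are your own estimates. The `Lever` type and `plan_levers` function are hypothetical helpers, and the values in the usage lines are rough illustrative picks from the table's ranges, not measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lever:
    name: str
    speedup: float   # estimated latency divisor, e.g. 2.0 for "2x faster"
    map_cost: float  # estimated mAP points lost


def plan_levers(baseline_ms: float, budget_ms: float, max_map_loss: float,
                levers: list[Lever]) -> tuple[list[str], float, float]:
    """Apply levers in leaderboard order until estimated latency fits the budget.

    Assumes speedups multiply and mAP costs add (both rough approximations).
    A lever that would push total mAP loss past the budget is skipped.
    Returns (chosen lever names, estimated latency in ms, estimated mAP loss).
    """
    chosen: list[str] = []
    latency, loss = baseline_ms, 0.0
    for lever in levers:
        if latency <= budget_ms:
            break  # budget met: stop pulling levers
        if loss + lever.map_cost > max_map_loss:
            continue  # too expensive in accuracy; try the next lever
        chosen.append(lever.name)
        latency /= lever.speedup
        loss += lever.map_cost
    return chosen, latency, loss


# Illustrative estimates only; replace with numbers from your own benchmarks.
levers = [
    Lever("smaller model", 2.0, 5.0),
    Lever("imgsz 640->480", 1.8, 2.0),
    Lever("FP16", 1.7, 0.3),
    Lever("INT8", 1.7, 1.0),  # applied on top of FP16
]
print(plan_levers(baseline_ms=60.0, budget_ms=25.0, max_map_loss=1.5, levers=levers))
```

If the returned latency is still over budget, the model levers are exhausted within your accuracy budget, which is the cue to work on the data instead.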

Knob-by-knob

Smaller model

The biggest single change. yolo26n is roughly 2× faster than yolo26s, which is roughly 2× faster than yolo26m, so going from m to n buys roughly 4×. If you can tolerate the mAP drop, start here.

When this works: narrow tasks where the model has lots of headroom. A 3-class warehouse detector might lose 1–2 mAP from m → n; an 80-class general detector might lose 5+.

Smaller image size

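imgsz=640 is the YOLO default. For fully convolutional models, compute scales roughly with pixel count, i.e. quadratically with the image side, so imgsz=480 processes (640/480)² ≈ 1.78× fewer pixels and runs up to about 1.8× faster, close to the 2× rule of thumb. The hit comes mostly on small objects.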
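The quadratic scaling is simple arithmetic: the ideal speedup is the squared ratio of the old and new image sides. A minimal sketch; `ideal_speedup` is a hypothetical helper, and measured speedups usually come in lower because decoding, pre/postprocessing, and fixed per-call overheads don't shrink with the image.

```python
def ideal_speedup(old_imgsz: int, new_imgsz: int) -> float:
    """Upper-bound speedup from shrinking a square input, assuming cost is proportional to pixel count."""
    return (old_imgsz / new_imgsz) ** 2


for size in (512, 480, 416, 320):
    print(f"640 -> {size}: up to {ideal_speedup(640, size):.2f}x")
```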

When this works: scenes where targets fill at least 5% of the image. When it doesn't: small / distant objects (drones, satellite, retail products on a shelf far from camera).
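To check the 5% rule against your own data before shrinking imgsz, you can count how many labeled boxes fall below it. A sketch assuming YOLO-format detection labels (one `class x_center y_center width height` row per object, normalized to 0–1); `small_target_fraction` and `read_label_lines` are hypothetical helpers, and the 5% area threshold is the rule of thumb from the text, not a hard limit.

```python
from collections.abc import Iterable
from pathlib import Path


def small_target_fraction(label_lines: Iterable[str], min_area_frac: float = 0.05) -> float:
    """Fraction of boxes covering less than `min_area_frac` of the image area."""
    total = small = 0
    for line in label_lines:
        parts = line.split()
        if len(parts) != 5:
            continue  # skip blank rows and non-box (e.g. polygon) rows
        w, h = float(parts[3]), float(parts[4])  # normalized width and height
        total += 1
        if w * h < min_area_frac:
            small += 1
    return small / total if total else 0.0


def read_label_lines(label_dir: str) -> list[str]:
    """Collect rows from every .txt label file in a directory."""
    lines: list[str] = []
    for label_file in sorted(Path(label_dir).glob("*.txt")):
        lines.extend(label_file.read_text().splitlines())
    return lines
```

Usage: `small_target_fraction(read_label_lines("labels/val"))` (the path is a placeholder). If a large share of boxes sits below the threshold, reach for a smaller model before a smaller image.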

Half precision

FP16 (half precision) is almost free on modern GPUs. Try it before more invasive optimizations such as INT8. The accuracy hit is under 0.5 mAP on most tasks, which is within noise.

INT8 quantization

The next step after FP16 if you need more speed. Calibration is critical — see lesson 3. INT8 buys you another 1.5–2× over FP16 with proper calibration; without it, mAP can crater.
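Why calibration matters can be shown with a toy per-tensor INT8 quantizer: the scale comes from the value range seen during calibration, and anything outside that range gets clipped. This is a deliberately simplified sketch (symmetric absmax calibration in pure Python); production toolchains such as TensorRT use more sophisticated calibrators, and the activation values below are made up for illustration.

```python
def calibrate_scale(calibration_values: list[float]) -> float:
    """Symmetric absmax calibration: map the largest observed magnitude to 127."""
    return max(abs(v) for v in calibration_values) / 127


def fake_quantize(x: float, scale: float) -> float:
    """Quantize to int8 with clipping, then dequantize back to float."""
    q = max(-127, min(127, round(x / scale)))
    return q * scale


def mean_abs_error(values: list[float], scale: float) -> float:
    return sum(abs(v - fake_quantize(v, scale)) for v in values) / len(values)


# Made-up activations standing in for what the model produces on real inputs.
activations = [0.1, -2.5, 3.9, 7.2, -6.8, 0.02]
good = calibrate_scale(activations)       # calibrated on representative data
bad = calibrate_scale([0.1, -0.3, 0.5])   # calibrated on unrepresentative data
print(mean_abs_error(activations, good), mean_abs_error(activations, bad))
```

With the unrepresentative calibration set, large activations are clipped to ±0.5 and the error is orders of magnitude larger, which is the mechanism behind "mAP can crater".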

Batch size

On the server, larger batches usually raise throughput at the cost of per-frame latency. Sweep batch=1, 2, 4, 8, 16 on your hardware and compare throughput per dollar: a larger batch is sometimes cheaper overall even though each frame waits longer.
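The sweep can be scripted as a small timing harness. This sketch assumes you wrap your own inference call in a hypothetical `run_batch(batch_size)` function and know the instance's hourly price; it reports per-batch latency, throughput, and frames per dollar for each batch size.

```python
import time
from typing import Callable


def sweep_batches(run_batch: Callable[[int], None], hourly_usd: float,
                  batch_sizes=(1, 2, 4, 8, 16), warmup: int = 3, iters: int = 20) -> list[dict]:
    """Time run_batch at each batch size and report throughput per dollar.

    run_batch must block until results are ready (e.g. synchronize the GPU),
    otherwise asynchronous execution makes the timings look too low.
    """
    rows = []
    for bs in batch_sizes:
        for _ in range(warmup):
            run_batch(bs)  # warm up kernels and caches before timing
        start = time.perf_counter()
        for _ in range(iters):
            run_batch(bs)
        latency_s = (time.perf_counter() - start) / iters  # seconds per batch
        fps = bs / latency_s
        rows.append({
            "batch": bs,
            "latency_ms": latency_s * 1000,
            "fps": fps,
            "frames_per_usd": fps * 3600 / hourly_usd,
        })
    return rows
```

Pick the batch size with the best `frames_per_usd` whose `latency_ms` still fits your per-frame latency budget.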

Frame skipping

If your detection rate is bounded by inference and your scene moves slowly, run detection every N frames and let the tracker interpolate boxes between detections. N=2 roughly doubles throughput and N=3 roughly triples it; for slow scenes the interpolated boxes stay close to the objects.
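Filling a skipped frame can be as simple as linear interpolation between two detections of the same track ID. A minimal sketch, assuming boxes are `(x1, y1, x2, y2)` tuples in pixels and that you keep each track's detections by frame index; `interpolate_box` is a hypothetical helper, and built-in trackers may instead predict positions with their own motion model (such as a Kalman filter).

```python
Box = tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def interpolate_box(box_a: Box, box_b: Box, frame_a: int, frame_b: int, frame: int) -> Box:
    """Linearly interpolate a track's box for a skipped frame between two detections."""
    if frame_b <= frame_a or not frame_a <= frame <= frame_b:
        raise ValueError("frame must lie between two distinct detection frames")
    t = (frame - frame_a) / (frame_b - frame_a)  # 0.0 at frame_a, 1.0 at frame_b
    return tuple(a + t * (b - a) for a, b in zip(box_a, box_b))


# Detections at frames 0 and 2 (N=2); frame 1 gets the midpoint box.
print(interpolate_box((100, 100, 200, 200), (110, 100, 210, 200), 0, 2, 1))
# (105.0, 100.0, 205.0, 200.0)
```

Interpolation needs the next detection, so it delays output by up to N−1 frames; a realtime pipeline that can't wait would extrapolate from the last detections instead.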

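```python
from ultralytics import YOLO

model = YOLO("yolo26n.pt")  # any detection weights
N = 2  # run detection on every Nth frame

# vid_stride makes the video loader skip frames, so inference and tracking
# only run on every Nth frame, which is where the throughput gain comes from.
for r in model.track("input.mp4", stream=True, persist=True, vid_stride=N):
    boxes = r.boxes  # process detections here; boxes.id holds track IDs (None if nothing is tracked)
```

When to stop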

A common failure: spend two weeks optimizing the model, save 10 ms, and ship a system that's still slow because the data pipeline is the bottleneck. Use Benchmark mode on the target hardware so you're tuning real numbers, not vibes.

Profile the system end-to-end before optimizing the model. Common surprises:

- 60 ms decoding RTSP, 5 ms model inference. Optimize the decode.

- 30 ms model, 100 ms postprocessing in Python. Vectorize it with NumPy or move it to a worker.

- 5 ms model, 200 ms writing to a slow database. Batch the writes.

The model is rarely the bottleneck after FP16. Optimize what's actually slow.
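A per-stage timer is enough to tell which of these cases you are in. A minimal stdlib sketch; the `StageTimer` class and the stage names are hypothetical, and in practice you would wrap your real decode, inference, postprocess, and write calls. For GPU stages, make sure the timed call waits for results, since asynchronous kernels otherwise look artificially fast.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class StageTimer:
    """Accumulate wall-clock time per pipeline stage."""

    def __init__(self) -> None:
        self.totals: defaultdict[str, float] = defaultdict(float)
        self.counts: defaultdict[str, int] = defaultdict(int)

    @contextmanager
    def stage(self, name: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            self.totals[name] += time.perf_counter() - start
            self.counts[name] += 1

    def report(self) -> list[tuple[str, float]]:
        """Mean milliseconds per call for each stage, slowest first."""
        means = [(n, 1000 * self.totals[n] / self.counts[n]) for n in self.totals]
        return sorted(means, key=lambda item: item[1], reverse=True)


timer = StageTimer()
for _ in range(3):
    with timer.stage("decode"):
        time.sleep(0.002)  # stand-in for reading a frame from the stream
    with timer.stage("inference"):
        time.sleep(0.001)  # stand-in for the model call
print(timer.report())
```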

When you've exhausted all model levers, the next lever is data, not architecture. A dataset that better matches production lets you drop a model size and keep the same mAP — a true Pareto improvement. This is where most teams should be spending optimization budget.

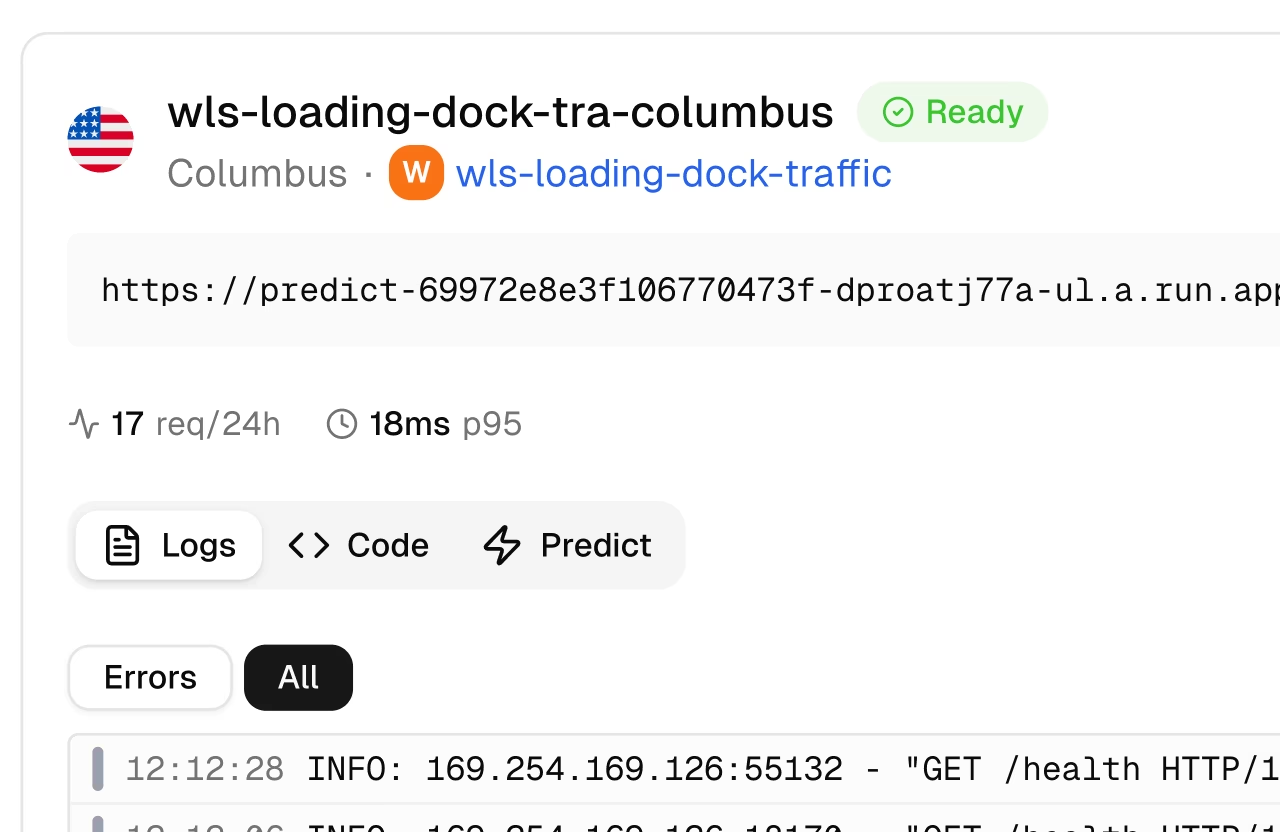

Cost levers in the cloud

Cost per frame ≈ instance price per hour ÷ (peak frames per hour × utilization). To cut cost:

- Higher utilization. A GPU at 30% utilization is 70% wasted. Multi-stream batching — and serving via Triton Inference Server — pushes utilization up.

- Smaller instances. A T4 might be enough for what you're running on an A100. Benchmark and downsize. For CPU-only fleets, OpenVINO or Edge AI deployments can take you off cloud GPUs entirely.

- Spot / preemptible. For batch processing (not realtime), spot instances are 60–90% cheaper.

- Right region. GPU prices can vary by 2× across regions. Keep processing close to the cameras for latency, but compare prices among the regions that qualify.
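The cost relationship can be made concrete as dollars per thousand frames. A sketch assuming you know the instance's hourly price and its measured peak throughput; `cost_per_1k_frames` is a hypothetical helper and the numbers in the usage lines are placeholders, not price quotes.

```python
def cost_per_1k_frames(hourly_usd: float, peak_fps: float, utilization: float) -> float:
    """Dollars per 1,000 frames for an instance running at a given average utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    frames_per_hour = peak_fps * utilization * 3600
    return 1000 * hourly_usd / frames_per_hour


# Placeholder numbers: the same instance at 30% vs. 90% utilization.
print(cost_per_1k_frames(1.0, peak_fps=200, utilization=0.3))
print(cost_per_1k_frames(1.0, peak_fps=200, utilization=0.9))
```

Each bullet maps to one term: spot pricing and cheaper regions lower `hourly_usd`, batching and multi-stream serving raise `utilization`, and a smaller instance trades a lower `hourly_usd` against a lower `peak_fps`.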

The optimization budget

Set a budget before you start: "I'll spend 2 days on optimization, accept up to 1 mAP loss, and ship if I hit X latency at Y cost." Without a budget, optimization expands to fill the available time.

Pick one lever from the leaderboard. Apply it. Re-run validation and benchmarks. Compare against your previous numbers. The only winning move is to take measurements before and after.
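The before/after comparison can be written as an explicit gate so the budget is enforced mechanically rather than by feel. A sketch; the `Measurement` and `Budget` types, their fields, and `check` are hypothetical, and the numbers you feed them should come from your own validation and benchmark runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Measurement:
    map50_95: float    # from validation; keep units consistent (e.g. mAP points)
    latency_ms: float  # from benchmarks on the target hardware
    usd_per_1k: float  # cost per 1,000 frames


@dataclass(frozen=True)
class Budget:
    max_map_loss: float  # same units as map50_95
    target_latency_ms: float
    target_usd_per_1k: float


def check(baseline: Measurement, candidate: Measurement, budget: Budget) -> str:
    """Return 'ship', 'keep going', or 'stop' for a candidate optimization."""
    if baseline.map50_95 - candidate.map50_95 > budget.max_map_loss:
        return "stop"  # accuracy budget blown: revert and go fix the data
    if (candidate.latency_ms <= budget.target_latency_ms
            and candidate.usd_per_1k <= budget.target_usd_per_1k):
        return "ship"
    return "keep going"  # within the accuracy budget, targets not met yet
```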

```bash
git add -A && git commit -m "perf(production): tuned model + runtime for target latency budget"
```

You can name the lever order and what each one costs in mAP.

You've measured your bottleneck — model, decode, or postprocess — end-to-end.

You've set an optimization budget and stopped at it.

Course complete. The next course is Build with Ultralytics Platform, which puts everything you've done into a managed end-to-end system.