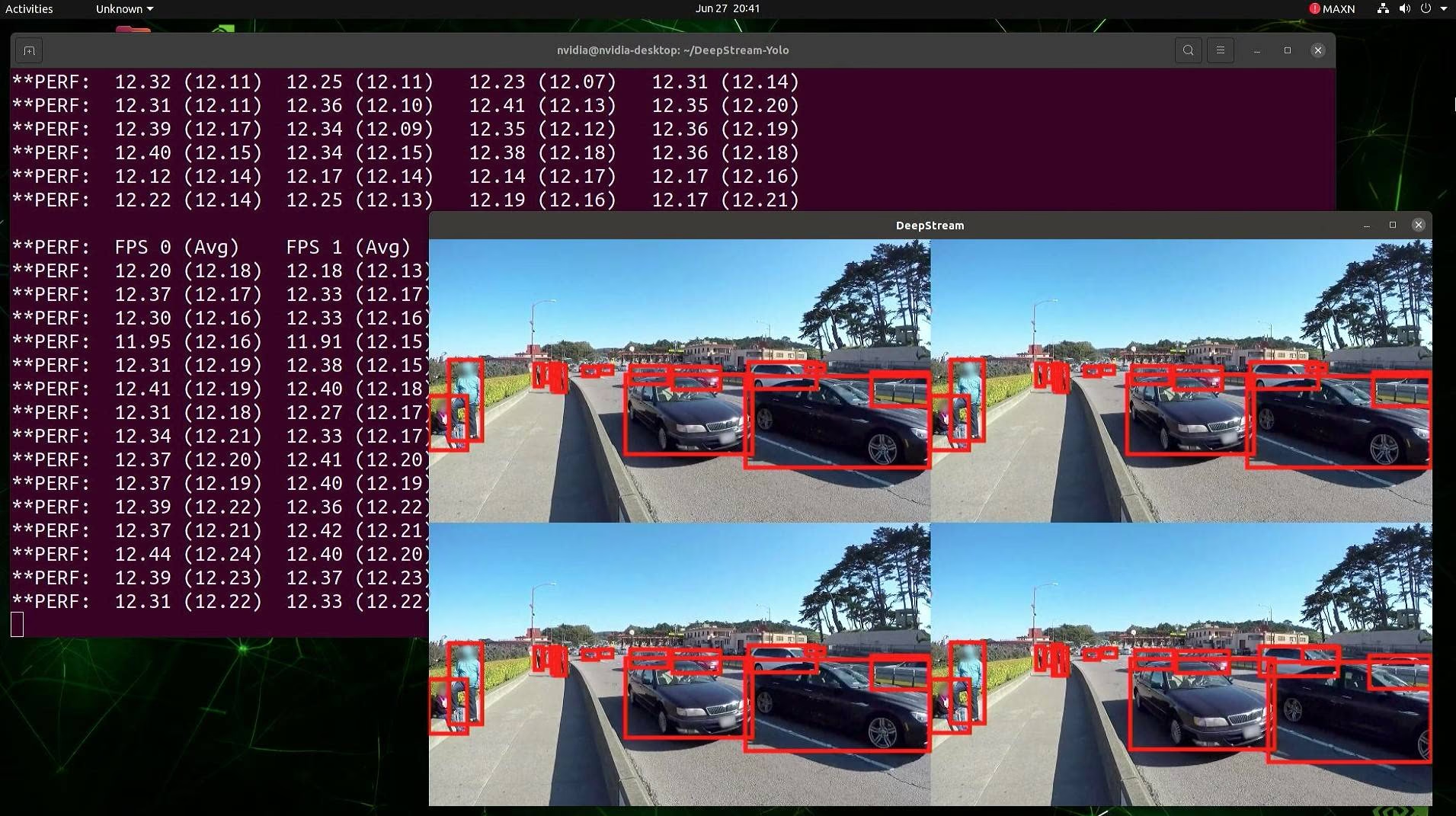

Multi-Stream Inference

Run multiple cameras concurrently without dropping frames.

Production computer vision is rarely one camera. It's 4, or 16, or 100. Naïvely calling model(stream) per camera in a Python loop maxes out a single CPU core and falls behind. The right architecture batches across cameras (a batch size tuning problem), decodes in parallel, and keeps the GPU saturated for real-time inference.

Run 4 or more concurrent video streams through one model and keep up with all of them.

Decode in worker threads/processes — never inline.

Batch frames from multiple cameras into one model call.

One Ultralytics YOLO instance, many sources, single GPU.

Profile until the bottleneck is the GPU, not the decode or the IO.

Hands-on

Where the time goes

Per-frame work in a multi-stream system:

- Decode — RTSP / file → numpy array. ~5–20 ms per frame on CPU.

- Preprocess — resize, letterbox, normalize. ~1–3 ms.

- Inference — model forward + NMS. ~5–30 ms depending on model and GPU.

- Postprocess + downstream — tracking, counting, sinks. ~1–5 ms.

If you run decode → preprocess → inference → postprocess serially, per frame and per camera, in one thread, the GPU sits idle most of the time waiting for decode. The fix is concurrency; the thread-safe inference guide covers the failure modes to avoid.
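To make the cost of the serial loop concrete, here is a rough back-of-the-envelope calculation. The stage timings are assumed values picked from inside the ranges above, not measurements, and the function name `serial_budget` is hypothetical.

```python
def serial_budget(decode_ms, preprocess_ms, inference_ms, postprocess_ms, n_cameras):
    """Estimate per-camera fps and GPU utilization for a single-threaded serial loop."""
    per_frame_ms = decode_ms + preprocess_ms + inference_ms + postprocess_ms
    total_fps = 1000.0 / per_frame_ms        # frames/s the one thread can process
    per_camera_fps = total_fps / n_cameras   # shared evenly across cameras
    gpu_busy = inference_ms / per_frame_ms   # fraction of wall time spent in inference
    return per_camera_fps, gpu_busy


# Assumed timings (ms): decode 10, preprocess 2, inference 10, postprocess 3.
fps, gpu_busy = serial_budget(10, 2, 10, 3, n_cameras=4)
print(f"{fps:.1f} fps per camera, GPU busy {gpu_busy:.0%}")
# prints "10.0 fps per camera, GPU busy 40%"
```

Under these assumptions, four cameras each get about 10 fps while the GPU is idle 60% of the time, which is the gap that parallel decode and batching are meant to close.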

Architecture: producers + batched consumer

```
┌─────────┐ ┌─────────┐ ┌─────────┐ ┌─────────┐
│cam 1 dec│ │cam 2 dec│ │cam 3 dec│ │cam 4 dec│  ← thread per camera
└────┬────┘ └────┬────┘ └────┬────┘ └────┬────┘
     ▼           ▼           ▼           ▼
┌─────────────────────────────────────────────┐
│            frame queue (bounded)            │
└──────────────────────┬──────────────────────┘
                       ▼
┌─────────────────────────────────────────────┐
│            model.predict(batch)             │  ← single GPU consumer
└──────────────────────┬──────────────────────┘
                       ▼
┌─────────────────────────────────────────────┐
│       per-stream postprocess + sinks        │
└─────────────────────────────────────────────┘
```

Built-in batched streams

Ultralytics has a built-in shortcut: pass a list of sources and stream=True to Predict mode.

```python
from ultralytics import YOLO

model = YOLO("yolo26n.engine")  # use the optimized engine

# Note: some Ultralytics releases expect multiple streams as a `.streams` text file
# (one URL per line) rather than a Python list; check the Predict docs for your version.
sources = [
    "rtsp://camera-1.local/stream",
    "rtsp://camera-2.local/stream",
    "rtsp://camera-3.local/stream",
    "rtsp://camera-4.local/stream",
]

for results in model.track(sources, stream=True, persist=True):
    # `results` is a list, one entry per source
    for stream_idx, r in enumerate(results):
        for box in r.boxes:
            # process box; box.id is per-stream-and-class unique
            # (it is None when the tracker has not assigned an ID)
            pass
```

Under the hood, Ultralytics opens a thread per source, decodes in parallel, and batches frames into one model call when they arrive close in time. Pair this with a TensorRT or OpenVINO engine for the best per-stream throughput.

When you need a custom architecture

The built-in is great up to ~16 streams. Above that, or when you have non-trivial postprocessing (DB writes, network sinks), a custom architecture wins:

- Decode in subprocesses for true parallelism (Python GIL doesn't matter for native decode but does for any Python work in the loop).

- Use a frame queue with a max size. If decode is faster than inference, drop old frames rather than blow memory.

- Pin the inference thread to one GPU and pre-load the engine.

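```python
import queue
import threading

import cv2
from ultralytics import YOLO

# Same placeholder sources as in the built-in example; replace with your own.
sources = [
    "rtsp://camera-1.local/stream",
    "rtsp://camera-2.local/stream",
    "rtsp://camera-3.local/stream",
    "rtsp://camera-4.local/stream",
]

MAX_BATCH = 8  # the engine must accept batches this large (matching batch size or dynamic shapes)
frame_queue: queue.Queue = queue.Queue(maxsize=64)
stop = threading.Event()


def decoder(source, stream_id):
    """Read frames from one source; on overflow, evict the oldest queued frame."""
    cap = cv2.VideoCapture(source)
    try:
        while not stop.is_set():
            ok, frame = cap.read()
            if not ok:
                break  # end of file or lost connection
            try:
                frame_queue.put_nowait((stream_id, frame))
            except queue.Full:
                # Consumer is behind: discard the oldest frame to make room.
                try:
                    frame_queue.get_nowait()
                except queue.Empty:
                    pass
                try:
                    frame_queue.put_nowait((stream_id, frame))
                except queue.Full:
                    pass  # another decoder took the slot; drop this frame
    finally:
        cap.release()


def consumer():
    """Pull up to MAX_BATCH frames at a time and run them as one batch."""
    model = YOLO("yolo26n.engine")
    while not stop.is_set():
        batch, ids = [], []
        for _ in range(MAX_BATCH):
            try:
                sid, f = frame_queue.get(timeout=0.05)
            except queue.Empty:
                break
            ids.append(sid)
            batch.append(f)
        if not batch:
            continue
        results = model(batch, verbose=False)
        for sid, r in zip(ids, results):
            # postprocess per stream
            pass


threads = [threading.Thread(target=decoder, args=(src, i), daemon=True) for i, src in enumerate(sources)]
for t in threads:
    t.start()
consumer_thread = threading.Thread(target=consumer, daemon=True)
consumer_thread.start()

try:
    for t in threads:
        t.join()  # returns once every source has ended
except KeyboardInterrupt:
    pass
finally:
    stop.set()  # tell the consumer (and any live decoders) to exit
    consumer_thread.join()
```

If inference can't keep up, queueing every frame adds latency that never recovers. Set a max queue size, drop the oldest frames, and measure the drop rate. Real-time CV systems should drop frames during overload, not lag.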
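The drop-oldest policy with a measurable drop rate can be sketched as a small buffer class. This is a minimal illustration, not part of Ultralytics: `DropOldestBuffer` and its method names are hypothetical, and `get_batch` is non-blocking, so a real consumer would sleep briefly when it returns an empty list.

```python
import threading
from collections import deque


class DropOldestBuffer:
    """Bounded, thread-safe frame buffer that evicts the oldest item when full."""

    def __init__(self, maxsize):
        self._items = deque()
        self._maxsize = maxsize
        self._lock = threading.Lock()
        self.put_count = 0
        self.drop_count = 0

    def put(self, item):
        with self._lock:
            if len(self._items) >= self._maxsize:
                self._items.popleft()  # evict the oldest frame
                self.drop_count += 1
            self._items.append(item)
            self.put_count += 1

    def get_batch(self, max_items):
        """Remove and return up to max_items items, oldest first."""
        with self._lock:
            n = min(max_items, len(self._items))
            return [self._items.popleft() for _ in range(n)]

    def drop_rate(self):
        """Fraction of all frames put so far that were evicted unprocessed."""
        with self._lock:
            return self.drop_count / self.put_count if self.put_count else 0.0


buf = DropOldestBuffer(maxsize=3)
for frame_no in range(5):
    buf.put(("cam0", frame_no))
print(buf.drop_rate())   # 0.4: two of five frames were evicted
print(buf.get_batch(8))  # [('cam0', 2), ('cam0', 3), ('cam0', 4)]
```

Logging `drop_rate()` periodically gives a direct overload signal: a rate that stays near zero means inference is keeping up, while a sustained non-zero rate means the system is shedding load as intended.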

Capacity planning

Rough rule of thumb on a single A10/T4 GPU at 640×640:

- yolo26n FP16 engine: ~50–80 streams at 5–10 fps.

- yolo26s FP16 engine: ~25–40 streams at 5–10 fps.

- yolo26m FP16 engine: ~12–20 streams.

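For higher fps, divide proportionally: doubling the frame rate roughly halves the number of streams. For higher resolution (1280×1280), divide by about 4, since each frame has four times the pixels. Always benchmark on the real hardware before sizing the fleet. For larger fleets, Triton Inference Server and DeepStream on Jetson are the natural production homes.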
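The scaling rule above can be written as a small estimate. This is only a first-order sketch: the function name `estimate_streams` is hypothetical, the baseline of 50 streams at 10 fps is an assumed figure taken from within the yolo26n range above, and real throughput rarely scales perfectly linearly.

```python
def estimate_streams(base_streams, base_fps, target_fps, base_imgsz=640, target_imgsz=640):
    """Scale a benchmarked stream count linearly with fps and with pixel count."""
    fps_factor = target_fps / base_fps
    pixel_factor = (target_imgsz / base_imgsz) ** 2
    return int(base_streams / (fps_factor * pixel_factor))


# Assumed baseline: 50 streams at 10 fps and 640x640.
print(estimate_streams(50, base_fps=10, target_fps=30))                      # 16
print(estimate_streams(50, base_fps=10, target_fps=10, target_imgsz=1280))  # 12
```

Treat the result as a starting point for the benchmark, not a replacement for it.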

Run 4 video files (or 4 RTSP cams) through model.track(sources, stream=True). Measure end-to-end fps per stream. Try doubling to 8 sources. Note where it stops keeping up.
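One way to take the per-stream measurement in this exercise is a small counter keyed by stream index. The class below is a hypothetical helper, not an Ultralytics API; it assumes you call `tick(stream_idx)` once for every result you handle for that stream.

```python
import time
from collections import defaultdict


class StreamFpsMeter:
    """Count processed frames per stream and report average fps since the first tick."""

    def __init__(self, clock=time.perf_counter):
        self._clock = clock
        self._start = {}
        self._count = defaultdict(int)

    def tick(self, stream_id):
        now = self._clock()
        self._start.setdefault(stream_id, now)  # remember when this stream was first seen
        self._count[stream_id] += 1

    def fps(self, stream_id):
        elapsed = self._clock() - self._start[stream_id]
        # The first tick only starts the timer, so n frames span n - 1 intervals.
        return (self._count[stream_id] - 1) / elapsed if elapsed > 0 else 0.0


meter = StreamFpsMeter()
for _ in range(5):
    meter.tick(stream_id=0)  # in the real loop: once per result for this stream
    time.sleep(0.01)         # stand-in for inference and postprocessing time
print(f"stream 0: {meter.fps(0):.1f} fps")
```

Comparing each stream's measured fps against the camera's native frame rate shows directly when the pipeline stops keeping up as you add sources.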

You can run 4+ sources through a single model and read per-stream results.

You've measured your effective per-stream fps on real hardware.

Your architecture drops frames cleanly under overload instead of growing memory.

We can run at scale. Now: monitoring it well enough to notice when something goes wrong.