OpenVINO on CPU

Real CPU speedups via Intel's optimized kernels — useful when GPUs aren't an option.

Lots of production computer vision runs on CPU — VMs without GPUs, embedded x86 boxes, dev laptops. ONNX Runtime is fine; OpenVINO is usually noticeably faster on Intel CPUs because it uses kernels tuned for the exact instruction set (AVX-512, AMX) on the chip.

Export to OpenVINO and confirm a speedup over plain PyTorch CPU inference.

- model.export(format='openvino').

- yolo benchmark to compare against PyTorch CPU.

- FP16 (half=True) is usually free on modern Intel CPUs with AVX-512.

Hands-on

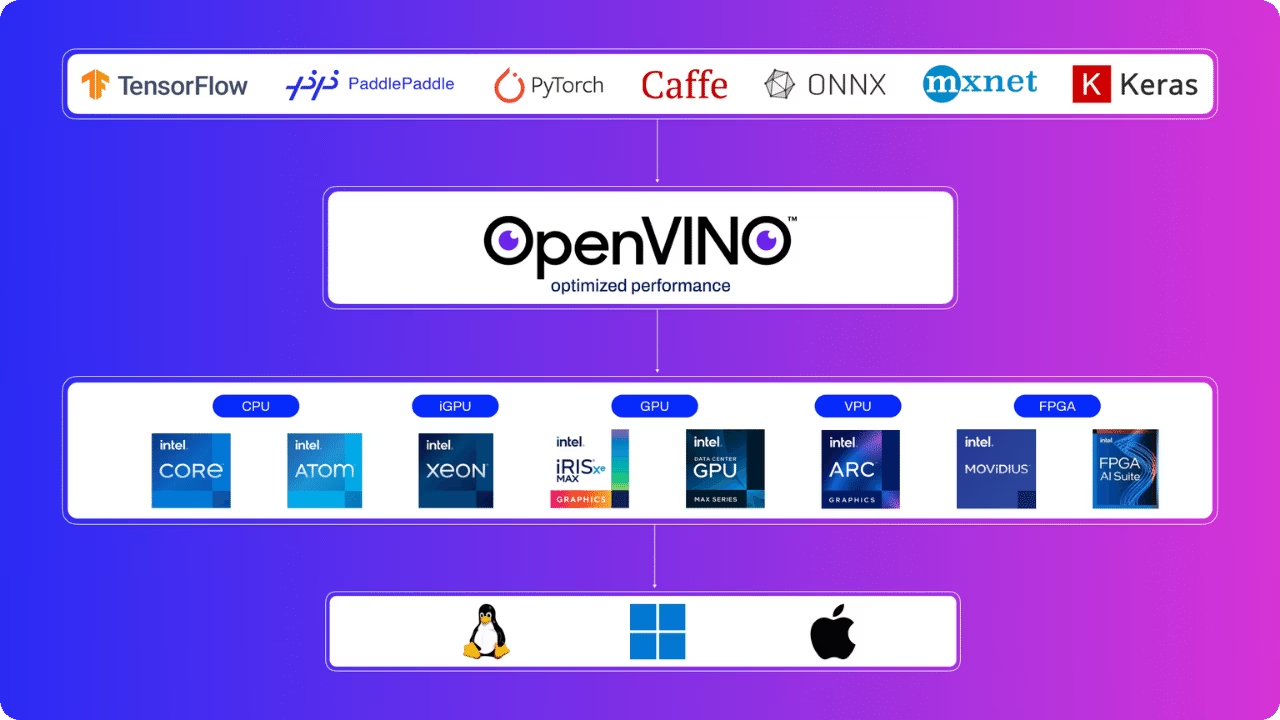

Why OpenVINO over ONNX Runtime on Intel

Both work. OpenVINO consistently wins on Intel CPUs because:

- Kernels target specific instruction sets (AVX2, AVX-512, AMX on newer Xeons).

- Layer fusion patterns are tuned for Intel's pipeline depth and cache.

- INT8 quantization on CPU is mature and well-supported.

On AMD or non-x86 CPUs, ONNX Runtime is usually equal or better. The latency vs throughput modes guide is required reading once you start tuning.
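To see which of these instruction sets a given Linux machine actually exposes, one option is to read the flags line from /proc/cpuinfo. The sketch below is an illustration only and is not part of OpenVINO or Ultralytics: the helper names are hypothetical, the flag names (avx2, avx512f, avx512_vnni, amx_tile) are the ones the Linux kernel reports on x86, and the file only exists on Linux.

```python
from pathlib import Path

# Linux /proc/cpuinfo flag names for the instruction sets mentioned above.
FLAGS_OF_INTEREST = {
    "AVX2": "avx2",
    "AVX-512": "avx512f",
    "AVX-512 VNNI (INT8)": "avx512_vnni",
    "AMX": "amx_tile",
}


def parse_cpu_flags(cpuinfo_text: str) -> set[str]:
    """Return the flags from the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()  # no 'flags' line (e.g. non-x86 CPU)


def supported_instruction_sets(cpuinfo_text: str) -> dict[str, bool]:
    """Map each instruction set of interest to whether the CPU reports it."""
    flags = parse_cpu_flags(cpuinfo_text)
    return {name: flag in flags for name, flag in FLAGS_OF_INTEREST.items()}


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():  # Linux only
        report = supported_instruction_sets(cpuinfo.read_text())
        for name, present in report.items():
            print(f"{name}: {'yes' if present else 'no'}")
```

If AVX-512 and AMX both come back "no", expect a smaller gap between OpenVINO and ONNX Runtime than on a recent Xeon.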

Export

    from ultralytics import YOLO

    model = YOLO("runs/detect/forklift_v1/weights/best.pt")
    model.export(
        format="openvino",
        half=True,   # FP16
        imgsz=640,
        int8=False,  # see below for INT8
    )

That writes a directory best_openvino_model/ with best.xml (the graph) and best.bin (the weights).
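Before benchmarking, it can be worth confirming that the export directory really contains a graph file and a matching weights file. The following is a minimal sketch using only the standard library; check_openvino_export is a hypothetical helper, not an Ultralytics or OpenVINO function, and it checks only that the files exist and are non-empty, not that the model loads.

```python
from pathlib import Path


def check_openvino_export(export_dir: str) -> list[str]:
    """Return a list of problems found in an exported OpenVINO model directory."""
    root = Path(export_dir)
    if not root.is_dir():
        return [f"{root} is not a directory"]

    problems = []
    xml_files = sorted(root.glob("*.xml"))
    if not xml_files:
        problems.append("no .xml graph file found")
    for xml_file in xml_files:
        # Each graph file needs a weights file with the same stem.
        bin_file = xml_file.with_suffix(".bin")
        if not bin_file.is_file():
            problems.append(f"missing weights file {bin_file.name}")
        elif bin_file.stat().st_size == 0:
            problems.append(f"weights file {bin_file.name} is empty")
    return problems
```

Call it with the path of the exported directory. An empty list means both files are in place.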

Benchmark side by side

Benchmark mode is the cleanest way to compare runtimes:

    yolo benchmark model=runs/detect/forklift_v1/weights/best.pt imgsz=640

That sweeps several formats on the current machine. On a typical Intel laptop you'll see something like:

| Format | mAP@0.5 | mAP@0.5:0.95 | Inference (ms) |
|---|---|---|---|
| PyTorch | 0.81 | 0.58 | 65 |
| ONNX | 0.81 | 0.58 | 48 |
| OpenVINO FP32 | 0.81 | 0.58 | 24 |
| OpenVINO FP16 | 0.81 | 0.58 | 18 |
| OpenVINO INT8 | 0.79 | 0.55 | 12 |

Numbers will vary by CPU and image; the ratios are what to expect.
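Since the ratios matter more than the absolute numbers, it helps to turn the latency column into speedup multipliers. The sketch below uses the illustrative latencies from the table above; the speedups helper is a hypothetical name, and you would substitute the numbers from your own benchmark run.

```python
# Latencies in ms per image, copied from the illustrative table above.
latency_ms = {
    "PyTorch": 65,
    "ONNX": 48,
    "OpenVINO FP32": 24,
    "OpenVINO FP16": 18,
    "OpenVINO INT8": 12,
}


def speedups(latencies: dict[str, float], baseline: str = "PyTorch") -> dict[str, float]:
    """Speedup of each format relative to the baseline (baseline / format latency)."""
    base = latencies[baseline]
    return {name: base / ms for name, ms in latencies.items()}


for name, factor in speedups(latency_ms).items():
    print(f"{name:<14} {factor:.2f}x")
```

With the table's numbers this works out to about 2.7x for OpenVINO FP32 and 3.6x for OpenVINO FP16 over PyTorch.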

INT8 on CPU

INT8 is more attractive on CPU than on GPU, because most CPUs lack the FP16 / mixed-precision hardware that GPUs have, while recent Intel CPUs do have dedicated INT8 instructions (VNNI, and AMX on newer Xeons). The same calibration story applies, so calibrate with production-like data:

    model.export(
        format="openvino",
        int8=True,
        data="my_dataset/data.yaml",
    )

By default OpenVINO picks thread counts dynamically. For a server running multiple model instances (one per camera stream), pin thread counts per instance with OMP_NUM_THREADS or OpenVINO's runtime configuration (see the thread-safe inference guide). Otherwise the instances compete for the same cores and total throughput drops.
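As a rough illustration of per-instance pinning, the sketch below splits the machine's cores evenly across instances and builds an environment with OMP_NUM_THREADS set for each worker process. Both helper names and the stream count are hypothetical. Whether OMP_NUM_THREADS is honored depends on how the runtime was built, so verify the effect on your setup or use OpenVINO's own runtime configuration instead.

```python
import os


def threads_per_instance(total_cores: int, n_instances: int, reserved: int = 0) -> int:
    """Split the available cores evenly across instances, at least one thread each."""
    if n_instances < 1:
        raise ValueError("n_instances must be at least 1")
    usable = max(total_cores - reserved, 1)
    return max(usable // n_instances, 1)


def worker_env(n_threads: int) -> dict[str, str]:
    """Copy of the current environment with OMP_NUM_THREADS set for one worker."""
    env = dict(os.environ)
    env["OMP_NUM_THREADS"] = str(n_threads)
    return env


if __name__ == "__main__":
    cores = os.cpu_count() or 1  # logical cores; physical cores may be a better budget
    n_streams = 4  # hypothetical: one model instance per camera stream
    n = threads_per_instance(cores, n_streams)
    print(f"{cores} cores / {n_streams} instances -> {n} threads each")
    # Pass worker_env(n) as env= to subprocess.Popen when launching each worker.
```

The reserved argument leaves headroom for video decoding and other non-inference work running on the same box.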

When to skip OpenVINO

Skip if:

- Your CPU isn't Intel.

- You only have a few cameras and PyTorch is already fast enough.

- Cross-platform builds matter more than peak speed (then ONNX wins).

For most other Intel-CPU deployments, OpenVINO is close to a free lunch: roughly 2–3× lower inference latency than PyTorch for the cost of one export call.

Export your model to OpenVINO and run yolo benchmark to compare against PyTorch and ONNX on the same machine. Note the speedup multiplier.

- OpenVINO export builds without errors on your machine.

- Validation mAP is within 0.5 percentage points of the source (0.005 on the 0–1 scale used in the table).

- You've documented the speedup over PyTorch CPU on your hardware.
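The accuracy and speedup checks can be recorded with a few lines of Python. This is a sketch under one assumption: the 0.5 tolerance is read as 0.5 percentage points, which is 0.005 on the 0–1 mAP scale. The function name is hypothetical, and the sample numbers are the illustrative ones from the benchmark table, not measurements.

```python
def export_passes(source_map: float, exported_map: float,
                  source_ms: float, exported_ms: float,
                  max_map_drop: float = 0.005) -> dict[str, object]:
    """Summarise accuracy drop and speedup of an exported model against its source."""
    map_drop = source_map - exported_map
    return {
        "map_drop": round(map_drop, 4),
        # Small epsilon so a drop of exactly max_map_drop is not rejected by float error.
        "map_ok": map_drop <= max_map_drop + 1e-9,
        "speedup": round(source_ms / exported_ms, 2),
    }


# Illustrative values: PyTorch vs OpenVINO FP16, then PyTorch vs OpenVINO INT8.
print(export_passes(0.58, 0.58, 65, 18))
print(export_passes(0.58, 0.55, 65, 12))
```

With those sample values the FP16 export passes the accuracy check, while the INT8 export is faster but falls outside the tolerance, which is the trade-off to document.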

Detections in single frames are the building block. Next: tracking — turning detections into persistent objects.