Export to ONNX (and Verify Parity)

The portable starting point — and how to confirm the export didn't quietly break the model.

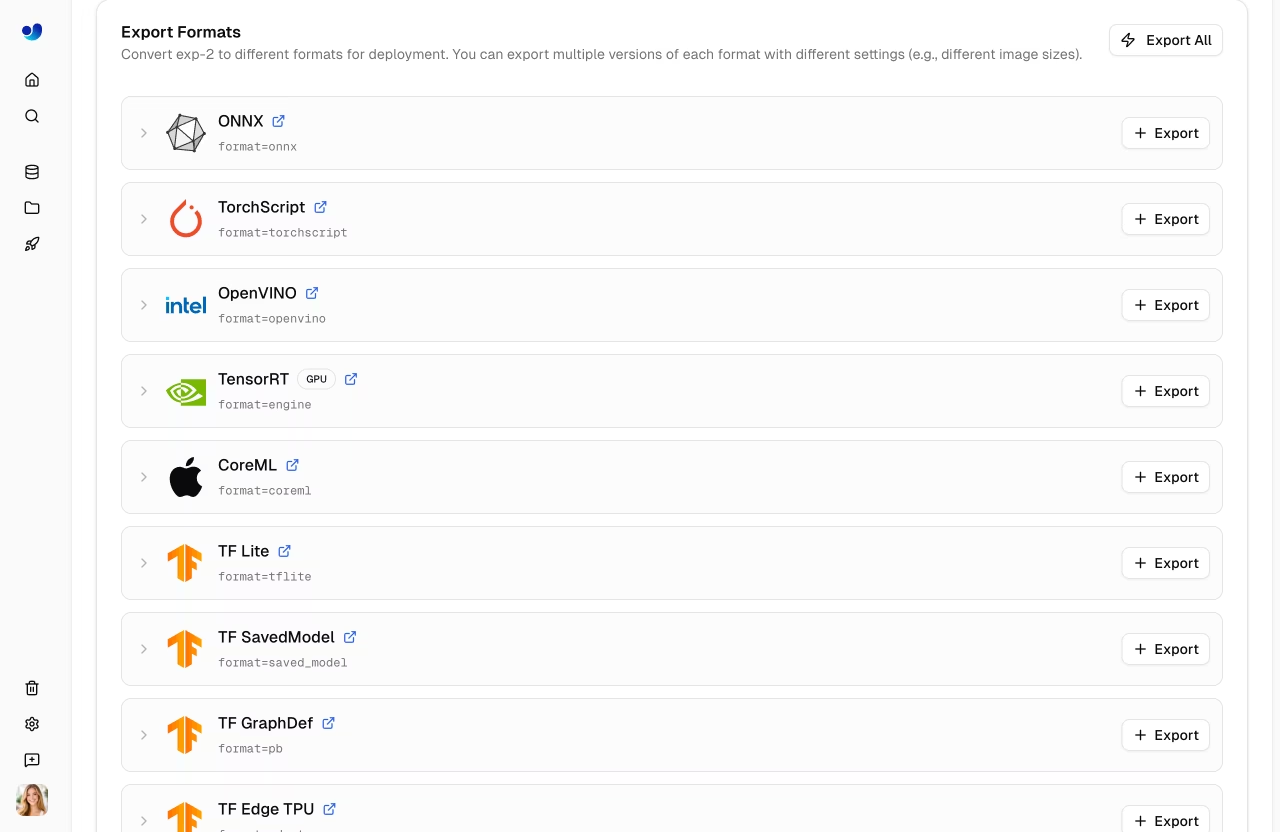

ONNX is the lingua franca of model deployment. Even if you eventually move to TensorRT or CoreML, ONNX export is usually the cleanest first step — most other formats are derived from it. The non-trivial part isn't exporting; it's verifying that the exported model still gives the same answers.

Export to ONNX with appropriate options, run the same inference through the .pt and .onnx files, and confirm parity within tolerance.

- Export with `model.export(format='onnx', dynamic=True, simplify=True)`.
- Re-run a known image through both the `.pt` and the `.onnx`. Counts and confidences should match closely.
- Run `yolo val` on the `.onnx` file. mAP should be within 0.5 of the `.pt`.
- If parity fails, check opset, dynamic shapes, and NMS settings.

Hands-on

Export

```python
from ultralytics import YOLO

model = YOLO("runs/detect/forklift_v1/weights/best.pt")

model.export(
    format="onnx",
    dynamic=True,   # variable batch + image size at inference time
    simplify=True,  # run onnx-simplifier on the graph
    opset=17,       # broadly supported by onnxruntime
    half=False,     # keep FP32 for the parity check; switch to FP16 later
)
```

That writes `runs/detect/forklift_v1/weights/best.onnx`. NMS is not part of the exported graph by default: unless you pass `nms=True` (or the model is an NMS-free end-to-end architecture), the file stops at the raw box and class predictions, and NMS runs as a post-processing step. When you load the `.onnx` with `YOLO(...)`, the Ultralytics wrapper handles that step for you.

What each option does

| Option | Default | When to change |
|---|---|---|
| `dynamic` | False | Set True if batch size or image size varies at inference |
| `simplify` | True | Leave on; onnx-simplifier removes redundant nodes |
| `opset` | latest supported | Lower (12–15) only for older runtimes that don't support newer ops |
| `half` | False | True for FP16: smaller weights and usually faster on GPU, with a slight accuracy drop |
| `int8` | False | INT8 quantization; see lesson 3 |
| `nms` | False | True to bake NMS into the exported graph; leave False if your runtime does NMS itself |
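If you script exports for several deployment targets, the table reduces to a handful of decisions. The sketch below encodes them as a keyword dictionary for `model.export(**kwargs)`. `onnx_export_kwargs` is a hypothetical helper, not part of Ultralytics; the preferred opset of 17 simply mirrors the export example, and the `nms` argument is assumed to exist in your (recent) Ultralytics release.

```python
from typing import Any, Dict, Optional


def onnx_export_kwargs(
    varying_shapes: bool,
    runtime_max_opset: Optional[int] = None,
    fp16: bool = False,
    nms_in_graph: bool = False,
    preferred_opset: int = 17,
) -> Dict[str, Any]:
    """Hypothetical helper: build kwargs for model.export() following the option table."""
    # Cap the opset at what the target runtime can load.
    opset = preferred_opset
    if runtime_max_opset is not None:
        opset = min(preferred_opset, runtime_max_opset)
    return {
        "format": "onnx",
        "dynamic": varying_shapes,  # True when batch or image size changes at inference
        "simplify": True,           # leave onnx-simplifier on
        "opset": opset,
        "half": fp16,               # keep False (FP32) for the parity check
        "nms": nms_in_graph,        # True bakes NMS into the graph
    }


print(onnx_export_kwargs(varying_shapes=True, runtime_max_opset=15))
```

For a runtime that tops out at opset 15 and sees varying image sizes, `model.export(**onnx_export_kwargs(varying_shapes=True, runtime_max_opset=15))` would request `dynamic=True` and `opset=15`.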

Run the parity check

Don't trust the export. Run a known image through both:

```python
from ultralytics import YOLO

pt = YOLO("runs/detect/forklift_v1/weights/best.pt")
onnx = YOLO("runs/detect/forklift_v1/weights/best.onnx")

img = "test_image.jpg"
pt_result = pt(img, conf=0.25)[0]
onnx_result = onnx(img, conf=0.25)[0]

print(f"PT : {len(pt_result.boxes)} detections")
print(f"ONNX: {len(onnx_result.boxes)} detections")

# Compare top-5 confidences
pt_conf = sorted([float(b.conf) for b in pt_result.boxes], reverse=True)[:5]
onnx_conf = sorted([float(b.conf) for b in onnx_result.boxes], reverse=True)[:5]

print(f"Top-5 PT : {pt_conf}")
print(f"Top-5 ONNX: {onnx_conf}")
```

You should see:

- The same detection count (or off by ±1, from boxes sitting right at the confidence or IoU threshold).

- Confidences within ~0.01 of each other.

- Same class predictions in roughly the same boxes.
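The first two checks can be folded into one function so the comparison is repeatable across runs. This is a minimal sketch with a hypothetical `detection_parity` helper; the tolerances (±1 box, 0.01 confidence, top 5) are the ones from this section, and it compares confidence values only, not box coordinates or classes.

```python
from typing import List, Sequence


def detection_parity(
    pt_confs: Sequence[float],
    onnx_confs: Sequence[float],
    count_tol: int = 1,
    conf_tol: float = 0.01,
    top_k: int = 5,
) -> List[str]:
    """Return human-readable problems; an empty list means parity holds."""
    problems: List[str] = []
    # Counts may differ by about one box near the conf/IoU thresholds.
    if abs(len(pt_confs) - len(onnx_confs)) > count_tol:
        problems.append(f"count mismatch: {len(pt_confs)} vs {len(onnx_confs)}")
    # Compare the highest-confidence detections rank by rank.
    pt_top = sorted(pt_confs, reverse=True)[:top_k]
    onnx_top = sorted(onnx_confs, reverse=True)[:top_k]
    for rank, (a, b) in enumerate(zip(pt_top, onnx_top), start=1):
        if abs(a - b) > conf_tol:
            problems.append(f"rank {rank}: conf {a:.3f} vs {b:.3f}")
    return problems
```

With the results from the parity script, `detection_parity(pt_result.boxes.conf.tolist(), onnx_result.boxes.conf.tolist())` returns an empty list when the two models agree.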

Run validation on the ONNX file

The strongest parity check is full validation on your dataset (Benchmark mode can optionally sweep the same check across export formats):

```bash
yolo val model=runs/detect/forklift_v1/weights/best.onnx data=my_dataset/data.yaml
```

mAP@0.5:0.95 should be within 0.5 points of the PyTorch model. More than that and something is wrong.

- Wrong opset. If your runtime doesn't support a newer op, export silently uses a less precise replacement. Set `opset` to the runtime's max supported version.
- Dynamic shape disagreement. If you exported with `dynamic=False` and the runtime feeds different sizes, NMS calibration is off. Re-export with `dynamic=True`.
- NMS settings drift. `conf` and `iou` are baked into the export when `nms=True`. Make sure the values match what the runtime will use.
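`conf` and `iou` are the two thresholds of non-maximum suppression, which is why a mismatch between export time and runtime changes the output. Below is a minimal greedy, per-class NMS in plain Python that shows where each threshold applies. It is an illustrative sketch, not Ultralytics' implementation; the defaults of 0.25 and 0.7 are assumed to match Ultralytics' prediction defaults.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def box_iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(
    boxes: Sequence[Box],
    scores: Sequence[float],
    classes: Sequence[int],
    conf: float = 0.25,
    iou: float = 0.7,
) -> List[int]:
    """Return indices of kept boxes, highest score first."""
    # conf: discard weak candidates before any overlap test.
    order = [i for i, s in enumerate(scores) if s > conf]
    order.sort(key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # iou: drop a box that overlaps an already-kept box of the same class too much.
        if all(classes[j] != classes[i] or box_iou(boxes[i], boxes[j]) <= iou for j in keep):
            keep.append(i)
    return keep
```

Raising `conf` removes candidates before the overlap test; raising `iou` lets more overlapping boxes of the same class survive. Either change shifts the detection count you compare in the parity check.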

Run with onnxruntime directly

Sometimes you want to skip the Ultralytics wrapper and call the inference engine yourself:

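```python
import numpy as np
import onnxruntime as ort
from PIL import Image

session = ort.InferenceSession(
    "best.onnx",
    providers=["CUDAExecutionProvider", "CPUExecutionProvider"],  # CPU is used if CUDA is unavailable
)

# Plain resize to the export size; Ultralytics letterboxes instead, so boxes can
# differ slightly from the wrapper's on non-square images.
img = Image.open("test.jpg").convert("RGB").resize((640, 640))
arr = np.array(img).transpose(2, 0, 1).astype(np.float32) / 255.0  # HWC -> CHW, scale to [0, 1]
arr = arr[None]  # add batch dimension -> NCHW

# Ultralytics names the ONNX input "images"; check session.get_inputs() if unsure.
outputs = session.run(None, {"images": arr})
print([o.shape for o in outputs])
```

This is what your production runtime will actually do; the Ultralytics wrapper is for convenience, not the fast path.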
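The snippet above resizes the image straight to 640×640, whereas the Ultralytics wrapper letterboxes it (scale to fit, then pad), so raw box coordinates from the two paths won't line up on non-square images. Below is a minimal sketch of the letterbox arithmetic, assuming centered padding to a fixed 640×640 square (the wrapper may pad differently depending on its settings). Both helper names are hypothetical.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def letterbox_params(orig_w: int, orig_h: int, size: int = 640) -> Tuple[float, int, int, int, int]:
    """Return (scale, new_w, new_h, left, top) for centered padding to size x size."""
    scale = min(size / orig_w, size / orig_h)  # keep aspect ratio, fit the longer side
    new_w, new_h = round(orig_w * scale), round(orig_h * scale)
    left = (size - new_w) // 2  # any odd pixel of padding goes to the right/bottom
    top = (size - new_h) // 2
    return scale, new_w, new_h, left, top


def unletterbox_box(box: Box, scale: float, left: int, top: int, orig_w: int, orig_h: int) -> Box:
    """Map a box from letterboxed input pixels back to original-image pixels."""
    def clip(v: float, hi: int) -> float:
        return max(0.0, min(float(hi), v))

    x1, y1, x2, y2 = box
    return (
        clip((x1 - left) / scale, orig_w),
        clip((y1 - top) / scale, orig_h),
        clip((x2 - left) / scale, orig_w),
        clip((y2 - top) / scale, orig_h),
    )


print(letterbox_params(1280, 720))
```

To build the input with PIL, you would resize to `(new_w, new_h)` and paste the result at `(left, top)` onto `Image.new("RGB", (640, 640), (114, 114, 114))`; boxes the model returns in that 640×640 space then go through `unletterbox_box` to land back on the original image.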

Export your model to ONNX. Run `yolo val` on both the `.pt` and the `.onnx`. Confirm mAP is within 0.5 points. If it's not, change `opset` to 13 and re-export.

- You have a `best.onnx` next to your `best.pt`.
- Detection counts and confidences match within tolerance.
- Validation mAP on the ONNX is within 0.5 of the PyTorch model.
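Checking the last criterion in code needs one unit conversion: Ultralytics reports `metrics.box.map` as a fraction between 0 and 1, so a 0.5-point gap is a difference of 0.005. A minimal sketch with two hypothetical helpers:

```python
def map_gap_points(map_pt: float, map_onnx: float) -> float:
    """Absolute mAP difference in points, for mAP given as a fraction in [0, 1]."""
    return abs(map_pt - map_onnx) * 100.0


def map_parity_ok(map_pt: float, map_onnx: float, tol_points: float = 0.5) -> bool:
    """True when the exported model is within tol_points mAP points of the original."""
    return map_gap_points(map_pt, map_onnx) <= tol_points


print(map_parity_ok(0.612, 0.609))
```

Applied to the `box.map` values from each model's `.val()` results, `map_parity_ok` returns `True` when the ONNX model is within half a point of the PyTorch one.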

Solution

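```python
from ultralytics import YOLO

ckpt = "runs/detect/forklift_v1/weights/best.pt"
data = "my_dataset/data.yaml"  # same dataset YAML as the CLI example

model = YOLO(ckpt)
onnx_path = model.export(format="onnx", dynamic=True, simplify=True, opset=17)  # returns the .onnx path

# Pass data explicitly so both runs use the same dataset; the .onnx file does not
# store the training dataset path that the .pt checkpoint remembers.
metrics_pt = YOLO(ckpt).val(data=data)
metrics_onnx = YOLO(onnx_path).val(data=data)

# box.map is a fraction in [0, 1]; multiply by 100 to compare in mAP points.
delta_pts = abs(metrics_pt.box.map - metrics_onnx.box.map) * 100
print(f"PT mAP@0.5:0.95 = {metrics_pt.box.map:.3f}")
print(f"ONNX mAP@0.5:0.95 = {metrics_onnx.box.map:.3f}")
print(f"Δ = {delta_pts:.2f} points (target: within 0.5)")
```

Now we'll squeeze the ONNX into TensorRT and watch latency drop in half.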