Observability and Drift

What to log, what to dashboard, and how to spot accuracy regression before users do.

A computer vision system in production has three failure modes that traditional monitoring misses: silent accuracy drops, distribution drift, and per-class regressions. The fix is to instrument the model the same way you instrument an HTTP service — with explicit, alertable signals — and to put model monitoring on the same footing as the rest of your MLOps discipline.

Define and emit the metrics that catch accuracy and drift regressions, and set thresholds that page on real problems.

Per-frame: latency, queue depth, decode failures.

Per-stream: detection volume, mean confidence, mean object size.

Per-deployment: holdout set mAP, weekly.

Distribution drift: KS-test on confidence histograms, mean object area.

Hands-on

The three layers of observability

| Layer | Question | Examples |
|---|---|---|
| Infrastructure | "Is the service up?" | CPU, GPU, memory, throughput, dropped frames |
| Model behavior | "Is the model doing what it did yesterday?" | Detection volume, mean confidence, class distribution |
| Model accuracy | "Is the model still right?" | Holdout mAP (performance metrics), sampled human labels, alarm rates |

Most teams cover the first layer with off-the-shelf tools, then forget the other two. The other two are where production CV breaks silently; model monitoring is the discipline that keeps them visible.

Metrics worth emitting per frame

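```python
import logging

from ultralytics import YOLO

log = logging.getLogger("yolo.inference")

# Assumes the deployed engine file used elsewhere in this lesson; adjust the path.
model = YOLO("production.engine")

for r in model.track("rtsp://camera-1.local/stream", stream=True, persist=True):
    inf_ms = r.speed["inference"]  # inference time for this frame, in ms
    n_boxes = len(r.boxes)
    # With no detections there is no confidence to average.
    mean_conf = float(r.boxes.conf.mean()) if n_boxes else None

    # Fields in `extra` become attributes on the log record; a structured
    # (for example JSON) formatter is needed for them to appear in the output.
    log.info(
        "inference",
        extra={
            "camera": "camera-1",
            "inference_ms": inf_ms,
            "detections": n_boxes,
            "mean_confidence": mean_conf,
        },
    )
```

Per camera, send to your metric system: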

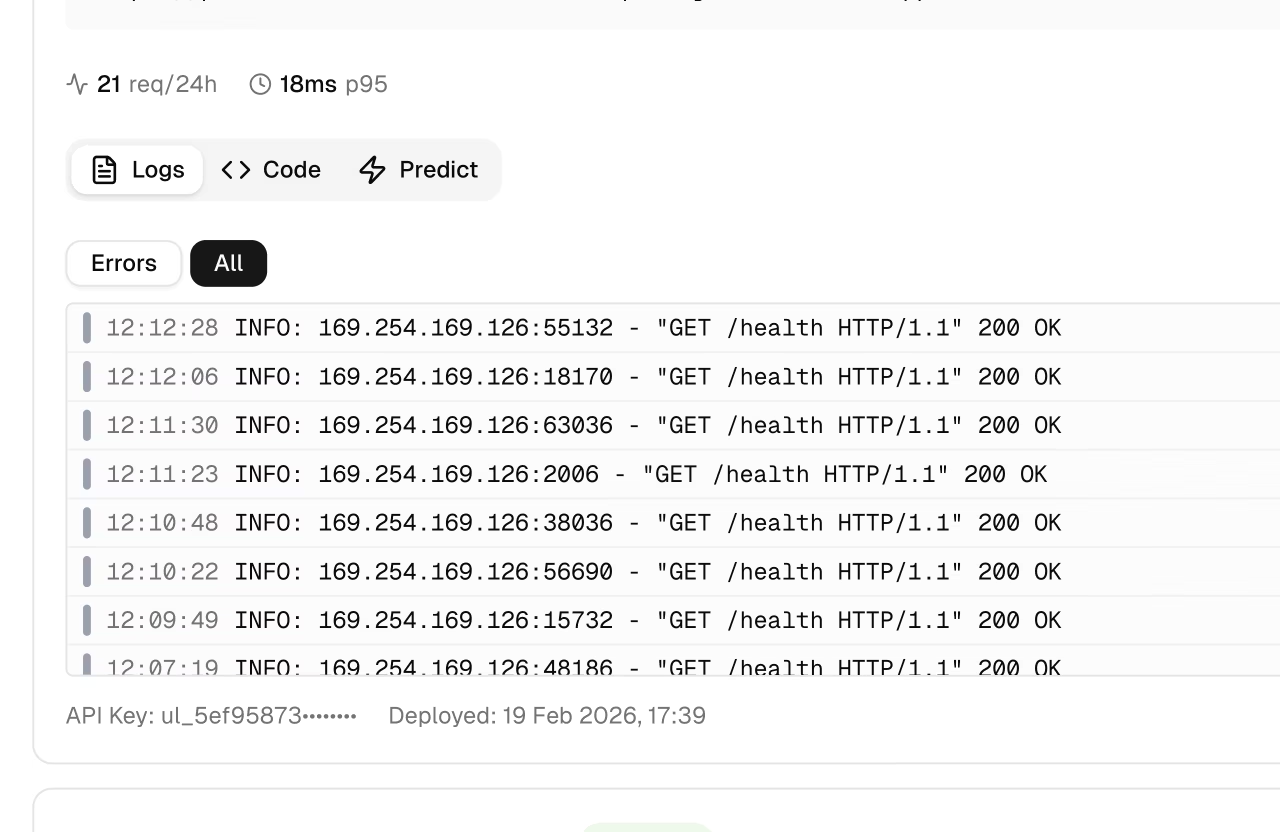

- inference_ms: inference latency. Alert on p95 above your budget.
- detections: count of returned boxes. Alert on a sudden drop or surge; it is a cheap anomaly detection signal.
- mean_confidence: distributional signal. A downward drift often reveals a domain change before holdout mAP does.
- dropped_frames: capacity signal, counted wherever frames are read or queued (the loop above does not emit it). Any value above 0 means the system is not keeping up with the stream.
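
The alerts in this list run on aggregates, not on individual frames. A minimal sketch of that aggregation, assuming you keep a rolling window per camera in process (most metric systems can compute the same thing server-side); the `CameraWindow` class, the `detection_rate_change` helper, and the 1000-frame window are hypothetical choices for illustration:

```python
from collections import deque
from statistics import quantiles


class CameraWindow:
    """Rolling window of per-frame samples for one camera (hypothetical helper)."""

    def __init__(self, max_frames=1000):  # window size is an arbitrary example
        self.latencies_ms = deque(maxlen=max_frames)
        self.detections = deque(maxlen=max_frames)

    def add(self, inference_ms, n_detections):
        self.latencies_ms.append(inference_ms)
        self.detections.append(n_detections)

    def p95_latency_ms(self):
        # quantiles(n=20) returns 19 cut points; the last is the 95th percentile.
        if len(self.latencies_ms) < 2:
            return None
        return quantiles(self.latencies_ms, n=20, method="inclusive")[-1]

    def mean_detections(self):
        if not self.detections:
            return None
        return sum(self.detections) / len(self.detections)


def detection_rate_change(baseline_mean, current_mean):
    """Relative change in detections per frame; -0.5 means a 50% drop."""
    if not baseline_mean:
        return None  # no baseline, or a baseline of zero: change is undefined
    return (current_mean - baseline_mean) / baseline_mean
```

Call `add()` once per frame inside the tracking loop, then compare `p95_latency_ms()` against your budget and `mean_detections()` against a baseline captured while the camera was known to be healthy.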

The weekly holdout check

Define a small holdout set — 200–500 production-realistic labeled images, sampled before launch — and validate against it weekly:

```shell
yolo val model=production.engine data=holdout.yaml > weekly_metrics.txt
```

Pipe the mAP into your metrics system. Alert when it drops by more than 1 mAP point (0.01 on the 0-to-1 scale that yolo val prints) from the previous week. The most common cause is a model swap that was supposed to be a no-op; the second most common is data drift (next section). A confusion matrix on the same holdout makes per-class regressions obvious.

The holdout has to look like current production. A holdout sampled from January's data won't catch September's drift. Refresh the holdout every quarter with newly labeled production frames.
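
The week-over-week comparison itself is a few lines. A rough sketch, assuming you have already extracted last week's and this week's mAP as fractions between 0 and 1; the function names are hypothetical. The per-class AP comparison is a stand-in for reading the confusion matrix by eye, not a replacement for it:

```python
def map_regression(previous_map, current_map, max_drop_points=1.0):
    """Return the drop in mAP points (0-100 scale) if it exceeds the limit, else None.

    Both inputs are fractions in [0, 1].
    """
    drop_points = (previous_map - current_map) * 100.0
    return drop_points if drop_points > max_drop_points else None


def per_class_regressions(previous_ap, current_ap, max_drop_points=1.0):
    """Compare per-class AP dicts (class name -> AP fraction).

    Returns {class name: drop in points} for classes that regressed past the limit.
    Classes missing from the current run are skipped, not flagged.
    """
    regressed = {}
    for name, prev in previous_ap.items():
        curr = current_ap.get(name)
        if curr is None:
            continue
        drop = (prev - curr) * 100.0
        if drop > max_drop_points:
            regressed[name] = round(drop, 2)
    return regressed
```

A per-class check matters because an aggregate mAP can stay flat while one class degrades and another improves.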

Distribution drift

The model's behavior changes when its inputs change — the textbook definition of data drift. Two cheap distributional signals:

- Confidence histogram. Plot a histogram of detection confidence weekly. If the distribution shifts left (fewer high-confidence detections, more uncertain ones), the input domain has probably drifted.

- Object size distribution. Plot box width × height. New camera mounts, new optics, and weather conditions all show up here.
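
Both signals reduce to a few NumPy calls once the raw values are collected. A minimal sketch, assuming confidences and box widths and heights are already available as arrays; the function names and the 0.7 cutoff are illustrative, not values from this lesson:

```python
import numpy as np


def confidence_histogram(confidences, bins=10):
    """Normalized histogram of detection confidences over [0, 1]."""
    counts, edges = np.histogram(confidences, bins=bins, range=(0.0, 1.0))
    total = counts.sum()
    fractions = counts / total if total else counts.astype(float)
    return fractions, edges


def high_confidence_share(confidences, cutoff=0.7):
    """Fraction of detections at or above `cutoff` (0.7 is an arbitrary example)."""
    confidences = np.asarray(confidences, dtype=float)
    if confidences.size == 0:
        return None
    return float((confidences >= cutoff).mean())


def box_areas(widths, heights):
    """Per-box area in square pixels from parallel width and height arrays."""
    return np.asarray(widths, dtype=float) * np.asarray(heights, dtype=float)
```

Tracking `high_confidence_share` week over week gives a single number for the "shift left" described above, while the full histogram remains the thing to look at when that number moves.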

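A Kolmogorov-Smirnov test compares two samples and returns a statistic (the largest gap between their empirical cumulative distributions) and a p-value. A p-value < 0.01 means the difference is unlikely to be sampling noise, which makes it a useful trigger for investigation, and often the input to an active learning loop or a continual learning refresh. With thousands of detections per day, even tiny shifts reach p < 0.01, so read the KS statistic alongside the p-value to judge how large the shift actually is.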

```python
import numpy as np
from scipy.stats import ks_2samp

baseline = np.load("baseline_confidences.npy")  # collected at deploy
recent = np.load("recent_confidences.npy")      # last 24 hours

statistic, p_value = ks_2samp(baseline, recent)

if p_value < 0.01:
    print(f"DRIFT: KS={statistic:.3f}, p={p_value:.4f}")
```

What to alert on

Be selective. Alerts that fire weekly without action become noise.

| Condition | Window or cadence | Rationale |
|---|---|---|
| p95 inference_ms > budget | 3 consecutive minutes | Real latency regression, not a blip |
| Detection rate drops > 50% | 5 minutes | Model returning empty results, or something upstream broke |
| Holdout mAP drops > 1.0 point | Weekly | Real accuracy regression |
| Confidence KS p < 0.01 | Daily | Distribution drift |
| Dropped frames > 5% | 10 minutes | Capacity bottleneck |

The discipline: every alert should map to a concrete next action. If you can't say what an on-call would do when the alert fires, the alert is noise.
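
The "3 consecutive minutes" style of rule is what separates a regression from a blip. One way to sketch it, assuming one sample per minute; the `SustainedAlert` class, the 50 ms budget, and the action text are hypothetical placeholders. Storing the next action on the rule itself makes it hard to add an alert nobody knows how to respond to:

```python
from dataclasses import dataclass, field


@dataclass
class SustainedAlert:
    """Fires only after `required` consecutive breaching samples (hypothetical helper)."""

    name: str
    threshold: float
    required: int  # e.g. 3 one-minute samples for "3 consecutive minutes"
    action: str    # the concrete next step for whoever is on call
    streak: int = field(default=0, init=False)

    def observe(self, value: float) -> bool:
        """Feed one sample; return True once the breach has been sustained."""
        # Any sample back under the threshold resets the streak.
        self.streak = self.streak + 1 if value > self.threshold else 0
        return self.streak >= self.required


latency_alert = SustainedAlert(
    name="p95_inference_ms",
    threshold=50.0,  # illustrative budget in ms, not a recommendation
    required=3,
    action="Roll back the last model or runtime change, then check GPU contention.",
)
```

Feed `observe()` the p95 for each minute; a single slow minute never pages, and three in a row does.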

Add per-frame logging (inference_ms, detection count, mean confidence) to your tracking loop. Stand up a small dashboard in Grafana, Datadog, or whatever you already use, and watch the graphs for an hour. The shape of the lines tells you more than any individual number.

You log inference latency, detection volume, and mean confidence per frame.

You have a weekly holdout validation job and an alert if mAP drops > 1.

You have a distribution-drift signal (confidence histogram or KS test).

Last lesson: cost and latency tuning — the levers you'll actually pull when the dashboards say something's off.