Optimize with TensorRT

FP16 and INT8 — when each one wins, and what to test before you ship.

TensorRT is usually the largest single performance improvement available on NVIDIA hardware. It compiles your model into a kernel-fused engine for the exact GPU it will run on. The cost: engines are device-specific, and quantization needs care. The reward: roughly 2–8× speedups on production hardware, and some of the lowest inference latency that hardware can deliver.

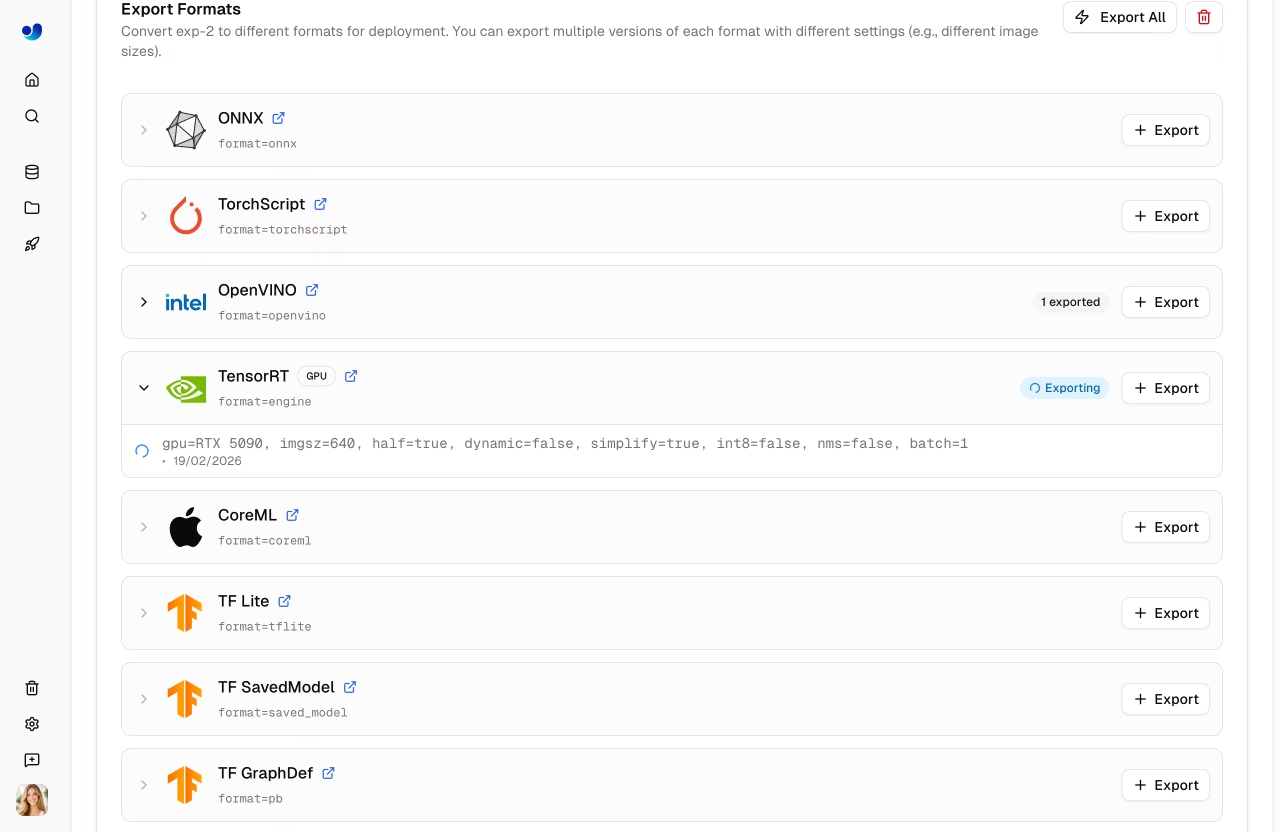

Build a TensorRT engine in FP16 or INT8, then verify that the engine matches the source model on accuracy and meets your latency target.

FP16 first:

```python
model.export(format='engine', half=True)
```

INT8 needs calibration data:

```python
model.export(format='engine', int8=True, data='my_dataset/data.yaml')
```

Always benchmark on the target GPU after export.

Verify that the engine's mAP is within 0.5 points of the source model.

Hands-on

FP16 is the safe speedup

FP16 (half precision, run as mixed precision where needed) is the biggest free lunch in TensorRT optimization. Most NVIDIA GPUs from Volta onward have Tensor Cores with dedicated FP16 support; BF16 support arrived later, with Ampere. Compared to FP32:

- ~2× faster inference.

- ~50% smaller engine.

- ~0.1–0.3 mAP points lost on most CV tasks (often within noise).

```python
from ultralytics import YOLO

model = YOLO("runs/detect/forklift_v1/weights/best.pt")

model.export(
    format="engine",
    half=True,    # FP16
    imgsz=640,    # static for max performance
    workspace=4,  # GiB workspace for TRT to use
)
```

If FP16 mAP is acceptable, ship it. Most teams stop here.
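For intuition on why FP16 usually costs so little accuracy, the sketch below rounds values to half precision with Python's standard `struct` module and measures the rounding error. The sample values are hypothetical stand-ins, not activations from a real model, and the sketch says nothing about how TensorRT chooses per-layer precision.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def max_relative_error(values):
    """Largest relative rounding error over the given non-zero values."""
    return max(abs(to_fp16(v) - v) / abs(v) for v in values)

# Hypothetical magnitudes, all inside FP16's normal range.
samples = [0.001 * k for k in range(1, 5001)]  # 0.001 .. 5.0
worst = max_relative_error(samples)
print(f"worst relative error: {worst:.2e} (bound 2**-11 = {2 ** -11:.2e})")

# Values beyond the FP16 maximum (65504) cannot be represented at all.
try:
    to_fp16(70000.0)
    overflowed = False
except OverflowError:
    overflowed = True
print("70000.0 overflows FP16:", overflowed)
```

Each value keeps roughly three significant decimal digits, which is often enough for detection outputs. The overflow case is the one to watch: layers with very large intermediate values are where FP16 accuracy problems tend to appear.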

INT8: 2× faster again, with calibration

INT8 quantization can roughly double FP16's speedup, but it requires calibration. The exporter samples representative images, observes activations, and chooses 8-bit quantization ranges that minimize accuracy loss.

```python
model.export(
    format="engine",
    int8=True,
    data="my_dataset/data.yaml",  # calibration uses the dataset's val set
    imgsz=640,
)
```

Without good calibration, INT8 can drop 5–10 mAP points. With good calibration, often less than 1.
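To see why the chosen range matters so much, here is a minimal sketch of symmetric INT8 quantization with a brute-force sweep over candidate clipping ranges. It is an illustration only: the activations are synthetic, the candidate list is arbitrary, and TensorRT's own calibrators use more elaborate statistics than this mean-squared-error sweep.

```python
import random

def quantize_dequantize(x, clip):
    """Symmetric INT8: map [-clip, clip] onto integers in [-127, 127] and back."""
    scale = clip / 127.0
    q = max(-127, min(127, round(x / scale)))
    return q * scale

def mean_squared_error(values, clip):
    """Average squared difference between values and their INT8 round trip."""
    return sum((quantize_dequantize(v, clip) - v) ** 2 for v in values) / len(values)

# Synthetic activations standing in for what calibration would observe.
rng = random.Random(0)
activations = [rng.gauss(0.0, 1.0) for _ in range(10_000)]

candidate_clips = [0.5, 1.0, 2.0, 4.0, 8.0, 16.0, 32.0]
errors = {clip: mean_squared_error(activations, clip) for clip in candidate_clips}
best_clip = min(errors, key=errors.get)

for clip in candidate_clips:
    marker = "  <- lowest error" if clip == best_clip else ""
    print(f"clip={clip:>4}: mse={errors[clip]:.6f}{marker}")
```

A range that is too narrow clips many values, and a range that is too wide wastes the 255 available levels on magnitudes that never occur. Calibration is the search for the point between those two failure modes, one tensor at a time.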

If you calibrate on COCO and deploy on warehouse cameras, the quantization ranges fit COCO activations rather than the ones your warehouse footage produces, and accuracy suffers. Always calibrate with a held-out chunk of your validation set: at least 200–500 images representative of production conditions.
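One way to prepare such a held-out subset is to draw a reproducible random sample of image paths and write them to a list file. The helper names, the default of 300 images, and the file layout below are assumptions for illustration. How you point the exporter at the list (for example, through the `val` entry of your data YAML) depends on your dataset setup and should be checked against the Ultralytics dataset documentation.

```python
import random
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}

def sample_calibration_images(image_dir, count=300, seed=0):
    """Return a reproducible random sample of image paths under image_dir."""
    images = sorted(
        p for p in Path(image_dir).rglob("*") if p.suffix.lower() in IMAGE_SUFFIXES
    )
    if len(images) < count:
        raise ValueError(f"need {count} images, found {len(images)}")
    # A fixed seed means re-exports calibrate on the same images.
    return random.Random(seed).sample(images, count)

def write_image_list(paths, list_file):
    """Write one image path per line."""
    Path(list_file).write_text("\n".join(str(p) for p in paths) + "\n")

# Hypothetical usage (paths are placeholders):
# subset = sample_calibration_images("my_dataset/images/val", count=300)
# write_image_list(subset, "my_dataset/calibration.txt")
```

Fixing the seed keeps calibration repeatable, so a change in INT8 accuracy between two exports can be attributed to the model rather than to a different draw of images.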

Verify before shipping

The verification matrix:

| Check | Threshold |
|---|---|
| mAP@0.5:0.95 | within 0.5 of source FP32 |
| Top-class AP | within 1.0 (smaller per-class data, more variance) |
| p95 latency | meets your budget |
| Determinism | run inference twice on the same image: exact same boxes (or known stochastic source) |

```bash
yolo val model=runs/detect/forklift_v1/weights/best.engine data=my_dataset/data.yaml
```

If you lose more than 0.5 mAP on FP16 or 1.0 on INT8, re-export with more workspace, use a larger calibration set, or fall back one precision level. Sweep formats with Benchmark mode to see the trade-off in one table.
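The checks can also be scripted as a simple gate. The sketch below covers three of the four rows (overall mAP, p95 latency, determinism). All numbers are hypothetical, and `verify_engine` is an illustrative helper, not part of any library. mAP values are expressed in points on a 0–100 scale, so a fractional value such as `metrics.box.map` would need multiplying by 100 first.

```python
import math

def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (0 < pct <= 100)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def verify_engine(source_map, engine_map, latencies_ms, budget_ms,
                  boxes_run1, boxes_run2, max_map_drop=0.5):
    """Return pass/fail per check; mAP values are points on a 0-100 scale."""
    return {
        "map": (source_map - engine_map) <= max_map_drop,
        "p95_latency": percentile(latencies_ms, 95) <= budget_ms,
        "determinism": boxes_run1 == boxes_run2,
    }

# Hypothetical measurements for one engine.
results = verify_engine(
    source_map=61.2,
    engine_map=60.9,
    latencies_ms=[7.9, 8.1, 8.0, 8.3, 12.5, 8.2, 8.0, 8.1, 8.4, 8.2],
    budget_ms=10.0,
    boxes_run1=[(10, 20, 110, 220)],
    boxes_run2=[(10, 20, 110, 220)],
)
for check, passed in results.items():
    print(f"{check}: {'PASS' if passed else 'FAIL'}")
```

In this made-up run the typical latency is well under budget, yet the p95 check fails because of a single slow inference. That is the reason the matrix asks for a tail percentile rather than an average.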

Latency: where the speedup actually lives

The biggest TensorRT wins come from kernel fusion — TRT collapses many small CUDA kernels into one. The wins are concentrated in:

- Conv + BN + activation chains (very common in Ultralytics YOLO).

- Attention blocks in attention-based variants.

- The detection head's many small ops.
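As a concrete instance of the first item, batch normalization at inference time is a per-channel affine transform, so it can be folded into the weights and bias of the preceding convolution. The sketch below shows that algebra on a single pixel of a 1×1 convolution with made-up parameters. It illustrates one fusion only and is not a description of TensorRT's internals, which also merge work at the CUDA kernel level.

```python
import math

def conv1x1(x, weight, bias):
    """1x1 convolution at one pixel: x holds input channels, returns output channels."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]

def batch_norm(y, gamma, beta, mean, var, eps=1e-5):
    """Inference-time batch norm applied per channel."""
    return [g * (v - m) / math.sqrt(s + eps) + b
            for v, g, b, m, s in zip(y, gamma, beta, mean, var)]

def relu(y):
    return [max(0.0, v) for v in y]

def fold_bn_into_conv(weight, bias, gamma, beta, mean, var, eps=1e-5):
    """Return conv weights and bias that reproduce conv followed by batch norm."""
    folded_w, folded_b = [], []
    for row, b, g, bt, m, s in zip(weight, bias, gamma, beta, mean, var):
        k = g / math.sqrt(s + eps)          # per-channel scale from batch norm
        folded_w.append([w * k for w in row])
        folded_b.append((b - m) * k + bt)
    return folded_w, folded_b

# Made-up parameters: 3 input channels, 2 output channels.
x = [0.5, -1.0, 2.0]
weight = [[0.2, -0.1, 0.4], [0.7, 0.3, -0.5]]
bias = [0.1, -0.2]
gamma, beta = [1.5, 0.8], [0.05, -0.1]
mean, var = [0.3, -0.4], [0.9, 1.6]

unfused = relu(batch_norm(conv1x1(x, weight, bias), gamma, beta, mean, var))
fw, fb = fold_bn_into_conv(weight, bias, gamma, beta, mean, var)
fused = relu(conv1x1(x, fw, fb))
print("unfused:", unfused)
print("fused:  ", fused)
```

Three operations become one convolution plus an activation, with the same output up to floating-point rounding. Fewer operations means fewer kernel launches and fewer round trips to GPU memory.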

Profile with trtexec or NVIDIA Nsight to see which layers dominate. Often a single layer is 30%+ of latency and tuning it (different precision, different layout) is the highest-leverage optimization.
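Before reaching for a layer-level profiler, an end-to-end timing harness is a quick way to get the p50 and p95 numbers that the latency budget refers to. The sketch below is a generic harness under stated assumptions: `infer` is any zero-argument callable, and `fake_infer` is a CPU stand-in. When timing a real GPU model, the callable must block until results are ready, otherwise the timings only measure kernel launch.

```python
import math
import statistics
import time

def measure_latency_ms(infer, warmup=10, runs=100):
    """Time repeated calls to infer() and return per-call latencies in ms."""
    for _ in range(warmup):  # let caches, clocks, and lazy initialization settle
        infer()
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies

def summarize(latencies_ms):
    """Return median, nearest-rank p95, and maximum latency."""
    ordered = sorted(latencies_ms)
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return {
        "p50": statistics.median(ordered),
        "p95": ordered[p95_index],
        "max": ordered[-1],
    }

def fake_infer():
    """Stand-in workload; replace with a call into your engine."""
    sum(i * i for i in range(10_000))

latencies = measure_latency_ms(fake_infer, warmup=5, runs=50)
stats = summarize(latencies)
print({name: round(value, 3) for name, value in stats.items()})
```

Warmup iterations are excluded because the first few calls often include one-off costs that do not reflect steady-state latency.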

Build engines on the target

A TensorRT engine compiled on an A100 will not run on a Jetson Orin. You have two options:

- Build per device. First-boot delay (10–60s) but minimal infrastructure.

- Build per device class on a build farm. Lower first-boot delay; more complex pipeline.

Most production fleets choose the first option for simplicity and accept the cold start. Once builds are stable, DeepStream on Jetson and Triton Inference Server are the natural homes for the resulting engines.
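Whichever option you pick, it helps to key each cached engine on everything it depends on, so a device never loads an engine built for different hardware. The sketch below builds such a cache path. The naming scheme and helper names are hypothetical. In a real deployment the GPU name and TensorRT version might come from `torch.cuda.get_device_name(0)` and `tensorrt.__version__`, since engines are generally tied to the TensorRT version as well as the GPU.

```python
import re
from pathlib import Path

def engine_path(weights, gpu_name, trt_version, precision, cache_dir="engines"):
    """Cache path unique per weights file, GPU model, TensorRT version, and precision."""
    slug = re.sub(r"[^a-z0-9]+", "-", gpu_name.lower()).strip("-")
    stem = Path(weights).stem
    return Path(cache_dir) / f"{stem}_{slug}_trt{trt_version}_{precision}.engine"

def needs_build(path):
    """True when no engine has been built yet for this exact combination."""
    return not Path(path).exists()

# Hypothetical device descriptions.
weights = "runs/detect/forklift_v1/weights/best.pt"
a100 = engine_path(weights, "NVIDIA A100-SXM4-40GB", "10.0.1", "fp16")
orin = engine_path(weights, "Orin", "10.0.1", "fp16")
print(a100)
print(orin)
```

On first boot a device would compute its own path, call the exporter if `needs_build` returns true, and reuse the cached file afterwards. A driver or TensorRT upgrade then triggers a rebuild automatically because the path changes.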

Build an FP16 engine with half=True. Run yolo benchmark on it. Note fps and mAP. If both are acceptable, you're done. If you need more speed, try INT8 with calibration data.

FP16 engine builds without errors.

Validation mAP on the engine is within 0.5 of the source.

Latency on the target GPU meets your budget.

Solution

```python
from ultralytics import YOLO

model = YOLO("runs/detect/forklift_v1/weights/best.pt")

# FP16 export; the engine is written next to the source weights
model.export(format="engine", half=True, imgsz=640, workspace=4)

# Validate the engine on your own dataset; pass data explicitly rather than
# relying on defaults
metrics = YOLO("runs/detect/forklift_v1/weights/best.engine").val(data="my_dataset/data.yaml")
print(f"Engine mAP@0.5:0.95 = {metrics.box.map:.3f}")

# Optional INT8 if more speed is needed
# model.export(format="engine", int8=True, data="my_dataset/data.yaml", workspace=4)
```