Collect High-Quality, Representative Data

Capture real production conditions, not staged-only images — and avoid the collection biases that quietly kill models.

Most dataset failures are collection failures. The team captures clean, well-lit examples on a single camera in one week, then the model fails in production on the long tail. Quality over quantity, but variance over both.

Collect or source images that mirror real deployment conditions, with diversity across camera, time, geography, and operator — and an audit log to prove it.

Capture from production cameras when possible — not staged setups.

Spread collection across days, shifts, and weather, not one heroic session.

Mix sources: live capture, archived footage, public datasets, and (sparingly) synthetic.

Log every image's metadata (camera, time, location) for traceability.

Hands-on

Quality over quantity — and variance over both

A useful rule from the Ultralytics training-results guidance: a model's ceiling is set by the worst-represented scenario in its training data. So:

- 10,000 nearly-identical images from one camera at noon → great metrics on the val set, terrible on a different camera at dusk.

- 1,500 images that span 12 scenarios → modest val metrics, but they generalize.

Optimize for variance first, volume second.

Source mix

Mix these sources deliberately — see the data collection and annotation guide for deeper coverage:

| Source | When it helps | When it hurts |

|---|---|---|

| Production cameras (live) | Closest to deployment; ground truth | Slow; may need data agreements |

| Production cameras (archived) | Lots of variance for free | Old footage may not match current setup |

| Public datasets (COCO, Open Images, VOC) | Bootstrap quickly; add diversity | Domain shift from your real scenes |

| Web scraping | Cheap variance | Inconsistent quality, licensing risk |

| Synthetic data | Fill rare classes (faults, hazards) | Overfit to renderer's style |

| Staged captures | Edge cases you can't wait for | Easy to over-rely on |

A common production blend: 70% live/archived production data, 20% staged edge cases, 10% public-dataset background diversity.

Common collection pitfalls

The failure modes that show up over and over:

| Pitfall | Symptom | Fix |

|---|---|---|

| Single-day collection | High val mAP, drops at dusk in prod | Spread across ≥ 7 days, ideally a full month |

| One camera | Model fails on second camera | Capture from ≥ 3 different cameras |

| Staged-only data | Model fails on real chaos | At least 50% from genuine production |

| Duplicate frames | Inflated metrics, leaked val | Dedup at upload (Ultralytics Platform does this with content hashing) |

| Biased operator | Class imbalance you didn't plan for | Sample across shifts, sites, and people |

| Missing rare cases | Model misses safety events | Stratified sampling or synthetic top-up |

| Time correlation | Val frames ~1s after train frames | Split by day or scene, not random per-image |

Bias is a collection problem, not a modeling problem

A biased dataset produces a biased model — and you can't fix it later with hyperparameters. The data collection guide on bias calls out four levers:

- Diverse sources — don't collect from one site, one shift, or one team.

- Balanced representation — across age, gender, ethnicity, geography (where applicable to your task).

- Continuous monitoring — re-audit the dataset every few weeks for drift in coverage.

- Mitigation techniques — oversample rare classes, augment heavily on minority groups, fairness-aware sampling.

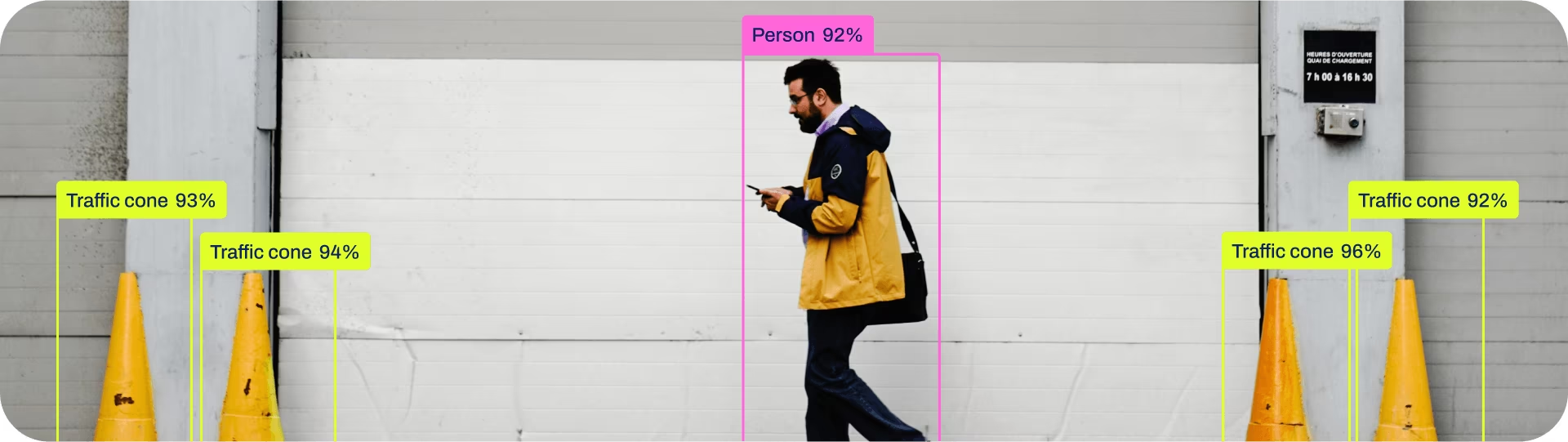

For face / person detection in particular, ethical collection matters as much as technical correctness — see the AI ethics glossary entry.

Log everything you capture

Every image should carry, at minimum: camera ID, timestamp, location, and any operator-relevant metadata (shift, weather, conditions). Without this you can't:

- Stratify splits later (lesson 6).

- Diagnose a regression to a specific source.

- Re-collect more of an under-represented scenario.

Ultralytics Platform preserves this metadata as you upload; for self-hosted pipelines, encode it in filenames or a sidecar JSON. Either way: don't drop it.

Ethics and consent

If your data includes faces, license plates, voices, or any biometric / PII data, you'll need consent and a retention policy. The data privacy glossary entry is the right place to start, and the regions / compliance lesson in the Build with Ultralytics Platform course covers dataRegion for keeping data in the right jurisdiction.

Audit your last 200 collected images. For each, check: do you know the camera, the time, and the location? If not, your collection metadata pipeline needs fixing before scaling.

≥ 50% of your dataset comes from real production sources.

You have images from at least 3 different cameras / capture conditions.

Every image has at least camera + timestamp metadata.

You've checked for duplicates and removed them before annotation.

Pixels in hand. Next: turn them into labels — consistently, the first time.