Start With the Business Objective

Translate a business goal into a vision task, a class list, and a success metric before any image is collected.

Datasets that fail in production often fail for the same reason: the team started collecting images before it finished arguing about what the model was actually supposed to do. The cure is a written objective. Writing it takes about 30 minutes and can save months of rework. It also aligns with the steps of a CV project guide.

Write a one-page objective that names the business goal, the vision task, the classes, and the metric that defines success.

Business goal in one sentence.

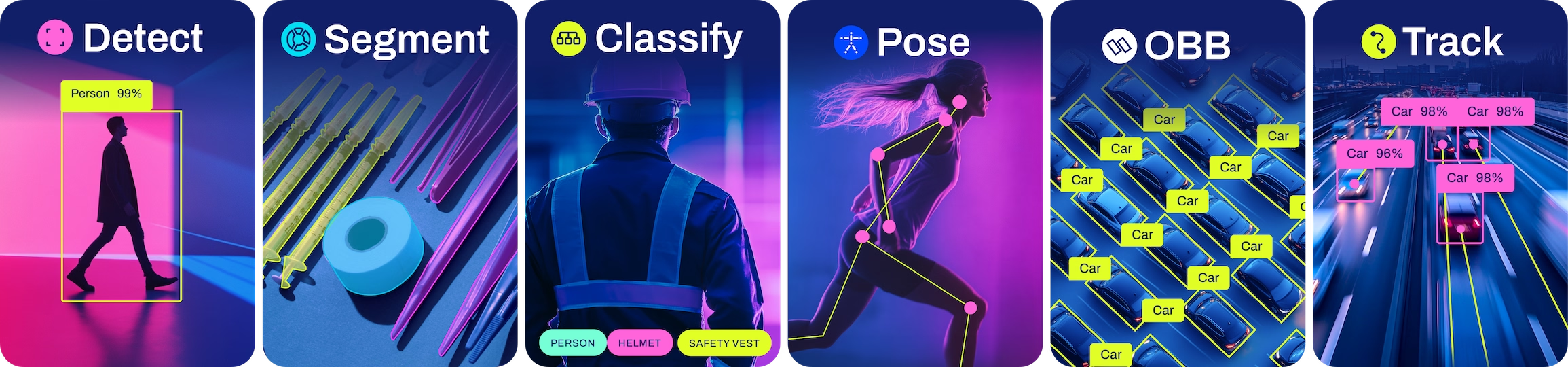

Vision task: detect / segment / classify / pose / OBB.

Final class list (3–10 classes is a good first target).

Success metric: e.g. mAP@0.5 ≥ 0.80 on the holdout set, or recall ≥ 0.95 on the safety class.

Hands-on

The four-line spec

A vision project that ships has answered four questions in writing (see defining project goals and the broader data collection and annotation guide):

| Field | Example |
|---|---|
| Business goal | "Reduce dock-door incidents by 30% in 6 months." |
| Vision task | Object detection: boxes for forklifts and people. |
| Classes | forklift, person, pallet (background = no label). |
| Success metric | mAP@0.5 ≥ 0.80 and recall on person ≥ 0.95 on a holdout from cameras the model has never seen. |

Pin these to a wiki. Every dataset and labeling decision below traces back to this page.
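If the spec should also live next to the code, it can be captured as a small data structure. The following is a minimal sketch, not part of any library: `ProjectSpec`, `VISION_TASKS`, and `problems` are hypothetical names, and the checks simply mirror the guidance in this lesson (one known task, no duplicate classes, 3–10 classes as a first target).

```python
from dataclasses import dataclass

# Task names follow the list in this lesson: detect / segment / classify / pose / OBB.
VISION_TASKS = {"detect", "segment", "classify", "pose", "obb"}


@dataclass(frozen=True)
class ProjectSpec:
    """Hypothetical container for the four-line spec (not a library class)."""

    business_goal: str
    vision_task: str
    classes: tuple
    success_metric: str

    def problems(self):
        """Return human-readable issues; an empty list means nothing was flagged."""
        issues = []
        if not self.business_goal.strip():
            issues.append("business goal is empty")
        if self.vision_task not in VISION_TASKS:
            issues.append(f"unknown vision task: {self.vision_task!r}")
        if len(set(self.classes)) != len(self.classes):
            issues.append("class list contains duplicates")
        if not 3 <= len(self.classes) <= 10:
            issues.append("class count is outside the 3-10 first target; write down why")
        if not self.success_metric.strip():
            issues.append("success metric is empty")
        return issues


# Values taken from the example table above.
spec = ProjectSpec(
    business_goal="Reduce dock-door incidents by 30% in 6 months.",
    vision_task="detect",
    classes=("forklift", "person", "pallet"),
    success_metric="mAP@0.5 >= 0.80 and recall on person >= 0.95 on unseen cameras",
)
print(spec.problems())
```

The class is frozen on purpose: changing the spec should be a deliberate, reviewed edit rather than something a training script does in passing.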

Picking the task is picking the labels

Each vision task implies a different annotation cost — see Ultralytics YOLO tasks for definitions:

| Task | Labels | Relative annotation cost |
|---|---|---|
| Classification | One label per image | 1× |
| Detection | Class + axis-aligned box per object | 3–5× |
| OBB | Class + rotated box | 4–6× |
| Pose | Class + keypoints per object | 5–8× |
| Segmentation | Class + pixel mask per object | 8–15× |

Pick the simplest task that solves the business problem. Teams often reach for segmentation when detection would do, and then run out of annotation budget. The CV Foundations course covers the task-picking decision tree in detail.
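To see how quickly the multipliers add up, the table can be turned into a rough budget estimate. This is a back-of-the-envelope sketch under stated assumptions: the multipliers are the ranges from the table above, applied per image, and the 5 seconds per classification label is a made-up baseline that you should replace with a timing from your own annotators. `RELATIVE_COST` and `annotation_seconds` are hypothetical names.

```python
# (low, high) multipliers relative to classification, copied from the table above.
RELATIVE_COST = {
    "classify": (1, 1),
    "detect": (3, 5),
    "obb": (4, 6),
    "pose": (5, 8),
    "segment": (8, 15),
}


def annotation_seconds(task, num_images, seconds_per_classification_label):
    """Return a (low, high) estimate of total labeling time in seconds."""
    low, high = RELATIVE_COST[task]
    base = num_images * seconds_per_classification_label
    return base * low, base * high


# Assumed baseline: 5 seconds to assign one classification label to one image.
for task in RELATIVE_COST:
    low, high = annotation_seconds(task, num_images=10_000, seconds_per_classification_label=5)
    print(f"{task:>8}: {low / 3600:.0f} to {high / 3600:.0f} hours")
```

Running the same dataset size through every task makes the gap between detection and segmentation concrete before any labeling contract is signed.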

Coarse vs. fine class counts

When you write the class list, decide between coarse and fine granularity:

- Coarse ("vehicle", "non-vehicle"): cheaper to label, faster to converge, less informative.

- Fine ("sedan", "SUV", "pickup", "motorcycle"): more useful downstream, more expensive, harder to keep consistent.

Start with what the business actually needs. You can always merge fine classes into coarser groups later (one line of code); you can't split coarse labels back into fine ones without re-labeling.
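The asymmetry between merging and splitting can be sketched with a plain dictionary. The class names come from the coarse and fine examples above; `FINE_TO_COARSE` and the sample label list are hypothetical and stand in for whatever label format your dataset uses.

```python
# Merging is a lookup: each fine class maps to exactly one coarse class.
FINE_TO_COARSE = {
    "sedan": "vehicle",
    "SUV": "vehicle",
    "pickup": "vehicle",
    "motorcycle": "vehicle",
}

fine_labels = ["sedan", "pickup", "SUV", "motorcycle", "sedan"]

# The "one line of code" merge.
coarse_labels = [FINE_TO_COARSE[label] for label in fine_labels]

# Splitting has no such lookup: one coarse label has several possible fine labels,
# so recovering the fine class means looking at the image again.
candidates = sorted(fine for fine, coarse in FINE_TO_COARSE.items() if coarse == "vehicle")

print(coarse_labels)
print(candidates)
```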

"Anything that looks dangerous" or "stuff blocking the aisle" is not a class — it's a request for a judgment call from the annotator. Models can't learn from inconsistent judgment. If the class is hard to write down, it'll be impossible to label.

Define the metric before you train

Pick the metric that matches the business goal. A safety system cares about recall on the safety class; a counting system cares about balanced per-class AP; a sorting system cares about precision, so you don't ship the wrong item. The YOLO performance metrics guide covers what each metric means.

A useful exercise: write the acceptance test in plain English. Example: "On 500 frames from cameras the model has never seen, recall on person must be ≥ 0.95 with precision ≥ 0.85." That sentence drives the rest of the course.
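The plain-English acceptance test translates almost directly into a pass/fail check. The sketch below assumes you already have true-positive, false-positive, and false-negative counts for the person class on the holdout frames; how those counts are produced (IoU threshold, confidence threshold) is outside this snippet. The function names and the example counts are hypothetical, and the thresholds are the ones from the example sentence.

```python
def precision_recall(tp, fp, fn):
    """Compute precision and recall from raw counts, guarding against empty denominators."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def passes_acceptance(tp, fp, fn, min_recall=0.95, min_precision=0.85):
    """Return True only if both constraints from the acceptance test hold."""
    precision, recall = precision_recall(tp, fp, fn)
    return recall >= min_recall and precision >= min_precision


# Made-up counts for the person class on the holdout frames.
print(passes_acceptance(tp=960, fp=120, fn=40))   # recall 0.96, precision about 0.89
print(passes_acceptance(tp=900, fp=50, fn=100))   # recall 0.90, below the 0.95 floor
```

Keeping both constraints in one function matters: recall alone can be pushed up by lowering the confidence threshold, and the precision floor is what stops that from counting as a pass.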

Write your project's four-line spec on a single page: business goal, vision task, class list, success metric. Share it with the stakeholder who's paying for the model. If they push back on any line, that's the line to fix before doing anything else.

Your business goal fits in one sentence.

You've picked exactly one vision task.

Your class list is 3–10 specific, non-overlapping classes (or you've written down why you need more).

Your success metric is a number, on a holdout, with a precision/recall constraint.

We have a target. Next: write the dataset spec that says what images we need to hit it.