Enterprise Client Checklist

A reusable, customer-facing checklist that CS, Platform, Docs, and Academy can all point to.

The hardest part of a CV project isn't the model — it's repeatability. This checklist is the artifact you give a customer at the start of every project, and the artifact you tick off before every retrain. It synthesizes everything from the previous nine lessons into a one-page document.

Hand the customer (or your future self) a single page that captures every dataset decision and gates the path to a production model.

One page. Print it, share it, gate every project on it.

Nine questions. Items 1–4 and 9 must be answered before any pixel is captured; items 5–8 gate annotation and training.

Re-run before every retrain — projects evolve, the answers change.

Use it as the agenda for the customer kickoff.

Hands-on

The Dataset Readiness Checklist

Reusable across CS, Sales Enablement, Platform onboarding, and Academy. Print it, share it, and gate every project on it. The questions below come straight from the Data Collection and Annotation guide and the Steps of a CV Project guide, collapsed into one page that a non-engineer can run.

# Ultralytics — Dataset Readiness Checklist

Project: ____________________________________________________

Owner: ____________________________________________________

Date: ____________________________________________________

## 1. What are we detecting?

Vision task: [ ] detect [ ] segment [ ] classify [ ] pose [ ] OBB

Class list: ___________________________________________

Class definitions written? [ ] yes [ ] no

## 2. Where will the model run?

[ ] Cloud GPU [ ] Cloud CPU [ ] Edge (Jetson/Pi) [ ] Mobile [ ] Browser

Latency target: ____ ms Min hardware: __________________

## 3. What visual variation exists?

Times of day: _______________________________________

Weather: _______________________________________

Cameras / sites: _______________________________________

Operators: _______________________________________

Product variants:_______________________________________

## 4. What edge cases matter?

_______________________________________________________

_______________________________________________________

## 5. Do we have negative examples?

[ ] Yes — ___% of dataset is background images (target 0–10%)

[ ] No — collect before training

## 6. Are labels consistent?

[ ] Labeling guide written

[ ] Calibration round done; inter-rater agreement ___%

[ ] QC report attached

## 7. Did we check for leakage?

Split unit: [ ] image [ ] scene [ ] camera [ ] day [ ] location

No scene / camera / day appears in more than one split? [ ] yes [ ] no

## 8. Is the test set truly unseen?

[ ] Locked. Touched only once for go/no-go.

[ ] Still being tuned against — STOP, re-split.

## 9. What performance metric defines success?

Metric: ______________________ Target: ____________

Acceptance test: _____________________________________

---

Volume targets met?

[ ] ≥ 1500 images / class

[ ] ≥ 10,000 labeled instances / class

[ ] OR documented exception: ________________________

Ready to fine-tune Ultralytics YOLO26?

[ ] Yes — start with the nano checkpoint matching the task selected in #1

(detect: `yolo26n.pt` / segment: `yolo26n-seg.pt` / pose: `yolo26n-pose.pt`

/ OBB: `yolo26n-obb.pt` / classify: `yolo26n-cls.pt`)

[ ] No — fix item ___ first

How to use it

At kickoff. Walk through it with the customer or stakeholder. Item 9 (success metric) is usually the one that surfaces real disagreement; spending an hour on it saves the project.

Before collection. Items 1–4 set the dataset spec. Don't capture data without them filled in.

Before annotation. Items 5–6 gate the labeling stage.

Before training. Items 7–8 plus the volume targets gate the first training run.

Before retraining. Re-run the whole thing. New classes, new collection, new annotators — each can break a previously-passing item.
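The numeric gates in items 5 and 7 and the volume targets can be re-checked mechanically on every re-run. The following is a minimal sketch, not a prescribed tool: the function names, the input shapes (a dict of image name to annotation list, a list of `(split, group)` pairs, per-class count dicts), and the toy data are all hypothetical. Only the thresholds (0–10% background, 1500 images and 10,000 instances per class) come from the checklist.

```python
from collections import defaultdict


def background_percentage(labels_per_image):
    """Percentage of images with no annotations (item 5).

    labels_per_image maps an image name to its list of annotations;
    an empty list marks a background (negative) image.
    """
    if not labels_per_image:
        raise ValueError("dataset is empty")
    background = sum(1 for anns in labels_per_image.values() if not anns)
    return 100.0 * background / len(labels_per_image)


def find_leaks(records):
    """Groups that appear in more than one split (item 7).

    records is an iterable of (split, group) pairs, where group is the
    chosen split unit: a scene, camera, day, or location identifier.
    """
    splits_by_group = defaultdict(set)
    for split, group in records:
        splits_by_group[group].add(split)
    return {g: sorted(s) for g, s in splits_by_group.items() if len(s) > 1}


def volume_shortfalls(images_per_class, instances_per_class,
                      min_images=1500, min_instances=10_000):
    """Classes below the checklist's volume targets, with their counts."""
    short = {}
    for cls in set(images_per_class) | set(instances_per_class):
        n_img = images_per_class.get(cls, 0)
        n_inst = instances_per_class.get(cls, 0)
        if n_img < min_images or n_inst < min_instances:
            short[cls] = (n_img, n_inst)
    return short


# Hypothetical toy inputs, for illustration only.
labels = {f"img_{i:03d}.jpg": ["scratch"] for i in range(19)}
labels["img_019.jpg"] = []  # one background image out of 20
pct = background_percentage(labels)

records = [("train", "cam_a"), ("train", "cam_b"),
           ("val", "cam_b"), ("test", "cam_c")]
leaks = find_leaks(records)

short = volume_shortfalls({"scratch": 1800, "dent": 900},
                          {"scratch": 12_000, "dent": 11_000})

print(f"background: {pct:.1f}% (target 0-10%)")
print("groups in more than one split:", leaks)
print("classes below volume targets:", short)
```

An empty result from `find_leaks` and `volume_shortfalls` corresponds to ticking "yes" on item 7 and on the volume targets; anything else is either a fix or a documented exception.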

Why a single page

The checklist works because it fits on a single page and uses words a non-engineer can verify. Customer success can run it without engineering present. Sales enablement can use it in pre-sales conversations. Platform onboarding can attach it to a workspace. Docs can link to it. Academy gates the certificate on it.

Same artifact, every team. That's the point.

What this course got you

You can now:

- Translate a business goal into a vision task and class list.

- Write a dataset specification before collecting.

- Plan a representative collection across cameras, times, weather, and operators.

- Annotate consistently with a written guide.

- Run a structured QC pass.

- Split cleanly without leaking.

- Use augmentation that reflects deployment.

- Decide objectively whether the dataset is ready.

- Run the first fine-tune and read its results.

- Hand a customer or your team the checklist.

The next time someone says "we're thinking of a CV project," you have the conversation, the spec, and the checklist on day one — not week six.

Where to go next

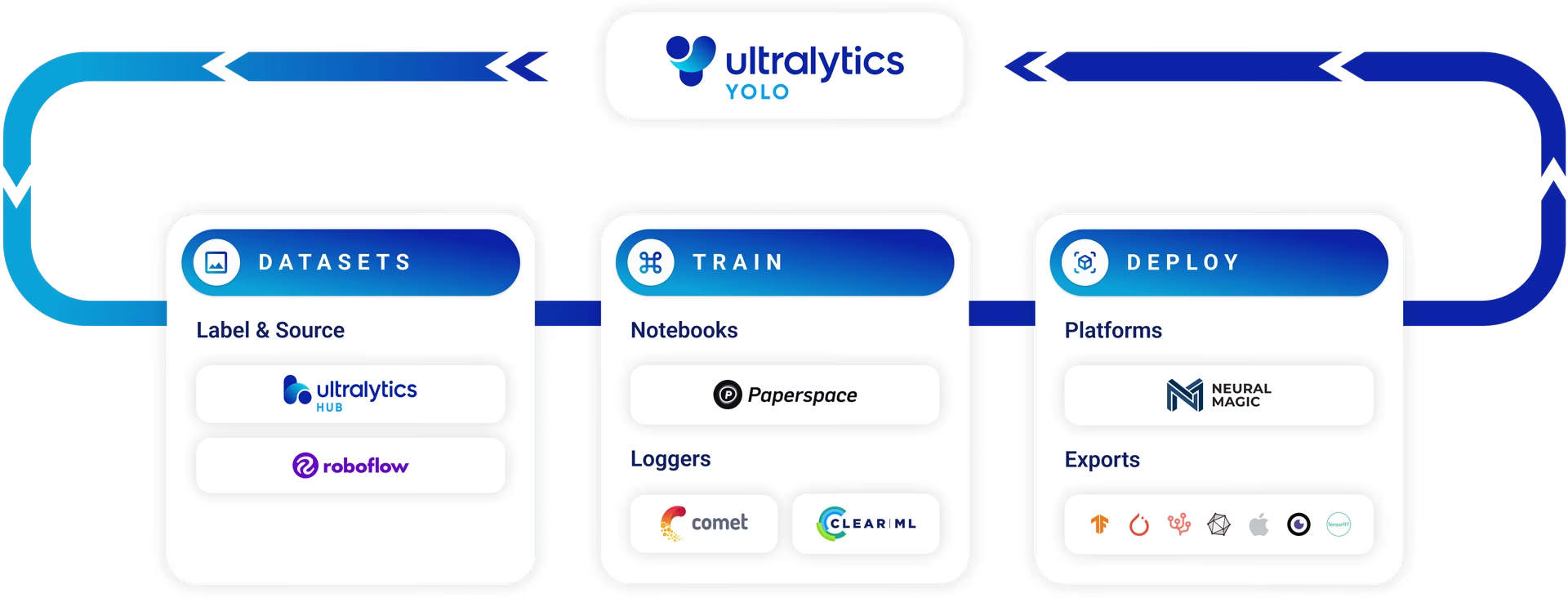

- Train your first YOLO model — convert your ready dataset into the YOLO label format, write the `data.yaml`, and run `model.train(...)`.

- Fine-tuning guide — the deeper reference for layer freezing, two-stage training, and optimizer choice once defaults aren't enough.

- Build with Ultralytics Platform — manage the full lifecycle (dataset → cloud training → endpoint → monitoring → retrain) in one place.

- Ultralytics YOLO in Production — export, serve multi-stream, and observe the model after deployment.
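The first step in the list above (write the `data.yaml`, then run `model.train(...)`) can be sketched as follows. This is a rough illustration under stated assumptions: the helper names `data_yaml_text` and `fine_tune`, the dataset root `datasets/widgets`, the class names, and the `epochs` and `imgsz` values are all hypothetical. The checkpoint names are the ones listed in the checklist, and the YAML layout shown is the one used for detection-style datasets; classification datasets are typically organised as folders instead.

```python
# Nano checkpoints per task, as listed in the checklist.
CHECKPOINTS = {
    "detect": "yolo26n.pt",
    "segment": "yolo26n-seg.pt",
    "pose": "yolo26n-pose.pt",
    "obb": "yolo26n-obb.pt",
    "classify": "yolo26n-cls.pt",
}


def data_yaml_text(root, class_names):
    """Build the text of a detection-style dataset YAML.

    root is the dataset directory; the split sub-paths below are an
    assumed layout, so adjust them to match the actual folders.
    """
    lines = [
        f"path: {root}",
        "train: images/train",
        "val: images/val",
        "test: images/test",
        "names:",
    ]
    lines += [f"  {i}: {name}" for i, name in enumerate(class_names)]
    return "\n".join(lines) + "\n"


def fine_tune(task, data_yaml, epochs=100, imgsz=640):
    """Start a fine-tune from the nano checkpoint for the chosen task."""
    # Imported lazily so the helper above works without ultralytics installed.
    from ultralytics import YOLO

    model = YOLO(CHECKPOINTS[task])
    return model.train(data=data_yaml, epochs=epochs, imgsz=imgsz)


# Hypothetical dataset root and class list, for illustration only.
text = data_yaml_text("datasets/widgets", ["scratch", "dent"])
print(text)
```

Saving `text` to `data.yaml` and calling `fine_tune("detect", "data.yaml")` would then launch the run; the call is left out of the sketch because it downloads weights and needs a real dataset on disk.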

Print the checklist. Walk through it with one project — your own, or a customer's. Note which items get a clear yes, which get a no, which get a "we don't know yet". The "don't knows" are tomorrow's project plan.

You've completed the checklist for at least one project.

Every item has a yes, a no, or a documented exception.

The checklist is shared with the project's owner / customer.

Course complete — take the final quiz to earn your Building High-Performance YOLO Datasets certificate.