Use Augmentation Carefully

Augmentation extends variance — it doesn't replace real data. The rules for picking transforms that match deployment conditions.

Data augmentation is a force multiplier when it produces images that could plausibly happen at deployment. It's a rounding error — or worse — when it doesn't. The mental model: augmentation is synthetic variance, and synthetic variance only generalizes if it matches real-world variance.

Choose an augmentation profile that fits your deployment environment and reject augmentations that would never happen in the real world.

Augmentation extends variance; it doesn't replace real data.

Use defaults first — Ultralytics YOLO ships sensible per-task augmentation.

Match transforms to deployment: vertical flip is fine for top-down cameras, wrong for portraits.

Disable mosaic in the last 10 epochs (

close_mosaic=10) for cleaner final epochs.

Hands-on

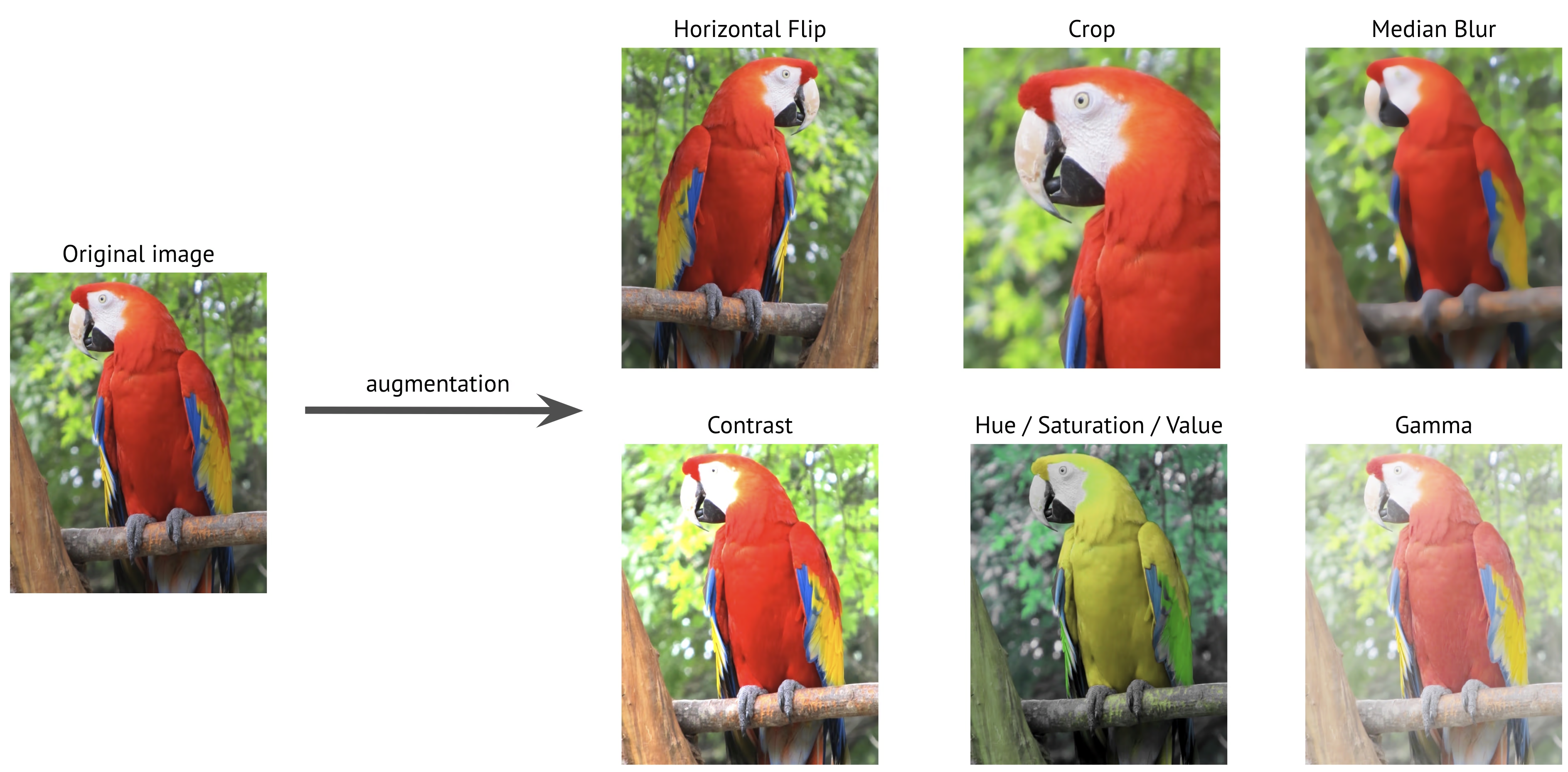

What augmentation actually does

Data augmentation — and the broader preprocessing annotated data guide — describes how synthetic variance is generated from your real images by transforming them on the fly during training. Done well it:

- Expands effective dataset size 5–20×.

- Improves robustness to lighting, scale, and orientation changes.

- Reduces overfitting — the model can't memorize images that change every epoch.

Done badly it:

- Teaches the model to recognize physically impossible scenes.

- Wastes GPU time generating noise.

- Hides dataset weaknesses you should be fixing.

Ultralytics YOLO defaults

Ultralytics YOLO ships sensible defaults — see the data augmentation guide for the full list. The augmentations you'll hear about most:

| Augmentation | Default | What it does | When to disable |

|---|---|---|---|

hsv_h / hsv_s / hsv_v | 0.015 / 0.7 / 0.4 | Color jitter | If color is a class signal (e.g. red vs. green box) |

degrees | 0.0 | Rotation | Already on by default for some tasks; set explicitly if needed |

translate | 0.1 | Random translate | Almost never disable |

scale | 0.5 | Zoom in/out | Almost never disable |

fliplr | 0.5 | Horizontal flip | If left/right matters (license plates, text) |

flipud | 0.0 | Vertical flip | Only enable for top-down / aerial / OBB tasks |

mosaic | 1.0 | 4-image grid | Disabled in final epochs via close_mosaic |

mixup | 0.0 | Blend two images | Helpful for small datasets; off by default |

copy_paste | 0.0 | Paste segmented objects | Segment-only; useful for rare classes |

Train with defaults first. They're tuned across thousands of datasets. Touch them only when you have evidence they're hurting.

The "would this happen in production?" test

Every augmentation you enable should pass one question: could the deployment environment ever produce this image?

| Augmentation | Production-realistic? | Verdict |

|---|---|---|

| Brightness ± 30% | Yes — different times of day | ✅ Enable |

| Horizontal flip on a security camera | Yes — opposite-handed people exist | ✅ Enable |

| Vertical flip on a portrait | No — gravity is real | ❌ Disable |

| 90° rotation on a road camera | No — cameras don't rotate | ❌ Disable |

| Heavy blur on a 4K camera | Maybe — fog / motion blur | ⚠️ Limit |

| Grayscale on a color-coded task | No — destroys signal | ❌ Disable |

Augmentations that fail this test produce negative training signal — the model learns to recognize images it'll never see, at the cost of capacity for ones it will.

Mosaic and close-mosaic

Mosaic stitches 4 images into one and is enabled by default — it's the highest-leverage augmentation for object detection because it forces the model to learn from many small contexts at once.

The catch: mosaic produces images that don't look like deployment frames. Train most of the run with mosaic on, then disable it for the final 10 epochs to let the model stabilize on real-shape inputs:

from ultralytics import YOLO

model = YOLO("yolo26n.pt")

model.train(

data="data.yaml",

epochs=100,

close_mosaic=10, # disable mosaic in final 10 epochs

)This is on by default; only adjust if you have a very short total run or a very long one.

Augmentation is not a replacement for real data

A common anti-pattern: dataset is too small, team cranks every augmentation knob to its max, expects the model to fill the gap. It won't.

Augmentation is a multiplier on what's already there. If your dataset has zero examples of nighttime, no augmentation produces nighttime — it produces brighter and dimmer versions of daytime. The fix is collection, not augmentation.

If the augmentation has to be aggressive to make a class work, you don't have enough of that class. Go collect more.

Validate by eye

Open a few augmented training samples — most labeling tools and Ultralytics YOLO will save mosaiced batches at the start of training (train_batch0.jpg). Look for:

- Boxes still tight after the transform.

- Class-distinctive features still visible (color, shape, text).

- No physically impossible images.

If anything looks wrong, scale back the transform that caused it.

List your augmentation profile next to your deployment scenarios. For each transform, write "production-realistic: yes/no". Disable everything that fails the test. Re-train. Note the change in val mAP — usually it goes up.

You've trained at least one run with default augmentation.

You've removed any transforms that produce unrealistic deployment images.

You've kept

close_mosaicenabled (default) for the final epochs.Visual review of

train_batch*.jpgshows realistic, well-labeled augmentations.

Dataset, splits, augmentation — all in place. Next: confirm we're actually ready to train.