Dataset Quality Control

Find missing labels, wrong classes, sloppy boxes, duplicates, and class imbalance — before you train.

Fixing labels usually improves performance more than tuning hyperparameters. The QC pass is where you find what the labeling guide missed and where you confirm the dataset's distribution matches your spec. Skipping QC is how teams burn a week of GPU time on a fixable label bug.

Run a structured QC pass on the labeled dataset, fix what's broken, and document the result.

Visual review — open the dataset gallery and skim 100+ random samples.

Coverage check — every relevant object labeled in every image.

Class histogram — flag imbalanced classes for collection or oversampling.

Leakage check — same scene / camera / day shouldn't span multiple splits.

Hands-on

The seven-check QC pass

Run all seven before any training run. None of them takes more than 10 minutes, and the preprocessing annotated data guide covers many of them in detail:

| # | Check | What you're looking for | Tools |

|---|---|---|---|

| 1 | Missing labels | Images where annotators forgot some objects | Visual review, low-detection-count outliers |

| 2 | Wrong classes | Class indices swapped, mislabeled instances | Confusion in val confusion matrix; random sample audits |

| 3 | Loose / tight boxes | Boxes that don't closely enclose objects | Visual review; small box-to-object ratio anomalies |

| 4 | Duplicate images | Near-identical frames split across train/val | Perceptual hash + content hash (Platform does this automatically) |

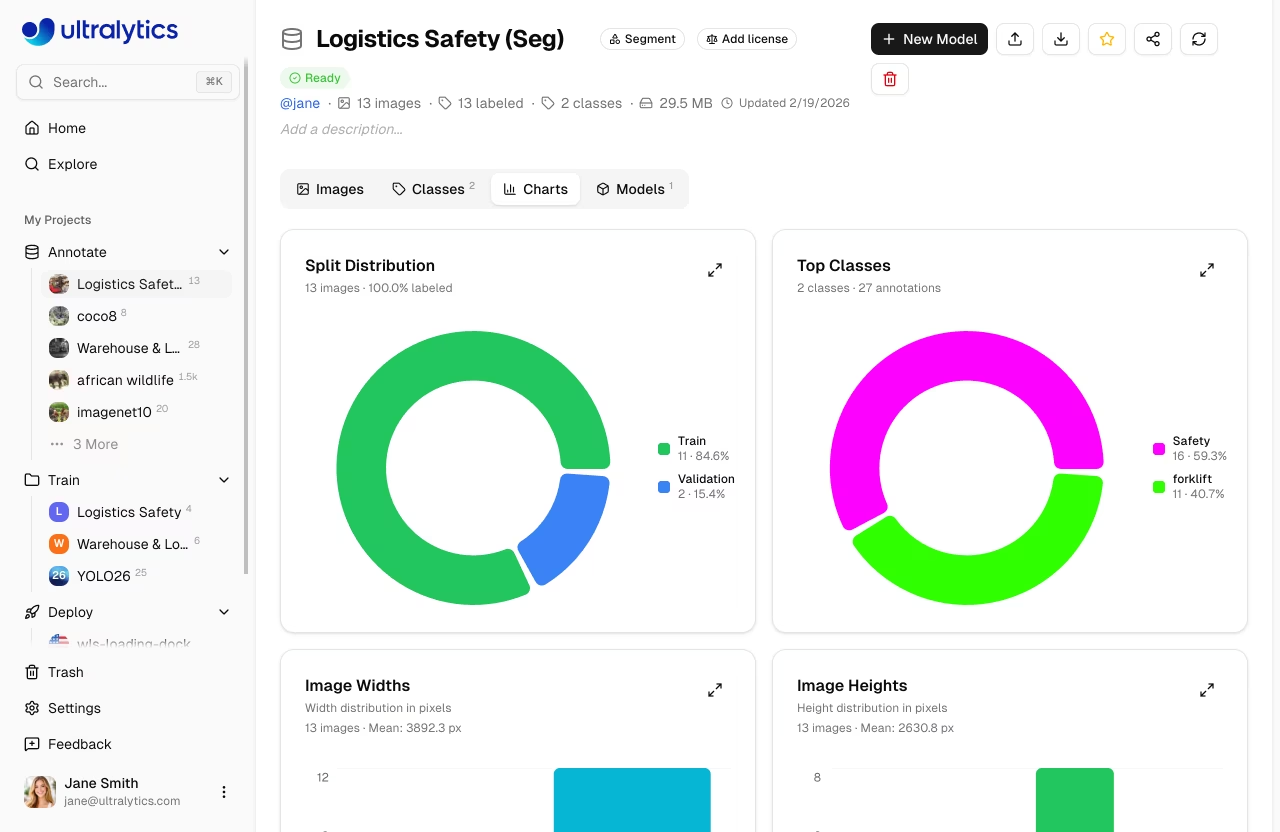

| 5 | Class imbalance | One class dominates, another has < 100 instances | Charts tab class histogram |

| 6 | Split leakage | Same scene / camera / day appears in train AND val/test | Group-by metadata before splitting (lesson 6) |

| 7 | Background images | 0–10% of dataset is unlabeled "no objects here" frames | Count images with zero labels |
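Checks 1 and 7 both reduce to counting labels per image, so they are easy to script. The sketch below is a minimal illustration, assuming the labels have already been loaded into a hypothetical `labels_by_image` mapping (image name to list of class ids). The `ratio=0.25` cutoff for "suspiciously few labels" is an arbitrary assumption, not a recommended value.

```python
from statistics import median
from typing import Dict, List


def background_fraction(labels_by_image: Dict[str, List[int]]) -> float:
    """Check 7: fraction of images that carry zero labels."""
    if not labels_by_image:
        raise ValueError("dataset is empty")
    empty = sum(1 for classes in labels_by_image.values() if not classes)
    return empty / len(labels_by_image)


def low_label_outliers(
    labels_by_image: Dict[str, List[int]], ratio: float = 0.25
) -> List[str]:
    """Check 1: labeled images whose label count is far below the median.

    These are review candidates only; a sparse image is not necessarily wrong.
    """
    counts = [len(classes) for classes in labels_by_image.values() if classes]
    if not counts:
        return []
    cutoff = ratio * median(counts)
    return sorted(
        name
        for name, classes in labels_by_image.items()
        if classes and len(classes) < cutoff
    )


# Hypothetical toy dataset: class ids per image.
demo = {
    "a.jpg": [0, 1, 1, 2, 0, 1, 2, 0],
    "b.jpg": [],                      # background frame
    "c.jpg": [0, 0, 0, 0, 0, 0, 0, 0],
    "d.jpg": [1],                     # far fewer labels than its neighbours
    "e.jpg": [2, 2, 2, 2, 2, 2, 2, 2],
}
print(f"background: {background_fraction(demo):.1%}")
print("review first:", low_label_outliers(demo))
```

Anything the second function returns still needs a human look; it only decides which images to open first.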

Spot-check by sampling

You don't need to review every image. A stratified audit of roughly 100 samples catches systemic issues:

- 10 from each class, picked at random.

- 10 background images.

- 10 "edge cases" from your spec.

- The 10 lowest-confidence predictions from a quick pretrained-model run on the train set.

The last bucket is gold: it surfaces the images the model finds unusual — usually mislabeled or genuinely odd.
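The four buckets can be drawn with a few lines of standard-library Python. This is a sketch under stated assumptions: `images_by_class`, `background`, `edge_cases`, and `confidence` are hypothetical inputs you would build from your own dataset and from a quick pretrained-model run, and the fixed seed is only there to make the audit reproducible.

```python
import random
from typing import Dict, List


def build_audit_sample(
    images_by_class: Dict[str, List[str]],
    background: List[str],
    edge_cases: List[str],
    confidence: Dict[str, float],
    per_bucket: int = 10,
    seed: int = 0,
) -> Dict[str, List[str]]:
    """Draw up to `per_bucket` images for each audit bucket."""
    rng = random.Random(seed)

    def pick(pool: List[str]) -> List[str]:
        pool = sorted(pool)  # fixed order so the seed gives the same draw
        return rng.sample(pool, min(per_bucket, len(pool)))

    sample = {
        f"class:{name}": pick(images)
        for name, images in sorted(images_by_class.items())
    }
    sample["background"] = pick(background)
    sample["edge_cases"] = pick(edge_cases)
    # Lowest-confidence images are taken in order, not at random.
    sample["lowest_confidence"] = sorted(confidence, key=confidence.get)[:per_bucket]
    return sample


# Hypothetical inputs for illustration only.
demo_sample = build_audit_sample(
    images_by_class={
        "forklift": [f"f{i}.jpg" for i in range(50)],
        "person": [f"p{i}.jpg" for i in range(40)],
        "pallet": [f"pl{i}.jpg" for i in range(4)],  # fewer than 10 available
    },
    background=[f"bg{i}.jpg" for i in range(20)],
    edge_cases=[f"edge{i}.jpg" for i in range(12)],
    confidence={f"img{i}.jpg": i / 100 for i in range(100)},
)
for bucket, images in demo_sample.items():
    print(bucket, len(images))
```

The total depends on how many classes you have, and an image can land in more than one bucket; deduplicate the final list if you want an exact count.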

Class balance: what to do when it's broken

Real datasets are imbalanced. The question is whether it'll hurt training.

forklift ████████████████████████ 8000 instances

person ████████████████ 5400

pallet ██ 700 ← red flag

A 10:1 imbalance like the one above will produce a forklift-confident model that misses pallets. Three fixes, in order of preference:

- Collect more pallets. Always the best answer. Aim for ≥ 1500 images and ≥ 10,000 labeled instances of every class, not just the easy ones.

- Oversample at training time. Augment minority-class images more aggressively so each epoch sees them more often.

- Class-weighted loss (advanced). Don't reach for this until the first two options are exhausted.
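To make the imbalance and the oversampling option concrete, here is a small sketch using the instance counts from the chart above. The `max_ratio=10.0` flag threshold and the inverse-frequency weighting are assumptions chosen for illustration; this is one common way to oversample, not necessarily how any particular trainer or the Platform does it.

```python
import random
from typing import Dict, List

counts = {"forklift": 8000, "person": 5400, "pallet": 700}


def class_weights(counts: Dict[str, int]) -> Dict[str, float]:
    """Inverse-frequency weight per class, relative to the largest class."""
    top = max(counts.values())
    return {name: top / n for name, n in counts.items()}


def flag_minority(counts: Dict[str, int], max_ratio: float = 10.0) -> List[str]:
    """Classes whose instance count is at least `max_ratio` times below the top class."""
    weights = class_weights(counts)
    return sorted(name for name, w in weights.items() if w >= max_ratio)


def image_weight(classes_in_image: List[str], weights: Dict[str, float]) -> float:
    """Sampling weight for one image: driven by its rarest class."""
    return max((weights[c] for c in classes_in_image), default=1.0)


weights = class_weights(counts)
print("flagged:", flag_minority(counts))

# Hypothetical images and the classes they contain.
images = {
    "a.jpg": ["forklift"],
    "b.jpg": ["forklift", "pallet"],
    "c.jpg": ["person"],
    "d.jpg": [],
}
names = list(images)
sampling = [image_weight(images[n], weights) for n in names]
# Draw one oversampled "epoch" with replacement; pallet images appear more often.
epoch = random.Random(0).choices(names, weights=sampling, k=8)
print(epoch)
```

Sampling with replacement repeats the same pixels, which is why the text pairs oversampling with heavier augmentation on the minority-class images.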

Dedup once more, after labeling

Even after upload-time dedup, post-labeling dedup catches a different kind of duplicate: visually distinct frames with identical labels. They're usually:

- Two camera angles of the same event with the same labels.

- Sequential video frames where nothing moved.

Drop them — they pad volume without adding training signal.
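One way to surface these candidates is to group images by a signature of their labels. The sketch below assumes YOLO-style normalized boxes `(class_id, x_center, y_center, width, height)` held in a hypothetical `boxes_by_image` mapping; rounding to three decimals is an assumed tolerance, and values that sit near a rounding boundary can still slip through.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Box = Tuple[int, float, float, float, float]  # class_id, xc, yc, w, h (normalized)


def label_signature(boxes: List[Box], decimals: int = 3) -> tuple:
    """Order-independent signature of an image's labels, with rounded coordinates."""
    rounded = [(b[0],) + tuple(round(v, decimals) for v in b[1:]) for b in boxes]
    return tuple(sorted(rounded))


def identical_label_groups(
    boxes_by_image: Dict[str, List[Box]], decimals: int = 3
) -> List[List[str]]:
    """Groups of images that share the same label signature."""
    groups = defaultdict(list)
    for name, boxes in boxes_by_image.items():
        if boxes:  # background images all share the empty signature; skip them
            groups[label_signature(boxes, decimals)].append(name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical labels: frames 001 and 002 differ only by box order and tiny jitter.
demo_boxes = {
    "frame_001.jpg": [(0, 0.5, 0.5, 0.2, 0.3), (2, 0.1, 0.8, 0.1, 0.1)],
    "frame_002.jpg": [(2, 0.1, 0.8, 0.1, 0.1), (0, 0.50004, 0.5, 0.2, 0.3)],
    "frame_003.jpg": [(0, 0.7, 0.4, 0.2, 0.3)],
    "bg_01.jpg": [],
    "bg_02.jpg": [],
}
print(identical_label_groups(demo_boxes))
```

Treat each group as a review list: keep one representative per group only after confirming the frames really show the same event.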

The leakage check is not optional

Random per-image splits leak whenever images are correlated (same scene, same camera, same day). Lesson 6 covers the splitting strategy in depth — but the QC version of the question is:

Does the same scene / camera / day appear in both my train and val splits?

If yes, your val mAP is overstating reality, and the model that ships will be worse than the one you measured. The fix is a grouped split — train and val never share a scene-key. The preprocessing annotated data guide walks through the mechanics; the splits lesson next covers the strategy.
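The QC question above can be answered mechanically once every image has a group key. This sketch assumes two hypothetical mappings, `split_of` (image to split name) and `group_of` (image to a scene/camera/day key you derive from your own metadata). The hash-based `grouped_split` is one simple way to keep a group in a single split; its `val_fraction` applies to groups, so the image-level ratio is only approximate.

```python
import hashlib
from collections import defaultdict
from typing import Dict, List


def leaked_groups(
    split_of: Dict[str, str], group_of: Dict[str, str]
) -> Dict[str, List[str]]:
    """Group keys that appear in more than one split."""
    splits_per_group = defaultdict(set)
    for image, split in split_of.items():
        splits_per_group[group_of[image]].add(split)
    return {g: sorted(s) for g, s in splits_per_group.items() if len(s) > 1}


def grouped_split(group_of: Dict[str, str], val_fraction: float = 0.2) -> Dict[str, str]:
    """Assign whole groups to train or val by hashing the group key."""
    split = {}
    for image, group in group_of.items():
        digest = hashlib.sha256(group.encode("utf-8")).digest()
        bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
        split[image] = "val" if bucket < val_fraction else "train"
    return split


# Hypothetical group keys of the form camera_date.
group_of = {
    "a1.jpg": "cam1_2026-05-01",
    "a2.jpg": "cam1_2026-05-01",
    "b1.jpg": "cam2_2026-05-01",
    "b2.jpg": "cam2_2026-05-01",
}
# A per-image random split that happens to separate a1 and a2.
random_split = {"a1.jpg": "train", "a2.jpg": "val", "b1.jpg": "train", "b2.jpg": "train"}

print("leaks in random split:", leaked_groups(random_split, group_of))
print("leaks in grouped split:", leaked_groups(grouped_split(group_of), group_of))
```

Because the assignment depends only on the group key, adding new images from an existing scene never moves that scene to a different split.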

Why fixing labels beats tuning hyperparameters

A common pattern: model val mAP is 0.62, the team spends two weeks on hyperparameter tuning, and mAP gets to 0.64. Then someone audits the data and finds that 8% of forklift labels are misclassified as pallet. They fix the labels and retrain, and mAP jumps to 0.78.

This is the rule of thumb: before changing anything else, fix the data. The fine-tuning guide, the YOLO performance metrics guide, and the preprocessing annotated data guide all reinforce it. Models are good at generalizing from clean signal; they can't generalize from noise.

Document the QC result

Before training, write a one-page QC report:

Dataset: forklift_v1

Audit date: 2026-05-09

Total images: 4,820 | Total labels: 18,442

Background images: 380 (7.9%)

Per-class instances:

forklift 6,801

person 8,310

pallet 3,331

Visual audit: 120 images sampled, 4 mislabeled (3.3%), fixed.

Duplicates removed: 312

Leakage check: split-by-day, no scene overlap.

Class imbalance: pallet ~2.5× lower than person; ok, not collecting more this round.

Ready to train: yes.

This report is also the artifact you hand to the auditor or the customer. "Yes, we audited the dataset" with no document is not the same as "yes, here's the audit."
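The derived figures in the sample report (total labels, background percentage, audit error rate, imbalance ratio) are simple arithmetic on the raw counts, so it is worth computing them rather than typing them by hand. A minimal sketch, with `qc_summary` and its field names being hypothetical:

```python
from typing import Dict


def qc_summary(
    total_images: int,
    background_images: int,
    per_class: Dict[str, int],
    audited: int,
    mislabeled: int,
) -> Dict[str, object]:
    """Derive the computed lines of the QC report from raw counts."""
    largest = max(per_class, key=per_class.get)
    smallest = min(per_class, key=per_class.get)
    ratio = per_class[largest] / per_class[smallest]
    return {
        "total_labels": sum(per_class.values()),
        "background_pct": round(100 * background_images / total_images, 1),
        "audit_error_pct": round(100 * mislabeled / audited, 1),
        "imbalance": f"{smallest} ~{ratio:.1f}x lower than {largest}",
    }


# Raw counts taken from the sample report above.
summary = qc_summary(
    total_images=4820,
    background_images=380,
    per_class={"forklift": 6801, "person": 8310, "pallet": 3331},
    audited=120,
    mislabeled=4,
)
for key, value in summary.items():
    print(f"{key}: {value}")
```

Run against the sample report's counts, this reproduces its derived figures, which doubles as a consistency check before the report goes out.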

Run the seven checks on your dataset. Find at least one issue (you will). Fix it, then re-run. Write the QC report — one page, the format above.

All seven QC checks pass or have a documented exception.

Class histogram is acceptable (no class < 1500 images / 10,000 instances, or you've documented why).

Background images are 0–10% of the dataset.

You have a written one-page QC report ready to hand off.

Clean dataset. Next: split it without leaking the test set into your val mAP.