Split the Dataset Correctly

Train, val, and test — and the leakage rules that decide whether your metrics are honest.

A bad split is worse than no split — it gives you a number you trust that isn't true. The split decision is one-way: every metric you'll ever publish about this model is tied to it. Get it right once, and never touch it again.

Produce train / val / test splits with no leakage, balanced class distributions, and documentation that future-you (or an auditor) can verify.

Baseline: 70% train, 15% val, 15% test (of objects, not images).

Split by the right unit — scene, camera, day, location — not always by image.

Stratify class proportions across splits.

Lock the test set away. Touch it once at the end, not weekly.

Hands-on

The 70/15/15 baseline

For most enterprise projects, a sensible starting split is:

| Split | Share | Purpose |
|---|---|---|
| Train | 70% | Model learns weights from this |
| Val | 15% | Pick best epoch, tune hyperparameters, compare runs |
| Test | 15% | Final go/no-go before deployment; touch once |

Adjust if your dataset is small (more weight on train), or if you want a stricter regression suite (more weight on test). The exact percentages matter less than the discipline.

Train ~70%, val ~15%, test ~15% — measured in objects, not just images.

Split by the right unit

This is the lesson most teams learn the hard way. Random per-image splits leak when frames are correlated:

bad: random per-image split
┌──────────────────────────────────────┐
│ video1-frame-0124 → TRAIN            │
│ video1-frame-0125 → VAL   ← leaked!  │
│ video1-frame-0126 → TRAIN            │
└──────────────────────────────────────┘
val mAP looks great. production mAP doesn't.

good: split by scene (group all frames from one video)
┌──────────────────────────────────────┐
│ video1 → TRAIN (all 1800 frames)     │
│ video2 → VAL   (all 1500 frames)     │
│ video3 → TEST  (all 2200 frames)     │
└──────────────────────────────────────┘

Pick the split unit that matches the generalization you care about at deployment:

| Deployment generalization | Split by |
|---|---|
| Same camera, new days | Day |
| Same site, new cameras | Camera |
| Same product line, new sites | Location |
| New customer entirely | Tenant / customer |
| Video-based detection | Scene / source video |

When in doubt, split by the most general unit — it gives the most honest metrics.
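A group-aware split can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the helper `split_by_group`, the frame naming scheme, and the greedy "fill the neediest split" rule are all assumptions made for the example. It balances by frame count; to balance by objects, you would weight each group by its object count instead.

```python
import random
from collections import defaultdict

def split_by_group(items, group_of, ratios=(0.70, 0.15, 0.15), seed=0):
    """Assign whole groups to train/val/test so no group straddles two splits."""
    groups = defaultdict(list)
    for item in items:
        groups[group_of(item)].append(item)

    keys = sorted(groups)                     # fixed order before shuffling
    random.Random(seed).shuffle(keys)         # seeded, so the split is reproducible
    keys.sort(key=lambda k: -len(groups[k]))  # largest groups first (stable sort)

    names = ("train", "val", "test")
    targets = {n: r * len(items) for n, r in zip(names, ratios)}
    splits = {n: [] for n in names}
    for key in keys:
        # Give the whole group to the split that is furthest below its target size.
        neediest = max(names, key=lambda n: targets[n] - len(splits[n]))
        splits[neediest].extend(groups[key])
    return splits

# Hypothetical frame names: 10 videos with 20 frames each.
frames = [f"video{v}-frame-{f:04d}" for v in range(10) for f in range(20)]
video_of = lambda name: name.split("-frame-")[0]
splits = split_by_group(frames, video_of)
```

With only a handful of groups, the achieved ratios can land well away from 70/15/15. That is the price of keeping groups intact, and it is usually worth paying.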

Stratify class distributions

Random splits can starve a small class. With 70 pallet labels, a 70/15/15 split might land 7 pallets in val — too few for a meaningful AP. Stratification fixes this by forcing class proportions to match across splits:

without stratification: with stratification:

train: 50 pallets / 4500 imgs train: 49 pallets / 4500 imgs

val: 7 pallets / 900 imgs val: 11 pallets / 900 imgs

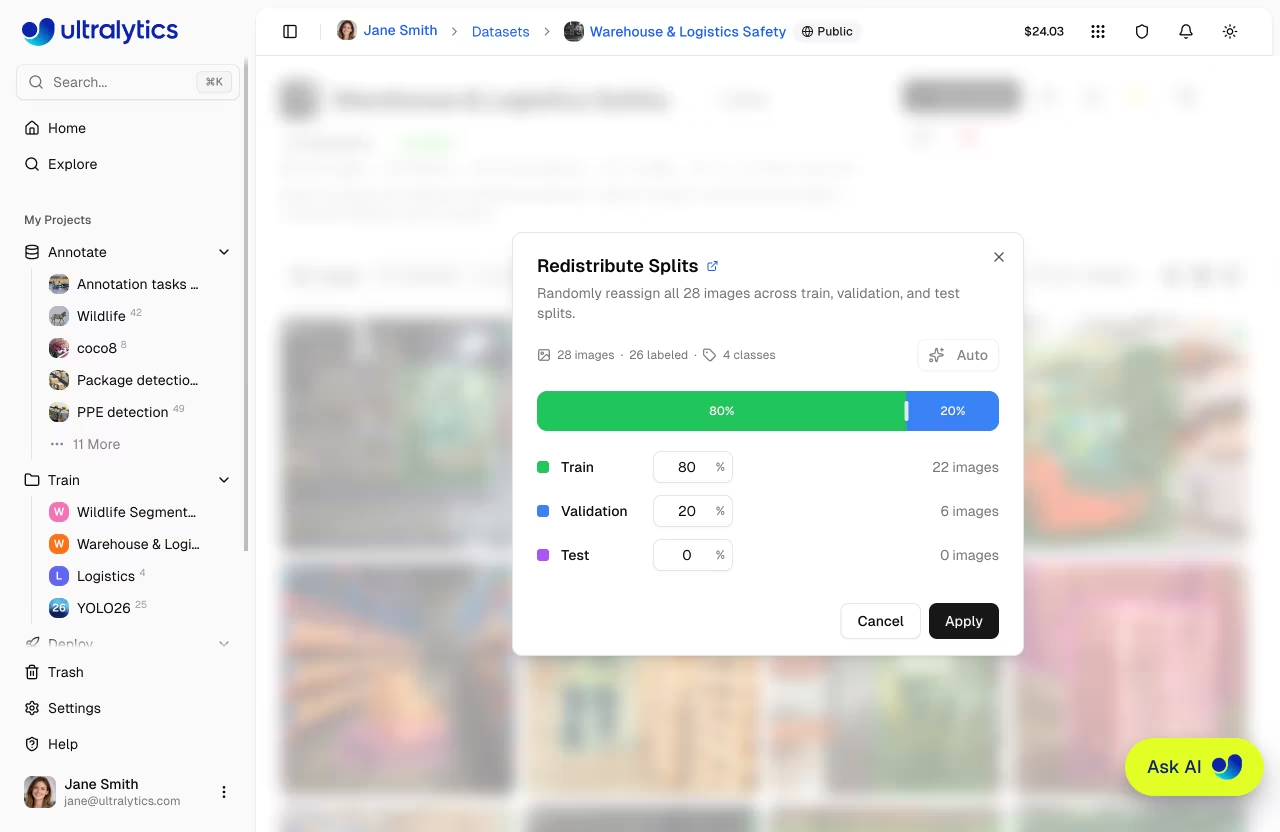

test: 13 pallets / 900 imgs test: 10 pallets / 900 imgsMost modern tooling (Ultralytics Platform, scikit-learn, Roboflow) does stratified splits with one toggle.
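To make the idea concrete, here is a rough sketch of stratification in plain Python. It assumes the simplest case, one class label per item (for example, cropped objects); detection images that contain several classes need a multi-label strategy that this sketch does not cover. The helper name `stratified_split` and the item ids are hypothetical, and the sketch ignores the group constraint described earlier.

```python
import random
from collections import Counter, defaultdict

def stratified_split(labels, ratios=(0.70, 0.15, 0.15), seed=0):
    """labels: dict of item id -> class name. Returns dict of item id -> split name."""
    by_class = defaultdict(list)
    for item, cls in labels.items():
        by_class[cls].append(item)

    rng = random.Random(seed)
    assignment = {}
    for cls in sorted(by_class):
        members = sorted(by_class[cls])
        rng.shuffle(members)
        # Cut every class at the same fractions, so each split gets its share.
        n_train = round(len(members) * ratios[0])
        n_val = round(len(members) * ratios[1])
        for i, item in enumerate(members):
            if i < n_train:
                assignment[item] = "train"
            elif i < n_train + n_val:
                assignment[item] = "val"
            else:
                assignment[item] = "test"
    return assignment

# Hypothetical data: 70 pallet items and 630 forklift items.
labels = {f"pallet{i}": "pallet" for i in range(70)}
labels.update({f"forklift{i}": "forklift" for i in range(630)})
assignment = stratified_split(labels)
pallets_per_split = Counter(
    split for item, split in assignment.items() if labels[item] == "pallet"
)
```

Because each class is cut separately, the rare class can no longer end up with a handful of examples in one split by bad luck.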

Multiple validation sets

The Ultralytics YOLO data YAML accepts a list of val sets — useful when you want both a curated regression set and a fresh production-realistic one:

path: ./datasets/forklift
train: images/train
val:
  - images/val_curated     # stable, your regression suite
  - images/val_production  # rotates with newly collected frames
test: images/test

The curated set tracks regressions; the production-realistic set tracks domain shift.

Lock the test set

The single most expensive mistake: comparing 10 model variants on the test set. Once the test set has informed any decision, it's training data, and your final number is dishonest. Two rules:

- All hyperparameter tuning and run comparison happens on val.

- Test is touched once, at the end, for a single go/no-go decision.

If you need richer comparisons, keep multiple val sets — never multiple touches of test.
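One lightweight way to make the lock verifiable is to record a fingerprint of the test set's membership when you create the split, then recompute it before the final evaluation. The sketch below is an assumption about how you might do this, not a feature of any particular tool: `split_fingerprint` is a hypothetical helper, and it covers only the list of item ids, not the image or label contents.

```python
import hashlib

def split_fingerprint(item_ids):
    """SHA-256 over the sorted item ids.

    The digest changes if any item is added, removed, or renamed,
    and does not depend on the order the ids are listed in.
    """
    digest = hashlib.sha256()
    for item in sorted(item_ids):
        digest.update(item.encode("utf-8"))
        digest.update(b"\n")  # separator so adjacent ids cannot run together
    return digest.hexdigest()

# Hypothetical test-set ids; store the digest next to your split documentation.
test_ids = ["video3-frame-0001", "video3-frame-0002", "video3-frame-0003"]
recorded = split_fingerprint(test_ids)

# Later, before the final go/no-go evaluation:
unchanged = split_fingerprint(test_ids) == recorded
```

A fingerprint cannot stop anyone from peeking at the test set, but it does let an auditor confirm that the set being reported on is the one that was originally locked.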

Once you've trained against a split, every metric you publish is tied to it. Changing the split changes every number. Decide carefully, document the decision, and don't quietly re-shuffle later.

k-fold cross-validation for small datasets

If your dataset is small (< 500 images / class) and a single 70/15/15 split feels statistically flimsy, use k-fold cross-validation: train k models, each validated on a different fold, and average the metric (see also the cross-validation glossary entry). It costs k× the GPU time but gives a much more reliable mAP estimate, which makes it worth it for small, expensive datasets.
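As a sketch of the mechanics, the folds can be built over groups (for example, source videos) so that the leakage rule from earlier still holds inside each fold. The names `k_fold_groups` and `summarize` are hypothetical, and the round-robin fold assignment is a simplifying assumption; it does not stratify by class.

```python
import random
import statistics

def k_fold_groups(group_keys, k=5, seed=0):
    """Yield (train_groups, val_groups) k times; each group is validated on exactly once."""
    keys = sorted(set(group_keys))
    if k < 2 or k > len(keys):
        raise ValueError("k must be between 2 and the number of groups")
    random.Random(seed).shuffle(keys)
    folds = [keys[i::k] for i in range(k)]  # round-robin assignment to k folds
    for i in range(k):
        val = set(folds[i])
        train = [g for g in keys if g not in val]
        yield train, sorted(val)

def summarize(scores):
    """Mean and sample standard deviation of the per-fold metric."""
    return statistics.mean(scores), statistics.stdev(scores)

# Hypothetical group ids: 10 source videos, 5 folds of 2 videos each.
folds = list(k_fold_groups([f"video{i}" for i in range(10)], k=5))
```

Train one model per `(train, val)` pair, collect the k validation scores, and report `summarize(scores)`. The standard deviation across folds shows how much of a difference between runs is just split noise.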

For more on splitting mechanics — folder layout, stratified sampling, and how to encode the split decision in a data.yaml — see the preprocessing annotated data guide.

Pick the split unit for your project (image / scene / camera / day / location). Justify the choice in one sentence. Then create a stratified 70/15/15 split in your tool of choice, and confirm class proportions match across splits.

You've picked and documented the split unit.

Class proportions are within ±20% across train, val, and test.

No scene / camera / day appears in more than one split.

The test set is locked — only touched at the end.
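Two of the checks above are easy to automate. The following is a minimal sketch with hypothetical helper names. It reads "within ±20%" as a relative tolerance: each class's share of the objects in a split must be within 20% of that class's overall share. That reading is an assumption, so adjust it if your project defines the tolerance differently.

```python
from collections import defaultdict

def leaked_groups(group_split_pairs):
    """Return the groups (scene, camera, day, ...) that appear in more than one split."""
    seen = defaultdict(set)
    for group, split in group_split_pairs:
        seen[group].add(split)
    return sorted(g for g, found_in in seen.items() if len(found_in) > 1)

def proportions_within(counts, tolerance=0.20):
    """counts: dict of split -> dict of class -> object count."""
    classes = {c for per_split in counts.values() for c in per_split}
    grand_total = sum(sum(p.values()) for p in counts.values())
    if grand_total == 0:
        return False
    for cls in classes:
        overall = sum(p.get(cls, 0) for p in counts.values()) / grand_total
        for per_split in counts.values():
            split_total = sum(per_split.values())
            if split_total == 0:
                return False  # an empty split cannot match any proportion
            share = per_split.get(cls, 0) / split_total
            if abs(share - overall) > tolerance * overall:
                return False
    return True

# The leaky per-image split from earlier: video1 shows up in both train and val.
pairs = [("video1", "train"), ("video1", "val"), ("video2", "val")]
leaks = leaked_groups(pairs)  # ["video1"]
```

An empty result from `leaked_groups` and `True` from `proportions_within` are good candidates to record alongside the split documentation.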

Splits ready. Next: a careful look at augmentation, covering what to use and what not to use.